Sydney Saubestre

Senior Policy Analyst, Open Technology Institute

As artificial intelligence (AI) becomes more integrated into higher education, institutions find themselves balancing two competing imperatives: promoting open data access and collaboration to drive innovation and research, and protecting student privacy and institutional autonomy. While AI promises more personalization, predictive insights, and resource optimization for students and educators, its reliance on large-scale student data raises risks of surveillance, discrimination, and educational technology (edtech) vendor overreach. Institutions handle their students' data in diverse ways in the age of AI, ranging from open-arms data sharing to risk-averse data hoarding. A third option, intentional and collaborative data governance, offers a way to harness AI's potential while safeguarding the core values of higher education.

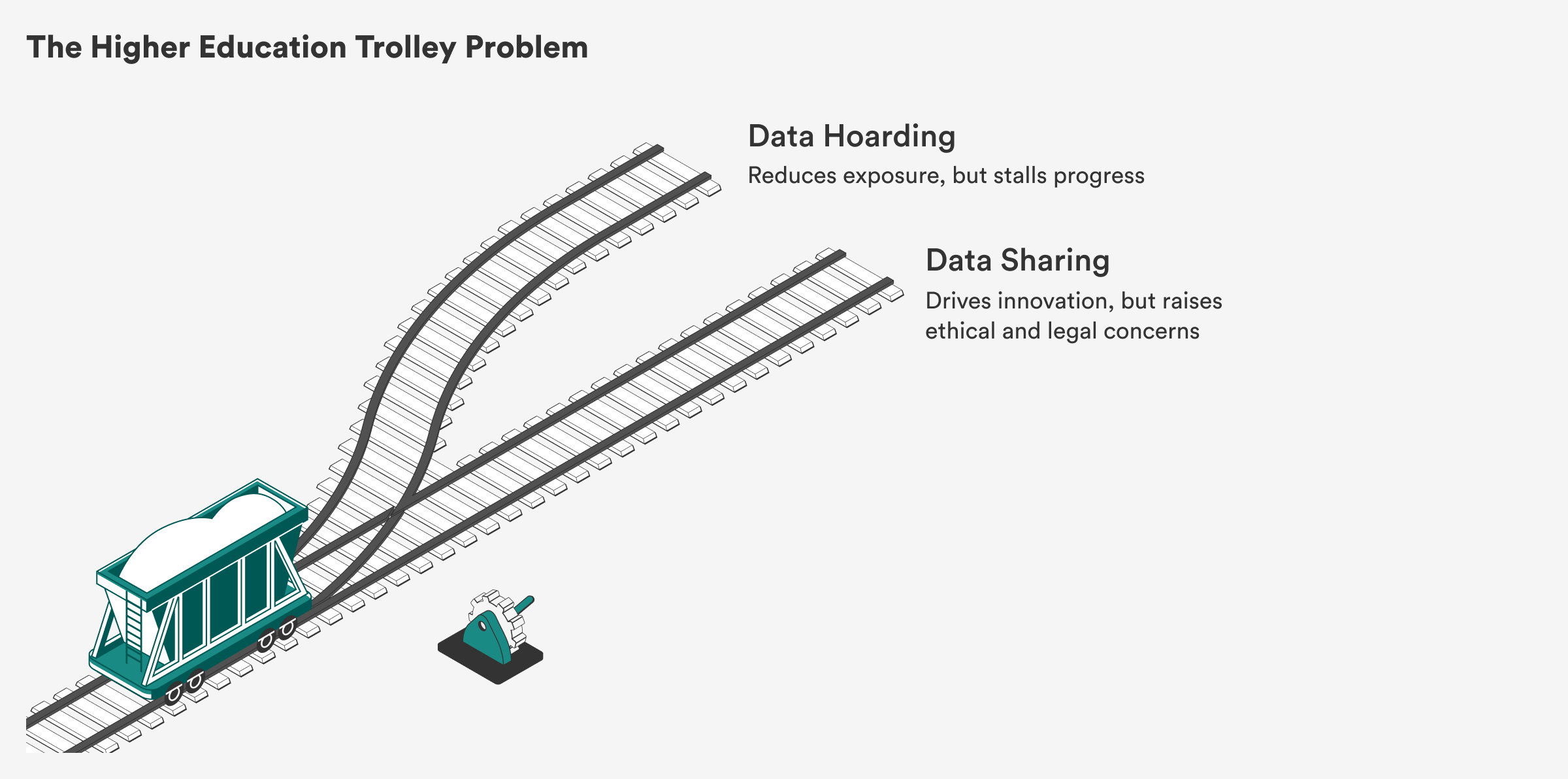

The rapid advancement and reactive implementation of artificial intelligence in higher education have created a fundamental tension: How can institutions pursue open collaboration with other institutions or outside partners while also protecting the privacy of their students, faculty, and staff? The reality is a genuine dilemma, in which pursuing one goal often means sacrificing the other. Institutional actions shape data governance, technological ethics, and what it even means to be an institution of higher education in the age of AI. Too often, these decisions default to either overreach or paralysis, the latter especially as schools adopt a misguided belief in AI as inherently neutral or benevolent.

Universities once collected limited information about their students and focused on producing knowledge. Today, they are able to collect detailed, real-time information on students, from practice test scores to time spent on materials to how often they access class readings. All of this data is created as students move through digital platforms designed to track them. This information is increasingly viewed not just as a byproduct of the students' education, but also as a resource to optimize the educational system itself. Because data-driven predictive AI insights might help further research, improve retention, or identify at-risk students, universities are told that using the data is responsible and forward-looking. That same logic means that higher education institutions view not using the data as falling behind.

AI is often described as a force multiplier, a tool that simply becomes better the more data it's fed. But data isn't magic. It's not inherently powerful or good. Data's value, and its risk, depends entirely on how and why it's used and who controls it. Further, AI models will more consistently generate useful information if given focused, curated data, rather than entire datasets that haven't been cleaned or filtered. Decisions about how data is collected, structured, protected, and shared shape the kinds of systems universities enable, and whether those systems serve institutional values or undermine them.


There are potential benefits in implementing AI to bolster learning: greater personalization, real-time feedback, and improved decision-making tailored to diverse student needs. But those potential benefits have been used to justify an expanding appetite for student data, often without clear boundaries or sufficient safeguards. AI systems are supposed to depend on large volumes of standardized, high-quality data, but that technical requirement is frequently misinterpreted as a license to collect data across all aspects of student life. In doing so, campuses and classrooms become sites of continuous surveillance, monitoring not only academic outcomes but behavioral cues and patterns of engagement, all in the potential service of educational goals. The shift isn't just pedagogical; it also moves power from faculty and administrators to opaque systems built by private vendors.

Too often, data sharing is framed as inherently good and data hoarding as inherently bad. But institutions should be grappling with how to hold their students' data, with whom to share it, and for what purpose they are holding it in the first place. The kind of data sharing that could truly benefit higher education (between departments, across institutions, or even making data open source) remains rare and under-resourced. It's also hard to implement within current limited conceptions of data governance. To move forward, institutions need to redefine what sharing means, not as an open invitation for institutions to extract students' information but as a practice of collaborative stewardship that is intentional, centered around privacy, and aligned with their missions.

Most institutions lack the capacity to collect and use students' information efficiently, so they turn to third-party vendors offering platforms that promise both insights and efficiency. The result: Instead of building internal capacity to use data in ways that align with academic values, institutions outsource key functions and student data to external platforms. These vendors offer polished tools and promise greater efficiency, but in practice, they seize control and obscure the educational institution's visibility. Data flows upward into proprietary systems where institutions may have limited access and limited ability to adapt tools to evolving needs. Meanwhile, the potential for data to support core educational goals (enhancing instruction, informing research, strengthening student well-being) is under-explored.


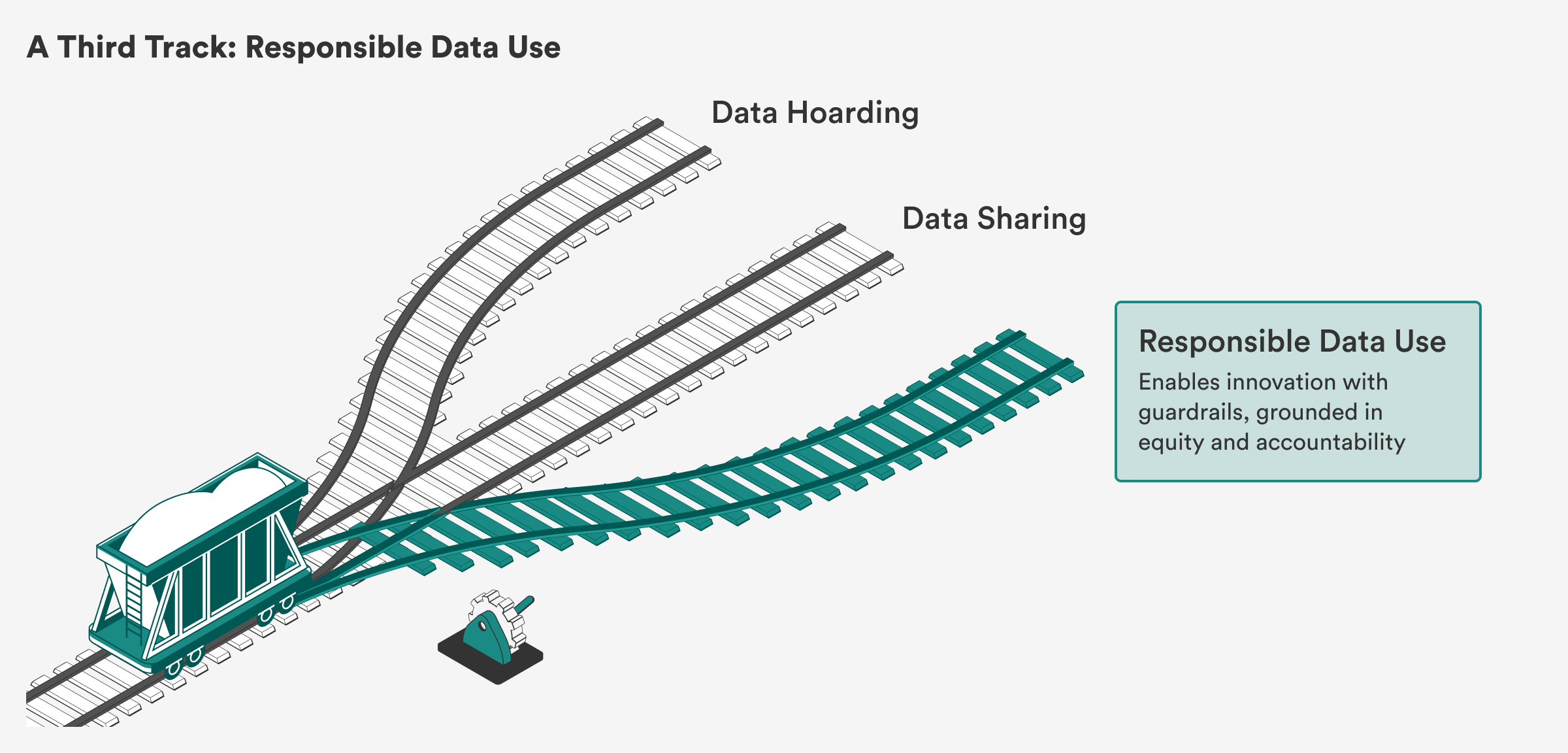

To truly use AI to improve their educational goals, institutions must reject the false binary of data hoarding versus sharing. They must instead build structures that prioritize ethical collaboration over extractive use. AI's role in higher education is not inevitable; it is a choice that universities must make with intention, vision, and care.

Suppose you're a university administrator, charged with upholding the institution's mission to educate, inquire, and serve. In front of you is a lever that can switch a trolley along one of two tracks: One promises accelerated learning through AI, but it does so at the potential cost of giving up control over the institution's data, its reputation, and its responsibilities to students and faculty. The other track rejects AI use and preserves caution, shielding the institution from liability, public scrutiny, and unintended consequences, but at the potential expense of innovation, improved student outcomes, and institutional relevance.

It's a false dichotomy, but a persuasive one. Universities are told they must either embrace AI to stay relevant or risk falling behind. Pressure is rising from all sides: AI vendors promise efficiency with insights for classroom management and individualized learning, peer institutions tout cutting-edge innovation stemming from their ability to use AI for student recruitment or retention, and the public expects universities to engage with AI in some way. In this context, adopting AI feels like a stark tradeoff between progress and control. But that framing misses what is possible. The real choice isn't between reckless adoption and total avoidance; it's between ceding control to outside forces and shaping AI to serve the university's mission.

This moment demands more than binary thinking. The core question is not whether institutions should share student data, or even with whom, but how to share responsibly, with clarity about who is being served and what needs protecting.

Higher education institutions must recognize that there is a third track, one that avoids both reckless data extraction and risk aversion. This middle path requires building new governance structures, not just choosing between two bad options. This track doesn't reject AI, but reclaims it by asking: How can universities shape AI to serve learning, equity, and the public good, rather than letting it reshape them?


The false choice between data hoarding and indiscriminate sharing misses the point: Effective governance depends on aligning data practices with a clear purpose and a clear beneficiary. Privacy, equity, and openness are not mutually exclusive; they must be pursued in tandem, with design and accountability structures that prioritize the needs and rights of students, faculty, and staff. The real task is to clarify to whom institutions are accountable (primarily students, faculty, and the broader higher education system) and to design data practices that reflect that obligation.

Universities often invoke the public interest or communal good to justify data use, especially for interventions like predictive analytics or fairness audits. However, these justifications frequently bypass individual consent and transparency, creating imbalances in who benefits from data use and who bears the risk. Collective governance models that seek to expand data use without establishing formal mechanisms for meaningfully protecting individual agency risk replicating the very power imbalances they seek to correct. AI implementation complicates this balance further, especially when institutions partner with companies like OpenAI, Microsoft, Turnitin, and Civitas Learning to implement AI tools. These partnerships often rest on broad or opaque agreements, heightening the risk that student data will be repurposed, commodified, or exposed without student knowledge or institutional oversight.

Many institutions struggle with siloed data and limited capacity for integration, creating bottlenecks. Institutional research (IR) offices and information security offices (ISO) are increasingly tasked with reviewing or brokering all internal data access requests, often without additional resources or clear frameworks for decision-making. As risk aversion calcifies, these gatekeeping structures become obstacles. Faculty and institutional researchers trying to build student success models or early warning systems encounter red tape, long delays, or flat denials. Innovation slows. And in the absence of viable internal pathways, universities begin to turn outward, outsourcing analytics and AI development to vendors.

Meanwhile, edtech companies gain significant rights over data use, retention, and model training, often beyond the purpose of collection. What looks like data hoarding is often vendor overuse: Data flows out to third parties under broad agreements, but the university and students see little of the benefit. Universities frequently lack visibility into how vendors are using student data, even when the systems involved directly affect instruction or advising, further weakening universities' ability to shape AI systems in line with their missions.

Under-resourced institutions face a particular challenge. Lacking privacy and technical teams, they have to rely on vendors, fearing they will otherwise fall behind. The resource gap widens as well-supported universities negotiate partnerships, while others stay bound to opaque systems.

Meanwhile, the question of consent and ownership remains murky. Universities collect vast amounts of sensitive information, but students rarely give meaningful or informed consent for secondary uses of that data. Consent processes are often buried in agreements with platforms, and institutions routinely claim ownership of student data by default. Few formal mechanisms exist to evaluate AI tools, and student voices are excluded from procurement decisions.

Existing legal frameworks fail to fill the gaps. FERPA, the Family Educational Rights and Privacy Act, remains the primary federal safeguard, but it was not designed to address metadata (a set of data that describes other data) or inference (the process by which an AI system draws conclusions or makes predictions based on patterns in data). It offers limited protections for modern AI systems and does not impose strong accountability requirements on third-party vendors. This regulatory vacuum is compounded by inconsistent procurement processes, which allow irregular contracting and opaque vendor relationships to flourish.

The result is an identity crisis for higher education institutions. Although risk-averse policies may limit exposure, they can also reduce transparency, stifle experimentation, and degrade internal technical capacity. Innovation gets displaced from the public to the private sphere, weakening universities' role as stewards of student data and trusted actors in the AI ecosystem. Over time, this drift leaves institutions with less insight, less flexibility, and less say in how AI reshapes teaching, learning, and student life.

It's clear that universities should respond to AI thoughtfully, and they must consider the perspectives of students when making their choices. Teaching students how to prompt ChatGPT is not the same as designing systems that empower inquiry, equity, and democratic knowledge; there is a difference between teaching for consumption of AI and teaching for creation with AI. Even more "tracks" emerge depending on how university administrations decide to implement artificial intelligence across the institution: Do they prioritize privacy, consent, ownership, convenience, or institutional autonomy?


Despite assurances from institutions of ethical intent, many students don't know how their data is collected, analyzed, and shared, particularly when it is repurposed for uses beyond direct educational support. Students have expressed discomfort with opaque data practices, often describing a reluctant acceptance of university systems and a deep concern about the ethical implications of how their information is handled. These concerns reflect not only a lack of transparency but also a failure of institutional accountability in meaningfully engaging students as stakeholders in data use and sharing. Furthermore, consent is often buried in institutional clauses embedded in documents that students may need to sign, such as enrollment agreements or digital platform terms of service, rendering that information inaccessible or unintelligible.

This lack of transparency and student involvement not only limits students' agency over their own data but also stifles opportunities for them to actively shape and master AI technology. Instead of addressing these problems, universities often direct their resources to concerns like AI plagiarism detection, overlooking the critical need to engage students in understanding, questioning, and mastering the technologies that shape their education and the world at large. By excluding students from these conversations, institutions miss the chance to engage them as collaborators in developing ethical AI systems, foster critical thinking, and prepare them to navigate and influence the technological landscape of the future.

As AI becomes more integral to campus operations, the governance structures that are meant to provide oversight, namely institutional review boards (IRBs), were not designed to assess the ethical risks posed by algorithmic systems or data-intensive platforms, leaving significant gaps in the institutional safeguards meant to protect students. But the risk of harm toward students extends far beyond a classroom; notably, many students are entirely unaware that their data might be used for research and predictive analytics or shared with third-party vendors. Predictive analytics tools, while often deployed to improve student retention or identify "at-risk" learners, can reinforce existing systemic inequalities. For instance, algorithmic models trained on historical data can produce predictions that disproportionately flag students from marginalized socioeconomic or racial backgrounds, leading to mislabeling, over-policing, or uncontextualized interventions.

As universities work to balance data protection with open access, meaning data that is freely available for anyone to view, use, or share, several promising models within higher education and beyond serve as worthwhile precedents for ethical and participatory data governance.

Within higher education, student data cooperatives are emerging to restore student agency in AI governance. Stanford researchers have proposed data cooperatives as fiduciary intermediaries that can negotiate with companies and third-party vendors on guidelines around data sharing and access, as well as how benefits from that data are distributed. As a speculative model, these data co-ops could enhance trust within universities, providing a substantial avenue through which students can have their rights represented in AI development and adoption.

To balance open data for research innovation with student privacy, universities can consider using tiered data access systems, which offer different levels of access depending on a user's role, purpose, and training. These systems allow for granular control over who can view and work with data to ensure its responsible use, offering a way to adjust the tradeoffs between research utility and privacy protection. While tiered data access systems aren't necessarily new innovations within large organizations, more examples are emerging in higher education. One such program requires researchers to submit detailed proposals, undergo ethical reviews, and justify their data use based on learner privacy considerations. Another offers protected sandboxed environments for working with sensitive institutional datasets, minimizing the risk of misuse.
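
For readers who want a concrete picture, the access decision in a tiered system can be sketched in a few lines of Python. This is a minimal illustration only: the three tiers, the role names, and the rules below are hypothetical assumptions made for the example, not a description of any institution's actual system.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    """Hypothetical access tiers, ordered from least to most sensitive."""
    AGGREGATE = 1      # summary statistics only
    DEIDENTIFIED = 2   # row-level records with identifiers removed
    IDENTIFIED = 3     # row-level records that include student identifiers


@dataclass(frozen=True)
class AccessRequest:
    role: str                # hypothetical role label, e.g. "faculty"
    purpose_approved: bool   # the stated purpose passed review (e.g. ethics review)
    privacy_training: bool   # the requester completed privacy training


# Hypothetical policy: the most sensitive tier each role can ever reach.
ROLE_CEILING = {
    "vendor": Tier.AGGREGATE,
    "faculty": Tier.DEIDENTIFIED,
    "institutional_researcher": Tier.IDENTIFIED,
}


def granted_tier(request: AccessRequest) -> Optional[Tier]:
    """Return the most sensitive tier the request qualifies for, or None."""
    ceiling = ROLE_CEILING.get(request.role)
    if ceiling is None:
        return None  # unknown roles get no access by default
    if not request.purpose_approved:
        return None  # no approved purpose means no access at any tier
    if not request.privacy_training:
        return Tier.AGGREGATE  # untrained users are capped at aggregate data
    return ceiling
```

In this sketch, role sets a ceiling, an approved purpose is a precondition for any access, and training unlocks the more sensitive tiers. An institution could weigh those three factors differently; the point is only that each factor is checked explicitly and that the default is denial.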

Some initiatives are creating interoperable data standards across institutions, shedding light on a critical need to standardize how universities across the country assess and procure AI software. Without a method of standardization or the required expertise, third-party vendors will often fill the gap by suggesting terms and settings that best serve them but do not necessarily benefit the institutions or the students themselves. A data standard model shows what may be feasible when universities engage in knowledge-sharing to solve problems. Doing so also increases their collective leverage; rather than negotiating alone with vendors, they can act together, demanding tools that are transparent, effective, and accountable. Collaboration is what gives institutions the power to set the terms.

Universities could also consider applying models that are usually used outside of higher education. Civic data trusts, which have been used by local government and hospital systems, enable community-governed data agreements, where stakeholders collectively decide how data is shared, used, and protected. Civic data trust frameworks emphasize the idea of stewardship over ownership, prioritizing collective benefit over extraction.

Pursuing pro-social goals is not only legitimate; it is also essential to the mission of higher education. But as institutions scale AI systems in service of these aims, they must confront the reality that maximizing aggregate impact can obscure harm to individuals, especially those who do not conform to dominant data patterns. This is not a reason to abandon AI-driven innovation but a call to design for transparency and individual agency. The real challenge in using AI in higher education is not choosing between public benefit and individual rights, but designing systems that recognize and reconcile both so that ethical intentions don't ultimately cost the very students they seek to serve.

Universities today might believe they face only two options: Continue down the track of ad hoc, reactive policies, lurching from one AI disruption to the next, or impose rigid controls that risk stalling innovation and undermining their academic missions. But a third, better path exists: reimagining governance itself.

Rather than treating AI as disruptive innovation that they must race to adopt, universities can build governance frameworks that deliberately protect their core values (academic freedom, equity, openness) while enabling responsible, thriving innovation. Governance is not a brake on progress; it is the architecture that makes durable, mission-aligned innovation possible.

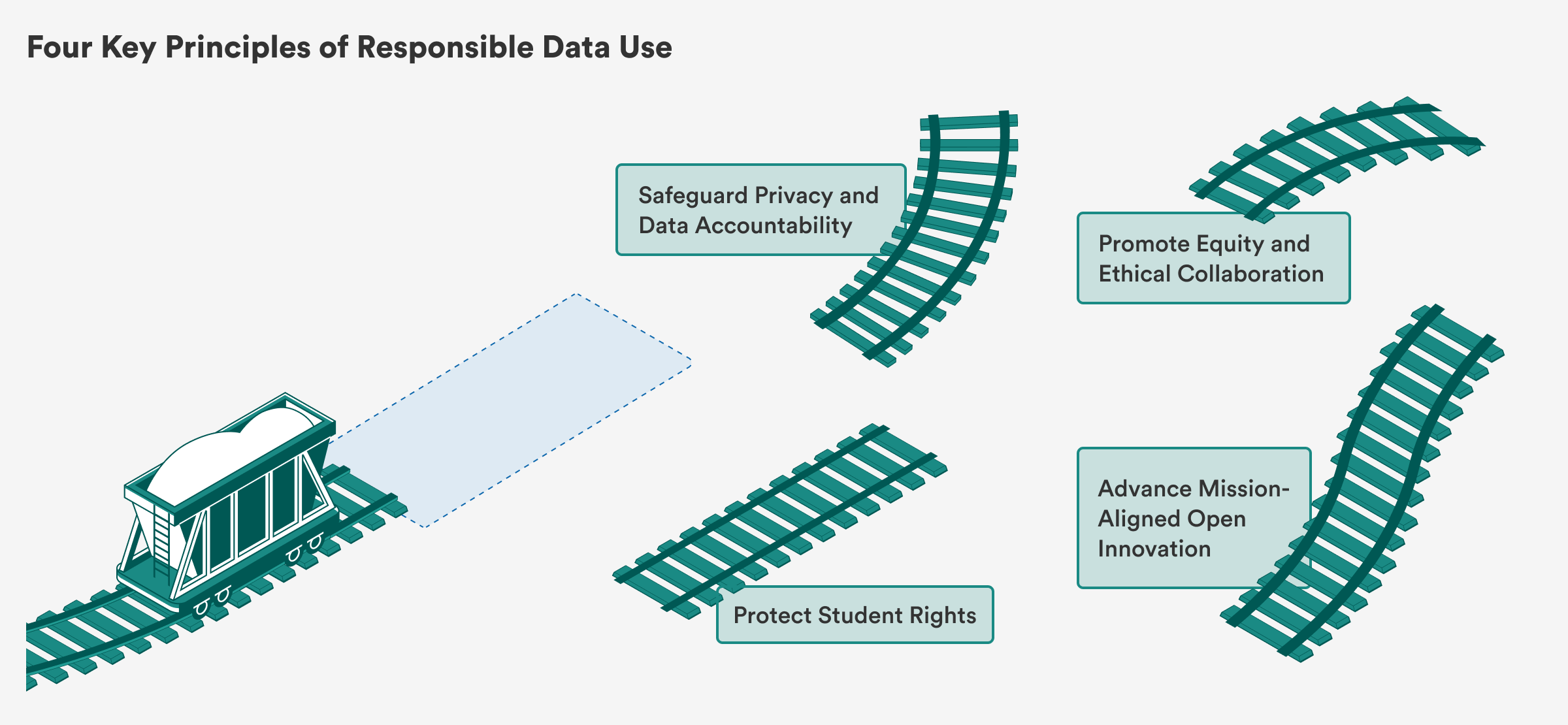

This reimagined governance model rests on four key principles:

Good governance is not about saying "no" to AI; it's about making deliberate, mission-aligned choices about where, how, and why to adopt it. In practical terms, this means mapping each AI use case (such as adaptive tutoring, or early warning systems for students at risk of not meeting their degree requirements) back to explicit institutional objectives, such as retention rates, pedagogical improvement, or research breakthroughs, and evaluating tools by how directly they further those goals. A proactive governance framework gives universities the confidence to experiment responsibly: setting clear standards, protecting community members, and ensuring that new technologies deepen, rather than erode, the university's academic and civic role. Without it, institutions risk becoming reactive and brittle, losing the trust of their communities and missing opportunities for meaningful, sustainable innovation.

The real question for universities is not whether they will govern AI, but how. Framing AI governance as a choice between two opposing paths, one of unchecked innovation and the other of stagnation, creates a false dichotomy. Universities don't have to sacrifice progress for protections; they can actively pursue both. The goal isn't to avoid AI, but to integrate it in ways that uphold these institutions' core mission: advancing knowledge, equity, and the public good.

Through principled, proactive governance, universities can navigate the complexities of AI not as passive bystanders, but as leaders shaping responsible innovation. This approach allows them to foster academic freedom, enhance civic engagement, and fortify democratic values, ensuring that AI serves the long-term interests of education and society.