Babe Liberman

Project Director, EdTech and Emerging Technologies team, Digital Promise

How Can We Maintain Our Intellectual Autonomy in an AI-saturated World?

AI literacy doesn’t just involve understanding the technology itself; it requires understanding ourselves in relationship with technology. In response to Deji Olukotun’s short story “Mothering the Bay,” education technology expert Babe Liberman explores how we can teach students to notice when AI empowers their decisionmaking and when it obscures it. It’s crucial, she argues, to notice when we’re truly thinking versus when we’re being led.

Future Tense Fiction is partnering with New America’s Technology and Democracy programs on “Digital Futures Reimagined,” a series of policy dinners around the country. “Mothering the Bay” was partly inspired and informed by “Democracy and Digital Empowerment in the Age of Deepfakes,” a dinner attended by the author and co-hosted by New America and the University of California, Berkeley’s College of Computing, Data Science, and Society. The dinner was held on the UC Berkeley campus in October 2024.

This story was in . Subscribe to the Issues to make sure you never miss a story.

Does my meeting agenda include all necessary discussion points? How can I make a plan to start meditating, considering that I have zero ability to focus? Can I trust myself to wing a spicy vegetarian stew recipe without poisoning anyone? These are the types of questions I’m grappling with as I consider when and how to leverage generative artificial intelligence in my own life.

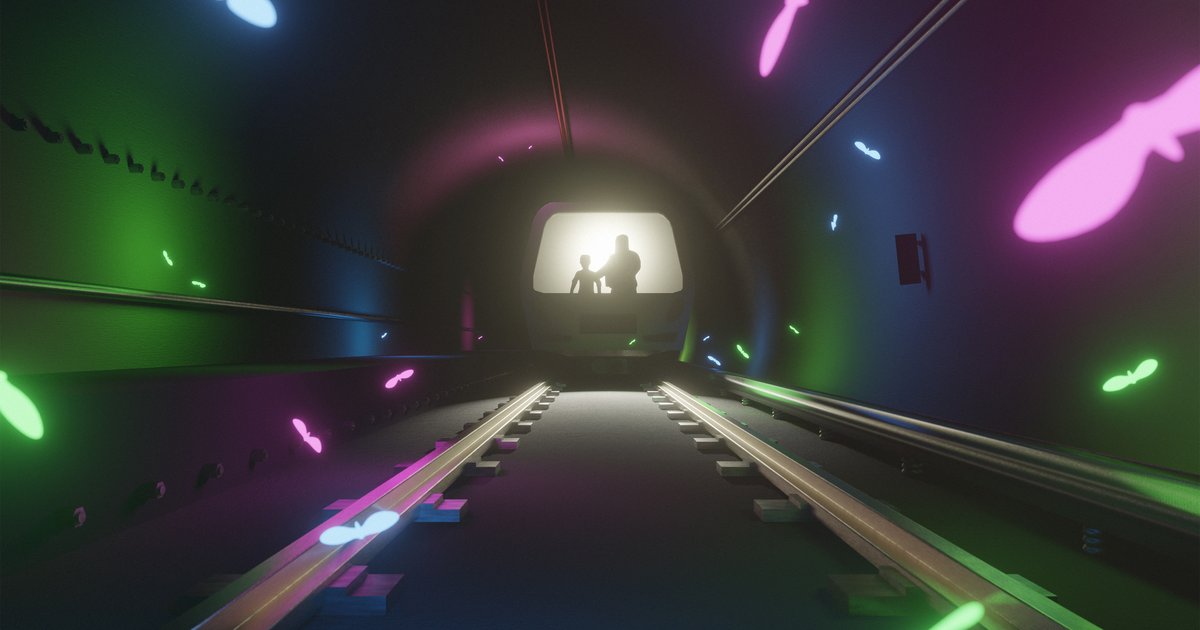

As an education researcher new to AI tools myself, I feel extra pressure to get the “when and how” questions right, both on an individual level and, more broadly, in the academic settings where those tools have so rapidly propagated. To do so, we need to develop and teach a skill I’ll call “intellectual proprioception”: the ability to sense when we’re thinking independently versus when we’re leaning on AI. We usually talk about proprioception in relation to the physical world, where it represents our ability to understand where our body parts are and how they move. Just as a skilled dancer develops an innate awareness of their body’s position in space, I hope to practice noticing my mind’s position in relation to technology. But maintaining this awareness isn’t easy when technological shortcuts are as omnipresent as the buzzing moths on the BART in Deji Olukotun’s Future Tense Fiction story “Mothering the Bay.”

Olukotun’s tale imagines a gripping and plausible near future where humankind interacts with personalized AI agents through translucent visors and AI-enabled “moths” that filter reality, curating ads and experiences designed to tap into users’ deepest fears, desires, and insecurities. People in this future are physically attached to these agents, even more profoundly than we already are to our phones, to the point where many can no longer rely on themselves to understand what’s happening in the world directly around them.

Through the story, Olukotun explores a question that feels almost as urgent for us readers as it is for the story’s characters, stuck together in a Bay Area Rapid Transit car in the aftermath of an emergency: How can we maintain our intellectual autonomy in an AI-saturated world?

In “Mothering the Bay,” Luisa, a nursing assistant who works in cosmetic surgery, brings a nuanced perspective to technology as she parents her newly adopted daughter, Simone. Without rejecting technology altogether, Luisa distrusts pervasive technological enhancements that brazenly claim to improve lives. She sees cosmetic surgery not just as enhancement but as a negotiation of the tension between transformation and authenticity. At work, her strength lies in guiding patients through recovery: the careful, personalized integration of change.

She applies this same wisdom to parenting Simone, whom the foster care system had previously quarantined from AI “as if it were a virus.” For Luisa, true protection isn’t avoidance but education: teaching Simone to understand how AI shapes perception and decisionmaking while developing her capacity to think independently. Simone, Luisa thinks, “should have been taught to think for herself to understand how agents worked,” including “the data sets, the policies, the algorithms.” At the very least, “she should have been taught to understand what kind of agent could enrich her life.” Like postsurgery recovery, learning to engage with AI requires careful integration and constant awareness.

In the real world, the rapid onset of generative AI has led to a proliferation of AI literacy frameworks meant to guide learners across a range of settings and life stages. Many of these frameworks emphasize understanding AI’s capabilities, recognizing its applications, and thinking critically about its use. There’s energy around implementing these frameworks more explicitly in schools, universities, and workplaces. But they’re also relevant on an individual level: How do we each embody these skills in practice, as we go about our most mundane daily tasks? Developing AI literacy is an active, ongoing process. Like Luisa helping Simone navigate AI responsibly, we need guardrails and practice to integrate these tools while maintaining our intellectual autonomy.

Students today face a patchwork of inconsistent policies around AI use in education, with some educators embracing these tools while others ban them outright. At the same time, most students’ knowledge of AI comes not from structured learning environments but from informal channels: family discussions, social media influencers, extracurricular activities, or playing around with apps and tools on their own. This DIY approach is inevitable, since many of the adults around them (teachers, administrators, and policymakers) are themselves struggling to understand these technologies.

When I’ve spoken to middle and high school students about how they use (or wish they could use) AI, they’ve told me they aren’t simply seeking permission to use the technology; they’re actively looking for guidance on how to develop a balanced relationship with it. Adults often suspect kids want to surrender themselves to technological convenience, but that assumption is misguided. Most kids want to harness AI’s efficiency and capabilities while still developing their authentic voices and critical thinking skills. Maybe a student has an idea for an exciting new app but needs help bringing it to life through code, or maybe they have imagined a short story but would benefit from prompting to further develop their characters. Young people deserve a more coherent educational approach that helps them make thoughtful decisions about when to leverage AI and when to rely on their uniquely human capabilities.

Providing this structure doesn’t have to be scary. It can begin with simple reflection exercises that invite students to document their thought processes during AI-assisted assignments, distinguishing between their original ideas and AI-influenced work. For instance, in a history lesson, students might first independently answer questions like “How did the New Deal reshape the role of government in American society?” before comparing their responses to AI-generated answers. By experimenting with different prompts and analyzing what makes answers more complete or nuanced, students can learn to use AI strategically while developing critical thinking skills. Teachers can scaffold this process by guiding students through key questions such as “Am I using AI tools to enhance my learning?” and “Am I actively reflecting on my use of AI tools?” Much like showing their work in math class, this process can help students reflect on their knowledge, identify gaps, and make use of the resources available to them.

Research shows that “productive struggle” strengthens independent thinking; doing challenging brain work, especially work that feels uncomfortable in the moment, is where lasting learning happens. By supporting learners as they alternate between AI-assisted and independent work while documenting their workflow, we can help them develop a greater awareness of their thinking patterns. Like dancers who practice isolated movements to build body awareness, students can strengthen their cognitive processes by engaging in structured problem-solving both with and without AI assistance. In this way, cultivating AI literacy is not simply about understanding the technology itself; it’s about understanding ourselves in relationship with the technology.

In writing this essay, for example, I engaged with an AI chatbot, Claude, as a reflective exercise, a way to experience firsthand the very tension I’m exploring. As I collaborated with Claude to brainstorm examples or refine a paragraph, I noticed myself becoming hyperaware of my thinking process. Each interaction became an opportunity to consider: When am I using AI as a thought partner to develop and clarify my own ideas? And when am I letting it do too much of the heavy lifting?

My productive struggle with this balance mirrors what we’re asking of our students. Experiences with AI become opportunities to practice intellectual proprioception, to notice when we’re truly thinking versus when we’re being led. The consequences of getting this wrong are significant: There are already indications that excessive reliance on AI can diminish critical thinking abilities, much as my sense of direction went out the window once I started depending on GPS for daily driving. More subtly, without developing strong intellectual proprioception, we risk losing touch with our own decisionmaking processes.

As AI tools become increasingly sophisticated and ubiquitous, we’re all navigating questions about authenticity and autonomy. Like Olukotun’s BART riders who panic when their AI agents go offline, we risk becoming overly dependent on artificial guidance, unable to trust our unaugmented judgment. We want to leverage AI’s capabilities while preserving our unique voices.

Success won’t come through a total “quarantine” from AI, and it won’t come from willy-nilly integration. Instead, we can practice becoming aware of how AI influences our thinking and whether that engagement authentically serves us. As educators and mentors, our role extends beyond teaching technical AI literacy. We must advocate for ethical AI use that keeps human judgment central, while nurturing the intellectual autonomy that will enable students, and all of us, to use AI responsibly and effectively.