Getting to the Source of Infodemics: It’s the Business Model

Abstract

Ranking Digital Rights (RDR) content is no longer updated on New America’s website. RDR is now located at the.

Acknowledgments

This report was written by Ranking Digital Rights (RDR) Senior Policy and Partnerships Manager Nathalie Maréchal, Founding Director Rebecca MacKinnon, and Director Jessica Dheere.

Research Director Amy Brouillette, Company Engagement Lead Jan Rydzak, Communications Manager Kate Krauss, Communications Associate Hailey Choi, and Editorial Consultant Nonna Gorilovskaya also contributed to this report.

We wish to thank all the reviewers who provided their feedback on the report. Their inclusion here does not mean that they support or agree with any of the report’s statements, proposals, recommendations or conclusions:

- Dunstan Allison-Hope, Vice-President, BSR

- Sharon Bradford Franklin, Policy Director, Open Technology Institute

- Melissa Brown, Partner, Daobridge Capital Limited & Advisor, Ranking Digital Rights

- Bennett Freeman, Co-Founder, Global Network Initiative & Board Secretary, 2010-2020

- David Kaye, University of California Irvine & UN Special Rapporteur on the promotion and protection of the right to freedom of opinion and expression

- Gaurav Laroia, Senior Policy Counsel, Free Press

- Eric Null, U.S. Policy Manager and Global Policy Counsel, Access Now

- Courtney Radsch, Advocacy Director, Committee to Protect Journalists

- Spandana Singh, Policy Analyst, Open Technology Institute

- Amie Stepanovich, Executive Director, Silicon Flatirons Center for Law, Technology, and Entrepreneurship at the University of Colorado Law School

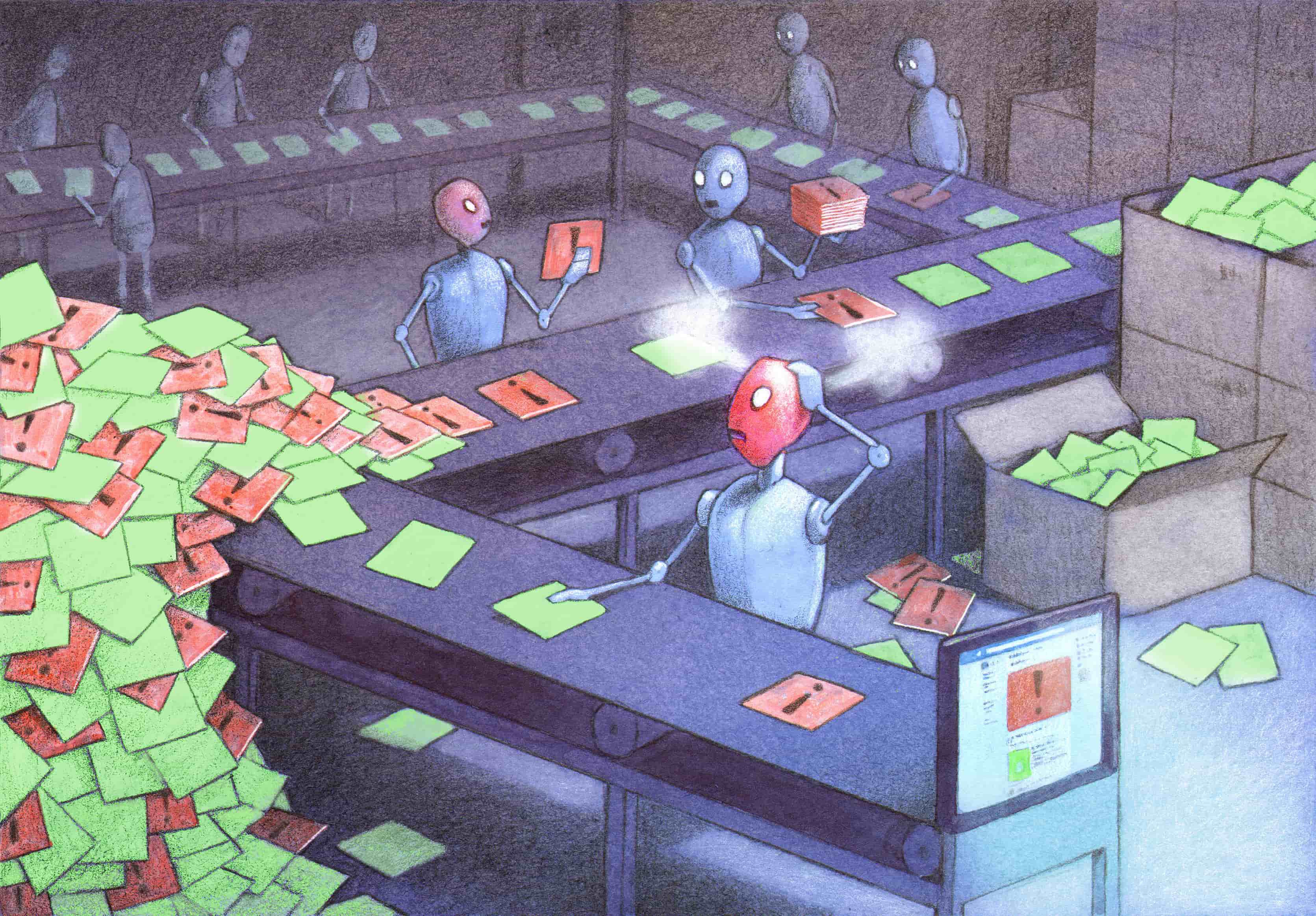

Original art by Paweł Kuczyński.

We would also like to thank Craig Newmark Philanthropies for making this report possible.

To contact us about this report, please email info [AT] rankingdigitalrights.org or comms [AT] rankingdigitalrights.org

For a full list of current and former project funders and partners, please see: .

For more about RDRтАЩs vision, impact, and strategy see:

For more about New America, please visit .

For more about the Open Technology Institute, please visit www.newamerica.org/oti.

This work is licensed under the Creative Commons Attribution 4.0 International License. To view a copy of this license, please visit .

Executive Summary

Before the COVID-19 pandemic hit, democracies were already struggling to address disinformation, hate, extremism, and other dangerous online content while also protecting free speech and privacy. Now, Facebook, Twitter, and Google’s YouTube are awash with disinformation and misinformation that can be deadly. Despite the companies’ commitments to take unprecedented steps to control the problem, they are failing.

This report argues that Facebook, Twitter, and Google’s targeted advertising business models, and the opaque algorithmic systems that support them, are the root cause of their failure to staunch the flow of misinformation.

The second in a two-part series aimed at U.S. policymakers and anybody concerned with the question of how internet platforms should be regulated, this report reinforces the need to adopt a human rights framework for platform accountability. We propose concrete areas where Congress needs to act to mitigate the harms of misinformation and other dangerous speech without compromising free expression and privacy: transparency and accountability for online advertising, starting with political ads; federal privacy law; and corporate governance reform.

First, we point to concerning examples from the pandemic that highlight the connection between targeted advertising and misinformation about the coronavirus and its purported remedies. We explain how international human rights standards provide a framework for holding social media platforms accountable that complements existing U.S. law and can help lawmakers determine how best to regulate these companies without curtailing usersтАЩ rights.

Drawing on our five years of research for the Ranking Digital Rights Corporate Accountability Index, we then point to concrete ways that the three social media giants have failed to respect users’ human rights as they deploy targeted advertising business models and algorithmic systems. We describe how the absence of data protection rules enables the unrestricted use of algorithms to make assumptions about users that determine what content they see and what advertising is targeted to them. We note that such targeting can result in discriminatory practices as well as the amplification of misinformation and harmful speech.

Next, we explain why expanding the transparency requirements that currently apply to print and broadcast political ads to all types of online advertising is a prerequisite for oversight and accountability, and how a robust federal privacy law can help fight misinformation and dangerous speech, while acknowledging enforcement challenges.

The final section makes a case for corporate governance reform. We explain how trends in environmental, social, and governance (ESG) investing are prompting companies to adopt due diligence and impact assessment standards, and can strengthen corporate governance over the long term. In light of investors’ growing interest in holding companies accountable for their social impact, we describe the role Congress can play in mandating corporate disclosure of information pertaining to the social and human rights impact of targeted advertising and algorithmic systems. We also recommend actions that could be taken by the U.S. Securities and Exchange Commission (SEC) to empower shareholders. We again highlight enforcement challenges, and we note strides made by European companies which, if American companies ignore them and fail to follow suit, may portend a loss of global market share for U.S. firms.

To conclude, we offer some thoughts about how civil society stakeholders, including researchers, journalists, and advocacy and grassroots organizations, are critical to addressing accountability gaps, especially in the absence of effective regulation and oversight. We also explain why companies must proactively engage with civil society as a part of their efforts to mitigate the negative social impacts of their business models.

Key Recommendations for U.S. Policymakers

Enact federal privacy law that protects people from the harmful impact of targeted advertising. Specifically, this law should:

- Ensure effective enforcement by designating an existing federal agency, or creating a new one, to enforce privacy and transparency requirements applicable to digital platforms.

- Include strong data-minimization and purpose-limitation provisions: Users should not be able to opt in to discriminatory advertising or to the collection of data that would enable it.

- Give users very clear control over collection and sharing of their information.

- Restrict how companies are able to target users. The law should prohibit the use of third-party data to target specific individuals, as well as discriminatory advertising that violates users’ civil rights.

Require that platforms maintain a public ad database to ensure compliance with all privacy and civil rights laws when engaging in ad targeting: Pass the Honest Ads Act, expand the public ad database to include all advertisements, and allow regulators and researchers to audit it.

Require relevant disclosure and due diligence around the social and human rights impact of targeted advertising and algorithmic systems.

- Mandate disclosure of targeted advertising revenue along with disclosure of environmental, social, and governance (ESG) information, including information relevant to the social impact of targeted advertising and algorithmic systems.

- Require due diligence: Companies should be required to conduct assessments of their social impact and risks, including human rights risks associated with targeted advertising and algorithmic systems.

Strengthen corporate governance and oversight. Rules issued by the U.S. Securities and Exchange Commission (SEC) should empower shareholders to hold company leadership accountable for social impact.

- Phase out dual-class shares: Companies should not be able to maintain a dual-class share structure that effectively empowers the CEO to vote down shareholder resolutions in perpetuity.

- Do not make it harder to file shareholder resolutions: The SEC should scrap proposed rule changes that will make it more difficult for shareholders to file proposals.

Introduction

As the pandemic death toll continued to rise in the spring of 2020, myriad myths and conspiracy theories about COVID-19, including some dangerous falsehoods, circulated on Twitter and other social media: that mobile 5G technology helps spread the virus; that zinc, colloidal silver, miracle mineral solution, and garlic can cure it; that hydroxychloroquine has a 100 percent success rate in treating it; that ingesting bleach may help; and that vaccines are being developed with microchip tracking technology funded by Bill Gates.1

Misinformation can be both polarizing and deadly. It is disturbing that social media companies failed to remove posts promoting the falsehoods described above, despite the companies’ commitments to remove COVID-19 misinformation. With so little known about the novel coronavirus and how to stop its spread, conspiracy theorists, hucksters, political opportunists, and would-be authoritarians worldwide have adopted tactics honed in recent political campaigns to exploit social media’s algorithmic toolset, using platforms’ targeted advertising systems to amplify unfounded theories about how the virus spreads and how to cure it.

Policymakers have proposed holding companies liable for speech rather than for their targeted advertising business models, the real source of the problem.

Social media platforms are part of a broader information ecosystem that also includes news organizations and other influential actors, including political leaders and celebrities. The growing impact of social media platforms on our information environment is fueled by advertising in general, and targeted advertising in particular. Fifty-five percent of American adults say they get their news from social media.2 That includes information about political candidates and policy issues from ads on Facebook and Google. In 2018, those two companies combined received almost 60 percent of all digital advertising dollars in the United States.3 They are projected to earn 77 percent of the more than $1.34 billion that is expected to be spent on U.S. digital political advertising in the 2019–2020 election cycle.4 Before deciding to ban political advertising last fall, Twitter earned $3 million from political ads during the 2018 midterm elections.5 Social media platforms draw attention and advertising dollars away from news outlets whose journalism provides critical facts without which citizens cannot make informed choices about how to live their lives, or how to vote.

But digital platforms’ increasing share of news and advertising is only the beginning of the story. Since the 2016 U.S. presidential election, companies like Facebook, Google, and Twitter have struggled to curtail the circulation of both organic and paid false information on their platforms. The content moderation and takedown systems that social media companies have put in place to enforce their content rules are failing society as well as individual users: harmful misinformation continues to flow, while content moderation rules are enforced in inconsistent and often arbitrary ways, resulting in the unintended deletion of content produced by activists and journalists and thereby restricting freedom of expression.6 Company systems intended to block coronavirus misinformation from ad platforms have proven ineffective.

In parallel, policymakers have begun to address the problem of dangerous online content, particularly by formulating proposals to change the extent to which companies are legally liable for user-generated speech on their platforms.7 But such proposals do not address what makes these platforms different, and therefore potentially dangerous: their targeted advertising business models and the algorithmic systems that drive them.

Targeted advertising business models require extensive data collection and algorithmic content shaping in order to maximize advertising revenue. The algorithmic systems behind these models are designed to prioritize sensational, eye-catching, and controversial content, so they disproportionately amplify organic and paid speech that can corrupt the quality of information we need to promote not just healthy democracies and fair elections but also, as we’ve seen in the COVID-19 pandemic, public health. At the same time, targeted advertising enables paying customers to direct different types of content, including ads, at specific audiences based on people’s demographics and declared interests, as well as on algorithmically inferred assumptions about other affinities and traits that may not even be correct.

Against the backdrop of both a global pandemic and a U.S. presidential election campaign, it is now incontrovertible that strengthening platform accountability, and thus the integrity and resilience of our information ecosystem, is critical to the future of democracy. This report, which offers concrete U.S. policy recommendations, is the second in a two-part series examining how targeted advertising business models can drive the spread of misinformation8 and dangerous speech,9 and what U.S. lawmakers and regulators can do to hold companies accountable for these systems without infringing on human rights. We make the case for why and how policymakers and advocates should prioritize holding platforms accountable for the mechanisms, policies, and practices that enable the amplification and targeting of user-generated misinformation and dangerous speech (without which the speech would have less reach and, thus, fewer negative effects), instead of holding the companies liable for the speech itself.

Some policymakers are focused on breaking up these huge companies as the key to limiting the social harms they can cause or contribute to. Antitrust is not a focus of this report, though encouraging competition, ensuring interoperability, and potentially breaking up monopolies are important parts of the policy toolkit that could alleviate some of the problems exacerbated by the major platforms’ enormous scale. But these regulatory interventions alone will not be sufficient. Checking the power of Big Tech will also require a two-pronged approach, outside antitrust and competition law more broadly, that aims to mitigate the harms posed by the ad- and attention-driven business model underlying platform revenue. That approach involves: 1) a comprehensive and enforceable federal data privacy regulation, and 2) holding companies directly accountable by shining sunlight on the harms created by the business model and promoting policies that increase the fairness, accountability, and overall transparency of those practices.

Having outlined in part one the challenges that targeted advertising and algorithmic systems present to both human rights and civil liberties, this second report focuses on what social media companies and, in particular, policymakers can do to address them. We borrow a metaphor from the oil industry to clarify our contention: we cannot clean up downstream pollutants like misinformation or dangerous speech without tackling the upstream processes (targeted advertising and algorithmic systems) that make this speech so damaging to our information environment in the first place. Focusing on the downstream effects of the infodemic,10 as has been the approach thus far, does nothing to address its upstream structural causes.

In the first report, It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge, we examined two overarching types of algorithms: 1) content-shaping algorithms that determine what individual users see when they use a company’s online services (including those that target ads), and 2) content moderation algorithms that help human reviewers identify (and sometimes remove) content that violates the company’s rules.11 We then gave examples of how these technologies are used both to propagate and to prohibit different forms of online speech (including targeted ads), and showed how they can cause or catalyze social harm, particularly in the context of the 2020 U.S. election. We described how users are profiled, segmented, and targeted in ways that allow advertisers to continually reinforce a specific message to a particular type of audience. We illustrated how, when this capability is combined with mis- or disinformation deployed for political gain, such as incorrect voting information or outright lies about a candidate, the results can be disastrous for democracy.

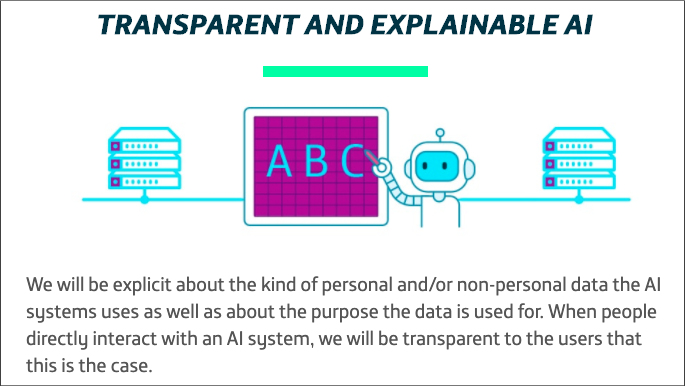

We also highlighted what we don’t know about these systems. We called on companies to be much more transparent about how their content-shaping algorithms and content moderation systems work, and to give users more control over how content is prioritized and promoted to them, or targeted at them. We explained why a regulatory focus on holding companies liable for content shared by users will not, on its own, succeed in stemming the spread of problematic content, and will likely result in violations of users’ free expression rights. We agreed that content moderation is necessary, but far from sufficient, and we asserted that the first step in addressing the problem is to require much greater transparency and accountability around a business model that relies on algorithmic curation and exploitation of user data.

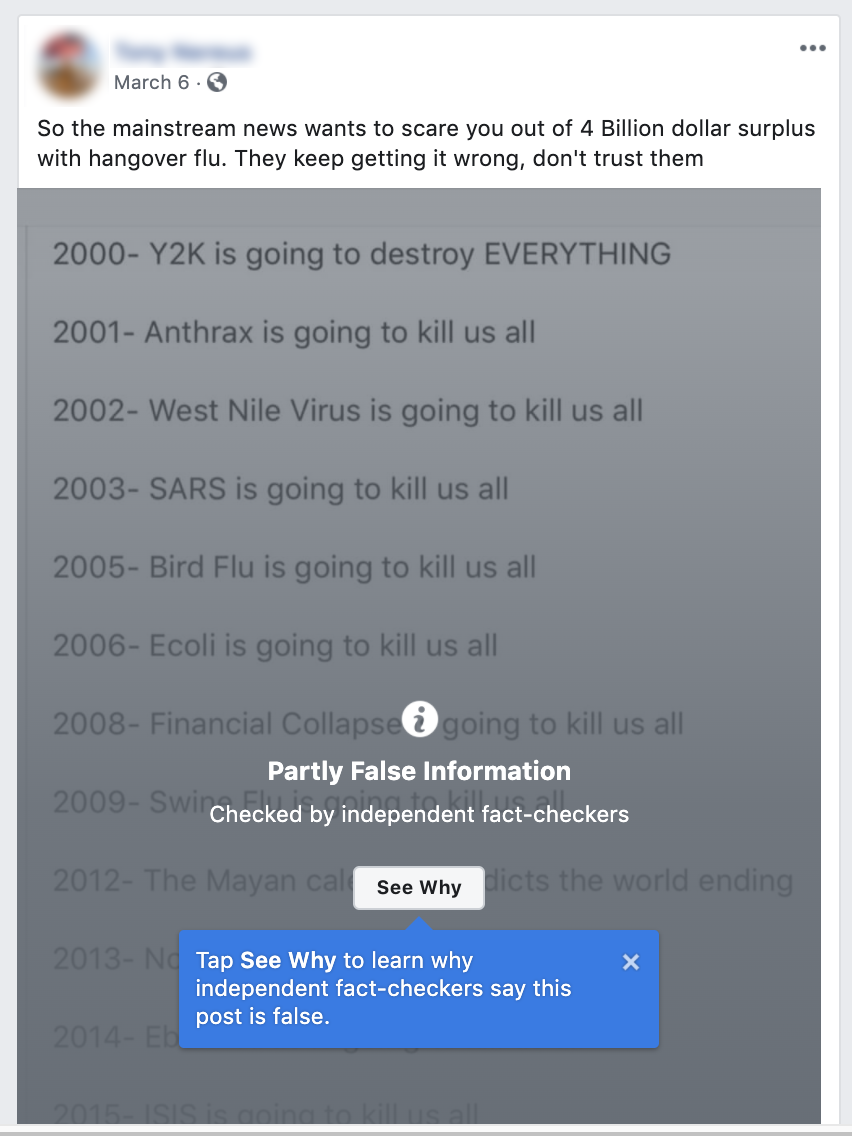

Identifying and removing misinformation and disinformation, and otherwise working to mitigate their impact by flagging them as false, is an essential short-term measure. But as we pointed out in the previous report, it is important not to force companies to censor higher volumes of content across a broad range of topics, languages, and cultural contexts when their moderation systems lack the accuracy, consistency, and nuance to avoid violating users’ right to freedom of expression and information. Yet infodemics will keep plaguing us, and may get worse, unless Congress acts and also empowers other stakeholders, including institutional investors, to hold companies accountable.

Building on five years of research for the Ranking Digital Rights Corporate Accountability Index (RDR Index), which evaluates how transparent companies are about their policies and practices that affect online freedom of expression and privacy, this report reinforces the case for adopting a human rights framework for platform accountability and proposes two concrete areas where U.S. Congress needs to act to mitigate the harms of misinformation and other dangerous speech without compromising free expression: federal privacy law and corporate governance reform.

In August 2019, the Business Roundtable published a statement signed by 181 CEOs of major U.S. corporations, announcing their commitment to the idea that the purpose of business is no longer only to serve shareholders, but also to “create value for all our stakeholders,” including employees, customers, and communities.12 It is no longer debatable whether businesses in any sector should be held accountable for their social impact.

The proliferation of misinformation during the COVID-19 pandemic has shown just how high the human cost, and ultimately the economic cost, can be when companies prioritize shareholder returns over all else, and when the government fails to hold companies accountable to the public interest.13 Society is now paying the price for failing to require that companies make credible efforts to understand and track their social impact, and to take responsibility for preventing and mitigating social harms that their business may cause or contribute to. It is time to adjust course and design a resilient and equitable information environment that protects human rights and civil liberties, especially in times of crisis and change. Doing so will require increased transparency; responsive, evidence-based regulation; and persistent stakeholder engagement.

Citations

- NewsGuard. 2020. “Tracking Twitter’s COVID-19 Misinformation ‘Super-Spreaders.’” (May 15, 2020).

- Khalid, Amrita. 2019. “Americans Can’t Stop Relying on Social Media for Their News.” Quartz. (May 15, 2020).

- Sterling, Greg. 2019. “Almost 70% of Digital Ad Spending Going to Google, Facebook, Amazon, Says Analyst Firm.” Marketing Land. (May 17, 2020).

- Gibson, Kate. 2020. “Spending on U.S. Digital Political Ads to Top $1 Billion for First Time.” CBS News. (May 15, 2020).

- Conger, Kate. 2019. “Twitter Will Ban All Political Ads, C.E.O. Jack Dorsey Says.” The New York Times. (May 15, 2020).

- Gary, Jeff, and Ashkan Soltani. 2019. “First Things First: Online Advertising Practices and Their Effects on Platform Speech.” Knight First Amendment Institute. (February 13, 2020).

- Maréchal, Nathalie, and Ellery Roberts Biddle. 2020. It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge – A Report from Ranking Digital Rights. Washington, D.C.: New America. (May 7, 2020).

- Wardle, Claire, and Hossein Derakhshan. 2017. Information Disorder: Toward an Interdisciplinary Framework for Research and Policy Making. Strasbourg: Council of Europe. Council of Europe report.

- Dangerous Speech Project. 2016. “What Is Dangerous Speech?” Dangerous Speech Project. (May 15, 2020).

- The World Health Organization (WHO) defines an infodemic as “an over-abundance of information—some accurate and some not—that makes it hard for people to find trustworthy sources and reliable guidance when they need it.” See World Health Organization. 2020. Novel Coronavirus (2019-nCoV) Situation Report – 13. Geneva: World Health Organization.

- Maréchal, Nathalie, and Ellery Roberts Biddle. 2020. It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge – A Report from Ranking Digital Rights. Washington, D.C.: New America. (May 7, 2020).

- Business Roundtable. 2019. “Business Roundtable Redefines the Purpose of a Corporation to Promote ‘An Economy That Serves All Americans.’” (May 15, 2020).

- Goodman, Peter S. 2020. “Big Business Pledged Gentler Capitalism. It’s Not Happening in a Pandemic.” The New York Times. (May 15, 2020).

Targeted Advertising and COVID-19 Misinformation: A Toxic Combination

In March, Facebook, Twitter, Google, YouTube, and others committed to join forces and work closely with networks of fact-checkers and researchers to combat the spread of pandemic-related misinformation.1 In April, as the U.S. economy slowed to a crawl and many state and local governments ordered people to stay home, Facebook took the further step of removing some posts that openly called on people to violate government orders, and committed to displaying warnings to people who had interacted with misinformation about COVID-19.2

Yet the torrent of misinformation continued, despite the best efforts of a growing number of organizations dedicated to external monitoring, flagging, and fact-checking. The platforms’ content moderation systems could not keep up, and also made mistakes.3 The University of Oxford’s Reuters Institute, examining a sample of 225 pieces of misinformation posted between January and the end of March, found that while more than half of the content was either deleted or labeled with warnings, significant amounts of such content remained in circulation.4 Fifty-nine percent of posts flagged as false by fact-checkers remained on Twitter without any warning labels. Among the tweets that did get deleted was one by Rudy Giuliani, President Trump’s personal attorney and former mayor of New York City, quoting a well-known conservative activist making the false claim that “hydroxychloroquine has been shown to have a 100% effective rate treating COVID-19.”5

YouTube, for its part, left up 27 percent of content flagged as false, while 24 percent of posts containing content that fact-checkers had identified as false remained on Facebook without warnings of any kind.6 But what is the actual prevalence of pandemic-related misinformation across the platforms? Facebook disclosed that in March it had displayed fact-checking labels on 40 million dangerous posts, based on 4,000 articles that its third-party reviewers had rated as false.7 If the Oxford sample is at all representative, a meaningful share of comparable false content went unlabeled, which suggests that millions of pieces of COVID-19 misinformation remained in circulation.

Also by March, fact-checking organizations reported being overwhelmed as the volume of potential misinformation related to the pandemic exploded.8 And when governments around the world started issuing mandatory stay-at-home orders to slow contagion, the platforms announced that because their human content moderators could no longer work in the office, and most could not work remotely due to privacy concerns and a lack of home internet access, they would need to rely more heavily on content moderation algorithms to determine what should be deleted. They warned that this would likely result in more mistakes in determining what content does or does not violate platform rules.9

The platforms have also failed to stem the flow of paid misinformation and deliberate disinformation. In mid-March, Consumer Reports journalist Kaveh Waddell decided to test the effectiveness of Facebook’s commitment to police its ad platform more closely. He created a page for a fake organization, then submitted several advertisements to run on the platform, calling the coronavirus a hoax and urging people to get out of the house and mingle:

“Don’t give in to the propaganda—just live your life like you always have,” stated one of the ads. Another instructed people to “stay healthy with SMALL daily doses” of bleach. All were approved by Facebook for publication. Waddell responsibly removed them before they went live.10

Facebook discloses that it reviews all ads for compliance with its policies before they are published. Its ad policy states: “We’ll check your ad’s images, text, targeting, and positioning, in addition to the content on your ad’s landing page.”11 But the company discloses no further details about what processes or technologies are used to review these ads, including whether and how automation is used or whether there is any human involvement. Whatever those processes might be, Waddell’s experiment exposed just how ineffective Facebook’s ad content policing mechanisms actually are in life-and-death crisis situations, such as a global pandemic, when people are desperate to understand what is happening and face an overwhelming avalanche of often contradictory information.

To try to mitigate the devastating effects of targeted misinformation, social media platforms are engaged in an endless circular fight, in which they must detect and delete content whose social, political, and even medical impact is magnified by the opaque and unaccountable mechanisms of their own business models. Content moderation is a downstream effort by platforms to clean up the mess caused upstream by their own systems designed for automated amplification and audience targeting. These downstream efforts, while necessary, do not fundamentally change the upstream systems that exacerbate the problem, and do not give the public greater transparency about exactly how targeting systems shape what people can share, see, and know.

Take, for example, Facebook’s mechanisms for building profiles of users and targeting them with specific content. In April, just a week after Facebook CEO Mark Zuckerberg pledged to fight misinformation about COVID-19, Aaron Sankin, a journalist with The Markup, noticed in his Facebook news feed an advertisement for “a hat that would supposedly protect my head from cellphone radiation.”12

One of the many conspiracy theories raging across social media links the spread of the coronavirus to signals emanating from 5G wireless cell towers.13 After clicking the “Why am I seeing this ad?” tab on the ad, Sankin learned that the ad was targeting people interested in “pseudoscience.” We do not know how Facebook determined that Sankin was interested in pseudoscience, what types of advertisers used the audience category, or how often they did so. We do know from information provided to advertisers that the pseudoscience audience category contained 78 million users. With a few more clicks, Sankin was able to use that same category to “boost” Markup posts on Instagram (paying to increase how often the posts were shown to Instagram users in the target audience category). The fact that he was able to do so confirmed that Facebook had not disabled the category despite its clear connection to misinformation. The category was removed only after Sankin brought it to the company’s attention.14

This was not the first time journalists had exposed dangerous audience categories, prompting a company apology and removal. In 2017, ProPublica found that racist audience categories were being offered to advertisers.15 Like those disturbing categories, the pseudoscience audience category was likely generated by an algorithmic system that used a host of data points, like users’ Facebook and Instagram posts, comments, and interactions with other posts, as well as off-platform data about their personal characteristics and behaviors. On that basis, Facebook determined that these 78 million people were interested in pseudoscience and enabled advertisers to reach them. What’s more, in many cases the automated ad targeting systems also determined which paid content was most likely to appeal to pseudoscience enthusiasts. This appears to have been the case for the anti-cell phone radiation hat: the CEO of the company behind the ad told The Markup that his team hadn’t chosen the pseudoscience category.16

Sankin’s experiment suggests that, in addition to classifying individual users as “interested in pseudoscience,” Facebook’s algorithmic systems are also able to determine that specific ads contain pseudoscience. Rather than flagging such ads for further scrutiny by a human reviewer, the company’s algorithmic systems make it easier for them to reach users who are vulnerable to their messages. A hat that purports to block cell phone radiation is unlikely to cause much harm, but seeing an ad for one reinforces the worldview that creates demand for such products. The same ad-targeting technology also spreads dangerous hoaxes, like the idea that consuming bleach protects against the novel coronavirus or that childhood immunizations cause autism, as well as posts that incite hate or violence.

The problem with targeted advertising is that you can’t put the cat back in the bag. A clear example can be seen in how misinformation radiated from Facebook pages protesting the pandemic lockdown meant to slow the spread of the coronavirus.

In mid-April, protesters at state capitols across the country wielded signs with slogans like “#endtheshutdown” and “give me liberty or give me COVID-19!” calling for governors to lift their stay-at-home orders. The protesters were driven by different motives, from economic anxiety at a time of unprecedented unemployment to long-standing fears about government overreach.

Anti-quarantine protest participants may have seen themselves as part of a spontaneous grassroots movement. But the coordinated marketing and messaging around the Facebook pages used to organize the protests were supported by organizations with long histories and deep pockets. Dogged reporting by several news organizations made clear that these protests emerged and expanded thanks in no small part to the support of politically influential, well-funded backers, including the National Rifle Association.17

According to research by First Draft, an organization that tracks the spread and analyzes the causes of disinformation, 100 state-specific Facebook pages were created in April to protest state governments’ stay-at-home orders. NBC reported that as of April 20, these protest pages had been used to organize at least 49 different events. The groups, many of which had names like “Wisconsinites Against Excessive Quarantine” and “Reopen Minnesota” that were repeated across Facebook with different state names, had attracted more than 900,000 members by April 20.18 These groups and their members were also reported to be active spreaders of coronavirus misinformation, much of it coordinated. Researchers at Carnegie Mellon University’s CyLab Security and Privacy Institute tracked nearly identical claims posted across multiple platforms, from the Facebook groups to Twitter and Reddit.19

We cannot rely on a game of whack-a-mole to protect public health, and ultimately the health of our democracy.

Frustratingly, it is impossible to document with any precision the scale at which COVID-19 misinformation circulating on social media platforms has been boosted by targeted advertising tools, as the companies keep that information to themselves. In general, the platforms do not disclose nearly enough information about whether the content users see was boosted, by whom, and whether users are being targeted by tools like Facebook’s “custom audiences,” which enable advertisers to target specific individuals.

But we do know that a large volume of that misinformation is being shared by followers and administrators of pages that can easily afford to pay for targeted advertising, and that many of these pages are run by people with plenty of experience in using such tools.

Disinformation, misinformation, hate speech, and scams of all sorts are powerful precisely because digital platforms’ automated content optimization systems aim them at just the people who are most vulnerable to these messages, while hiding them from other users who would otherwise be in a position to flag them and provide corrective counter-speech. False information about COVID-19, and other dangerous content, would be much less effective if it were not algorithmically targeted and amplified. It is clear that even if social media companies’ content rules were perfect (which they are not), enforcing them fairly and accurately at a global scale would not be possible.

Banning dangerous content itself is both contrary to free expression standards and impossible to achieve at a global scale. But we can stymie its reach by denying targeting and optimization algorithms their power, through fundamental reforms grounded in human rights principles.

Citations

- Douek, Evelyn. 2020. “COVID-19 and Social Media Content Moderation.” Lawfare. (May 15, 2020).

- Romm, Tony. 2020. “Facebook Will Alert People Who Have Interacted with Coronavirus ‘Misinformation.’” Washington Post. (May 15, 2020).

- Heilweil, Rebecca. 2020. “Facebook Is Flagging Some Coronavirus News Posts as Spam.” Vox. (May 15, 2020).

- Brennen, J. Scott, Felix Simon, Philip N. Howard, and Rasmus Kleis Nielsen. 2020. Types, Sources, and Claims of COVID-19 Misinformation. Oxford: University of Oxford. (May 15, 2020).

- Wong, Queenie. 2020. “Twitter Leaves up Trump’s ‘liberate’ Tweets about States with Lockdown Protests.” CNET. (May 15, 2020).

- Brennen, J. Scott, Felix Simon, Philip N. Howard, and Rasmus Kleis Nielsen. 2020. Types, Sources, and Claims of COVID-19 Misinformation. Oxford: University of Oxford. (May 15, 2020).

- Rosen, Guy. 2020. “An Update on Our Work to Keep People Informed and Limit Misinformation About COVID-19.” Facebook Newsroom. (May 7, 2020); Romm, Tony. 2020. “Facebook Will Alert People Who Have Interacted with Coronavirus ‘Misinformation.’” Washington Post. (May 15, 2020).

- Leskin, Paige. 2020. “One of the Internet’s Oldest Fact-Checking Organizations Is Overwhelmed by Coronavirus Misinformation – and It Could Have Deadly Consequences.” Business Insider. (May 15, 2020).

- Bergen, Mark, Joshua Brustein, and Sarah Frier. 2020. “Tech’s Shadow Workforce Sidelined, Leaving Social Media to the Machines.” Bloomberg. (May 15, 2020).

- Waddell, Kaveh. 2020. “Facebook Approved Ads With Coronavirus Misinformation.” Consumer Reports. (May 15, 2020).

- Facebook. n.d. “Advertising Policies.” (May 15, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. (May 7, 2020).

- Satariano, Adam, and Davey Alba. 2020. “Burning Cell Towers, Out of Baseless Fear They Spread the Virus.” The New York Times. (May 15, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. (May 7, 2020).

- Angwin, Julia, and Madeleine Varner. 2017. “Facebook Enabled Advertisers to Reach ‘Jew Haters.’” ProPublica. (February 12, 2020).

- Sankin, Aaron. 2020. “Want to Find a Misinformed Public? Facebook’s Already Done It.” The Markup. (May 7, 2020).

- Bixby, Scott. 2020. “DeVos Has Deep Ties to Michigan Protest Group, But Is Quiet On Tactics.” The Daily Beast. (May 5, 2020); Stanley-Becker, Isaac, and Tony Romm. 2020. “Pro-Gun Activists Using Facebook Groups to Push Anti-Quarantine Protests.” Washington Post. (May 15, 2020).

- Zadrozny, Brandy, and Ben Collins. 2020. “Conservative Activist Family behind ‘Grassroots’ Anti-Quarantine Facebook Events.” NBC News. (May 15, 2020).

- Seitz, Amanda. 2020. “Virus Misinformation Flourishes in Online Protest Groups.” Associated Press. (May 15, 2020).

Human Rights: Our Best Toolbox for Platform Accountability

Viewed through the lens of human rights standards, the COVID-19 infodemic is exacerbated by social media platforms’ failure to align the full range of their business operations with a commitment to human rights. Over time, their surveillance-based business models can corrode a society’s information environment, poisoning the flow of information on which deliberative democracy depends as well as creating the conditions for human rights violations, or worse.

As globally dominant social media platforms, Facebook, Google, and Twitter have also played a positive role in advancing global human rights, which is important to acknowledge: They enable free expression and the global free flow of information by providing opportunities for a wide range of speech, about politics, health, and practically anything else. Laudably, they have taken significant, if uneven and imperfect, steps to shield users around the world from acts of government censorship and surveillance that violate human rights.1 Google and Facebook are both members of the Global Network Initiative (GNI), a multi-stakeholder organization that works with information and communications technology companies to protect users’ rights when they receive government demands to delete content, restrict access to service, and provide access to user information that violate international human rights standards for freedom of expression and privacy.2

Yet free speech clearly has a dark side: misinformation can be deadly. Given this contradiction, it is little wonder that these three companies have found themselves at the center of heated debates about online speech and democracy.

International human rights standards, grounded in the Universal Declaration of Human Rights (UDHR) as well as the U.S.-ratified International Covenant on Civil and Political Rights, offer a framework for companies to protect and respect the rights of users and communities in a manner that addresses key gaps in U.S. law. The U.S. Constitution is designed primarily to protect people from government abuse: The First and Fourth Amendments forbid government censorship and unlawful search and seizure. But when it comes to how companies’ own business decisions affect individuals and society, U.S. law has largely left them off the hook. The law allows commercial social media platforms to moderate and take down content according to their own self-determined rules.3 In essence, U.S. platforms have the right to restrict free expression and shape users’ access to information on their platforms without public accountability: The companies can formulate their private rules through an opaque process, change them frequently, and present them in a way that is hard for many users to understand, let alone abide by.

U.S. law has largely left companies off the hook when it comes to how their business decisions affect individuals and society.

Congress has, over the past century, passed many laws that forbid a vast range of abusive, exploitative, or discriminatory corporate behavior. But the question of how to regulate social media has been both difficult and contentious, given the technological, political, and constitutional complexities around anything having to do with speech, or framed as such. In our first report in this two-part series, we argued that changing the law to hold companies liable for content shared by users is not the answer, and that, because of technical realities, content moderation ultimately clashes with freedom of expression. The problems of private content moderation are compounded by amplification and targeting systems that shape the flow of information to users based on their personal traits or political beliefs. Yet at the same time, the three social media giants have failed to fully respect and protect users’ expression and information rights as articulated by international human rights standards. That failure has implications for other rights, including the rights to privacy, nondiscrimination, assembly and association, and economic, social, and cultural rights.

Buttressed by emerging accountability frameworks driven by institutional investors and civil society advocates who are pushing governments to adopt them, international human rights standards apply to companies as well as governments. They offer a corporate accountability toolbox that can be used by policymakers, institutional investors, and other affected stakeholders in any country. Yet this toolbox has so far been largely overlooked by policymakers seeking to hold companies accountable for their social impact in the United States.

The international human rights toolbox has so far been largely overlooked by U.S. policymakers seeking to hold companies accountable for their social impact.

For social media platforms, the fundamental rights to free expression (UDHR Article 19) and privacy (UDHR Article 12) must be protected and respected so that people can use technology effectively to exercise and defend other political, religious, economic, and social rights. The pandemic further underscores how violations of free expression and information rights can cause or contribute to the violation of other rights, such as the right to life, liberty, and security of person (UDHR Article 3). Similarly, violation of the right to privacy can set off a chain reaction leading to the violation of other rights, including the right to non-discrimination (UDHR Article 7, Article 23); freedom of thought (UDHR Article 18); freedom of association (UDHR Article 20); and the right to take part in the government of one’s country, directly or through freely chosen representatives (UDHR Article 21).4

The UN Guiding Principles on Business and Human Rights, approved in 2011 and co-sponsored by the U.S. government, have become the gold standard of “guidelines for States and companies to prevent, address and remedy human rights abuses committed in business operations.”5 For the tech sector, applying the principles means respecting users’ privacy and freedom of expression, as well as all other human rights that their business operations may affect, both online and offline. Respecting users’ freedom of expression does not preclude a private company from establishing rules and moderation processes, or even editorial guidelines related to the purpose and scope of the service (for example, if it is meant to serve a specific community or purpose, such as a platform for members of a given profession). But to be effective, human rights standards must be “implemented transparently and consistently with meaningful user and civil society input,” and must be accompanied by industry-wide oversight and accountability mechanisms.6

Ranking Digital Rights (RDR) offers a dynamic and regularly updated instruction manual for the steps companies can take today, even in the absence of clear regulation, to improve their respect for human rights. Since 2015, the RDR Index has evaluated the world’s most powerful digital platforms and telecommunications companies according to their disclosed commitments to respect users’ human rights.7 Grounded in the UDHR and the UN Guiding Principles, the RDR Index methodology comprises more than three dozen indicators in three categories: governance, freedom of expression, and privacy. For 2020 we have upgraded the RDR Index methodology, placing greater focus on rights like non-discrimination and the right to life, liberty, and security of person.8

The Impact of Targeted Advertising and Algorithmic Systems on Human Rights

In our previous report, we described the growing use of algorithms to moderate content even before the coronavirus outbreak, and how these algorithms make frequent errors that lead to the deletion of journalism, activism, and other speech that does not actually violate the platform rules. We also pointed to data from the past four iterations of the RDR Corporate Accountability Index. Only since 2018 have companies started to disclose any data about the volume and nature of content being removed for violating their rules, known variously as terms of service or community guidelines.9 But their disclosures about content moderation continue to fall very short of what free expression advocates and academic researchers believe is the minimum baseline standard for transparency and accountability as private arbiters of global online speech.10

In late 2019 and early 2020, our research team examined how transparent Facebook, Twitter, and YouTube are about how their automated algorithmic systems determine what users can post and share, what gets removed or blocked for violating the rules, and what information is shown to them most prominently through news feeds and recommendations. As we described in our last report, while Facebook, Google, and Twitter do not hide the fact that they use algorithms to shape content, they disclose little about how the algorithms actually work, what factors influence them, and how users can customize their own experiences.11

Pressure for change is growing with the public health crisis. Concerned that this opacity about what happens to content on platforms could be especially dangerous amid heated public debates about how best to fight the pandemic without destroying people’s livelihoods, 75 organizations and researchers released an open letter calling on platforms to preserve information “about what their systems are automatically blocking and taking down.” Without such information, according to the nonprofit Center for Democracy and Technology, which helped draft the letter, “it will be hard to assess the efficacy of efforts to share vital public health information while combating the spread of coronavirus scams and pandemic profiteering.”12

Yet greater transparency alone will not address fundamental problems related to the targeted advertising business model. Companies have experimented with controlling, or being more transparent about, advertisements placed by or in support of political candidates in elections. Facebook now publishes a library of political ads, but with a serious flaw: the archive depends on political advertisers complying with labeling requirements, and on the platform detecting those that do not. “Currently, dishonest companies can spend an unlimited amount of undeclared money in favor of a political agenda through the Facebook ads platform,” argue the authors of one recent academic paper. Nor does the Facebook Ad Library disclose information about how these advertisers may have deployed targeted advertising tools, or what types of audience characteristics were targeted.13

Algorithmic targeting systems only work because the companies that profit from them have access to unfathomable amounts of user data. Google (which owns YouTube), Facebook, Twitter, and other companies whose business models rely on targeted advertising have every incentive to hoover up every crumb of data they can access, and to create more opportunities to surveil our daily behavior. Thanks to complex automated processes that frequently aren’t fully understood even by their own creators, digital platforms can identify the individuals who are most likely to make a purchase, be convinced by a political message, or be susceptible to various types of misinformation. Such manipulation is a form of discrimination, in addition to being a clear violation of freedom of opinion and of information, particularly in the context of elections.14

In our first report in this series, we outlined the dangers to democracy when targeted advertising is manipulated for political gain during elections. Election experts have raised concerns that the same technologies and tactics will also be used to spread disinformation about the voting process.15 Both user-declared and algorithmically deduced political leanings can be exploited to target voters with paid, deliberate disinformation about polling places and times, or with equally damaging but unverifiable claims about an opponent’s character or the effects of their policy proposals. This practice, which relies on processing vast amounts of user information without explicit consent, violates the right to non-discrimination by determining what information a user sees based on disclosed or assumed protected traits, such as race, ethnicity, age, gender identity and expression, sexual orientation, health, or disability.16

But the violation of individual users’ rights is not the only harm done. Company practices, incentivized by targeted advertising business models, can also contribute to the violation of the rights of entire communities or categories of people. For example, catering to advertisers’ desire to reach potential job applicants who are demographically similar to their current workforce leads digital platforms to enable their advertiser clients to illegally target job ads by gender,17 race,18 ethnicity,19 and other protected attributes.20

The human rights risks associated with targeted advertising are clear. To ensure that social media platforms do not contribute to or enable the violation of users’ rights, laws and regulations must strictly limit how users can be targeted. They must also require much greater transparency about the nature and design of platforms’ algorithmic systems, processes, and business models.

Citations

- Crocker, Andrew et al. 2019. Who Has Your Back? Censorship Edition 2019. Electronic Frontier Foundation. (May 16, 2020).

- Global Network Initiative. 2020. “Global Network Initiative.” Global Network Initiative. (May 16, 2020).

- Specifically by Section 230 of the 1996 Communications Decency Act. For more, see Maréchal, Nathalie, and Ellery Roberts Biddle. 2020. It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge – A Report from Ranking Digital Rights. Washington, D.C.: New America. (May 7, 2020).

- Ranking Digital Rights. 2019. Human Rights Risk Scenarios: Targeted Advertising, Consultation Draft. Washington, D.C.: New America.

- OHCHR. 2011. Guiding Principles on Business and Human Rights: Implementing the United Nations “Respect, Protect and Remedy” Framework. Geneva: United Nations Office of the High Commissioner on Human Rights.

- U.N. Human Rights Council. 2018. Report of the Special Rapporteur on the Promotion and Protection of the Right to Freedom of Opinion and Expression. A/HRC/38/35, 14; Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

- Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, D.C.: New America.

- Ranking Digital Rights. 2020. 2020 Ranking Digital Rights Corporate Accountability Index Draft Indicators. Washington, D.C.: New America.

- Frenkel, Sheera. 2018. “Facebook Says It Deleted 865 Million Posts, Mostly Spam.” The New York Times. (May 16, 2020).

- Singh, Spandana. 2019. Assessing YouTube, Facebook and Twitter’s Content Takedown Policies. Washington, D.C.: New America. (May 16, 2020).

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington, D.C.: New America. www.rankingdigitalrights/pilot-report-2020

- Llansó, Emma J. 2020. “Understanding Automation and the Coronavirus Infodemic: What Data Is Missing?” Center for Democracy and Technology. (May 16, 2020); Radsch, Courtney J. 2020. “CPJ, Partners Call on Social Media and Content Sharing Platforms to Preserve Data.” (May 16, 2020).

- Silva, Márcio et al. 2020. “Facebook Ads Monitor: An Independent Auditing System for Political Ads on Facebook.” In Proceedings of The Web Conference 2020, Taipei, Taiwan: ACM, 224–34. (May 16, 2020).

- Information collected for targeted advertising purposes enables companies and advertisers to segment audiences in a very granular manner, tailoring messages to very specific attributes including preferences, habits, or traits. As this data is shared across the targeted advertising ecosystem, this in turn enables discrimination against internet users on the basis of protected traits and even the targeting of specific individuals. For more on the relationship between targeted advertising business models and discrimination, see Ranking Digital Rights. 2019. Human Rights Risk Scenarios: Targeted Advertising, Consultation Draft. Washington, D.C.: New America.

- Hasen, Richard L. 2020. “What Happens in November If One Side Doesn’t Accept the Election Results?” Slate. (May 16, 2020).

- U.N. General Assembly. 1948. Universal Declaration of Human Rights (217 [III] A), Article 2. Paris.

- Tobin, Ariana, and Jeremy B. Merrill. 2018. “Facebook Is Letting Job Advertisers Target Only Men.” ProPublica. (May 11, 2020).

- Angwin, Julia, and Terry Parris Jr. 2016. “Facebook Lets Advertisers Exclude Users by Race.” ProPublica. (May 11, 2020).

- Tobin, Ariana. 2018. “Facebook Promises to Bar Advertisers From Targeting Ads by Race Or….” ProPublica. (May 11, 2020).

- Ranking Digital Rights. 2019. Human Rights Risk Scenarios: Targeted Advertising, Consultation Draft. Washington, D.C.: New America.

Making All Ads “Honest” Through Transparency, Limited Targeting, and Enforcement

The targeted advertising debate in the United States has focused on the issue of online political advertising, particularly platforms’ responsibility to police the veracity of politicians’ claims, but this narrow focus is misguided, as are the proposed solutions. Aside from the inevitable First Amendment challenges that would arise from any government attempt to force platforms to fact-check political speech, it is doubtful that they would be able to do so accurately and fairly, especially at the scale at which they operate.1

The rules that govern broadcast and print advertising by political candidates and campaigns do not currently apply online, leaving platforms in the position of setting and enforcing the norms for a growing portion of American electoral discourse. The proposed Honest Ads Act, first introduced in Congress in 2017, would require online advertising platforms to maintain a “public file” database with detailed information about the political ads they serve, including who paid for each ad and how much it cost, similar to existing requirements for broadcast media. Critically, the Honest Ads Act goes beyond platforms’ current voluntary disclosures by including targeting information.2 The bill is unlikely to advance before the next election. For the time being, online political advertising is entirely unregulated, leaving platforms to set their own rules.

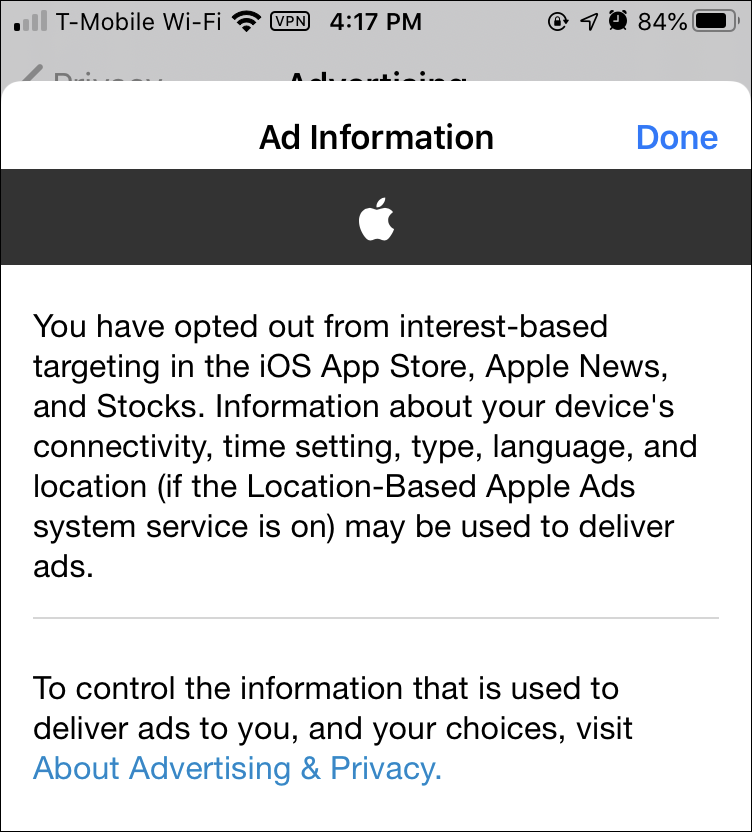

While Twitter and Google rolled out bans on targeting users by political affiliation in late 2019, Facebook clarified in early January 2020 that it would not follow suit, instead announcing changes that would make its political ad library easier to navigate and give users somewhat more control over how many political ads they see, albeit on an opt-out basis.3 As noted above, these voluntary ad libraries do not include targeting information, a key requirement of the proposed Honest Ads Act.4

Thus, as of May 2020, it remains possible to target users with political advertising on Facebook (but not on Twitter or any of Google’s platforms, including YouTube) in three powerful ways. First, individuals can be targeted if advertisers upload custom lists from off-platform data, including voter registration rolls, donor lists to parties and candidates, lists of subscribers to emails from parties and candidates, etc.5 Second, platforms can algorithmically generate new audiences that are similar to an uploaded custom list in terms of demographics, interests, and other data points. And third, advertisers can select which audience categories they want to reach, based on audience categories and profiles that Facebook has created from people’s online and offline activities, such as the content they post, the accounts and pages they follow, the content they like or otherwise engage with, their credit card purchases, and the known and/or inferred political affiliation of the other users they are connected to on the platform.

All three tactics allow political advertisers to send different, even contradictory, messages to different groups of users. In both 2016 and 2018, African American internet users in swing states (African Americans vote overwhelmingly Democratic)6 were targeted with ads designed to suppress voter turnout, whether by providing false information about when, where, and how to vote or by flooding their newsfeeds with negative ads about the advertiser’s opponent.7 The platforms now prohibit ads that mislead voters about the voting process, but it’s unclear how effectively this policy is enforced.

While enacting the Honest Ads Act and limiting targeting for political ads are important and urgent steps, Congress must go beyond the narrow definition of “electioneering communications” to safeguard democracy from algorithmic manipulation by both foreign and domestic actors.8 Currently, political advertisers self-identify as such, and there is no way of knowing how many neglect to do so and are consequently left out of the existing ad libraries. Any ad database required by the Honest Ads Act would run into the same enforcement problem. Moreover, drawing a clear line around political and issue ads is a vexing conundrum, as the controversy surrounding Twitter’s October 2019 decision to ban all political ads demonstrates. CEO Jack Dorsey’s initial announcement9 indicated that issue ads, including those from public-interest organizations, would be covered by the ban, but the final policy issued a few weeks later was narrower.10 No matter where the line is drawn, any database of political ads, as the Honest Ads Act would require, would miss a significant number of politically relevant paid messages, just as the current voluntary ad libraries do. This alone is reason enough to expand the advertising transparency requirement to all online ads.

In addition to these definitional and enforcement issues, the current infodemic has made it clear that ads need not be related to elections to raise serious concerns. Online advertising transparency requirements must go beyond the scope of the Honest Ads Act and include non-political ads as well. This will allow regulators as well as independent researchers to verify that platforms, and their advertisers, are complying with applicable laws and the platforms’ own rules. Such transparency would further enable credible, empirical research on the state of online advertising and its impact on political life, public health, and other important issues. Moreover, it would provide crucial oversight over platforms’ respect for the law (notably the Civil Rights Act, Fair Housing Act, and various public accommodation laws) and enforcement of their own rules.

As problematic as the rules themselves are, the companies’ uneven enforcement of their own rules exacerbates the extent to which people are being targeted and manipulated in ways that clearly violate users’ information and non-discrimination rights. None of Google, Facebook, or Twitter includes data about ad policy enforcement in the transparency reports they publish on the moderation of user-generated content. Recent reporting, including the aforementioned Consumer Reports experiment with ads containing clear coronavirus misinformation, demonstrates the inadequacy of Facebook’s ad review process. While investigative journalists have not exposed similar failures in Google’s or Twitter’s ad review systems, this should not be taken as evidence that these systems function well. More oversight is sorely needed, and a universal database for all online advertising would be a key step in that direction.

Citations

- For a discussion of this point, see Maréchal, Nathalie, and Ellery Roberts Biddle. 2020. It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge – A Report from Ranking Digital Rights. Washington, D.C.: New America. (May 7, 2020).

- Honest Ads Act, S. 1989, 115th Congress, 2017.

- Associated Press. 2020. “Facebook Refuses to Restrict Untruthful Political Ads and Micro-Targeting.” The Guardian. (May 16, 2020).

- However, Reddit’s recently introduced Political Ads Transparency Community does include targeting information. See Singh, Spandana. 2020. “Reddit’s Intriguing Approach to Political Advertising Transparency.” Slate. (May 18, 2020).

- The same data is used for direct mail, get-out-the-vote door-knocking, phone canvassing, and email blasts. See National Conference of State Legislatures. 2019. “Access To and Use Of Voter Registration Lists.” (May 17, 2020).

- Laird, Chryl, and Ismail White. 2020. “Why So Many Black Voters Are Democrats, Even When They Aren’t Liberal.” FiveThirtyEight. (May 16, 2020).

- Romm, Tony. 2018. “How Facebook and Twitter Are Rushing to Stop Voter Suppression Online for the Midterm Elections.” Washington Post. (May 17, 2020).

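- The Federal Election Commission defines “electioneering communications” as broadcast, cable, or satellite communications that refer to a clearly identified federal candidate, are publicly distributed within 30 days of a primary or 60 days of a general election, and are targeted to the relevant electorate; “persons, groups of persons or organizations, including corporations and labor organizations, may make electioneering communications.” See Federal Election Commission. n.d. “Electioneering Communications.” FEC.gov. (May 16, 2020).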

- Jack Dorsey (@jack). 2019. “‘We’ve Made the Decision to Stop All Political Advertising on Twitter Globally. We Believe Political Message Reach Should Be Earned, Not Bought. Why? A Few Reasons…’ / Twitter.” (May 16, 2020).

- Twitter. n.d. тАЬPolitical Content.тАЭ (May 16, 2020).

By Protecting Data, Federal Privacy Law Can Reduce Algorithmic Targeting and the Spread of Disinformation

A strong data privacy law, effectively enforced, can protect internet users from the discrimination inherent to automated content optimization and limit the viral spread of harmful messages. The way to achieve that is by strictly limiting data collection and retention to the absolute minimum that is required to deliver the service to the end-user, irrespective of the company’s business model or financial interests. As Alex Campbell wrote in Just Security: “Absent the detailed data on users’ political beliefs, age, location, and gender that currently guide ads and suggested content, disinformation has a higher chance of being lost in the noise.”1

The United States lacks a comprehensive federal privacy law governing the collection, processing, and retention of personal information, though there are sector-specific laws covering education, healthcare, and other areas.2 A strong federal privacy law, backed up by robust enforcement mechanisms, is perhaps the strongest tool at Congress’ disposal to stem the tide of online misinformation and dangerous speech by disrupting the algorithmic systems that amplify such content. This approach would also have the benefit of side-stepping the thornier issues related to free speech and the First Amendment.


But as things stand, targeted advertising companies are free to collect virtually any information they want and to use it however it benefits their bottom line. Facebook, Google, and Twitter hoover up massive amounts of data about internet users (both on their platforms and off). Indeed, not only do platforms track what users do while using their services, they also follow them around the internet and purchase data about their offline behavior from credit card companies and data brokers.3 The collected data becomes the core ingredient for building very powerful digital profiles of users, which advertisers and political operatives can then use to target groups and individuals, as in the pseudoscience example described previously. What’s worse, the tech giants do not clearly disclose exactly what they are doing with users’ data. In such conditions, the notion of user consent is meaningless.

RDR’s evaluation of the three American social media giants’ policies and disclosed practices is useful in clarifying just what needs to change, and how the companies should be regulated. Data from the 2019 RDR Index highlights the opacity of the major U.S. digital platforms when it comes to the collection, processing, and sharing of user information. Even when these companies do disclose what they collect, the scope of what is collected is staggering.

Scope and Purpose of Data Collection, Use, and Sharing

In evaluating companies for the RDR Index, we have examined whether companies clearly disclose why and how they collect user information, by which we mean any data that is connected to an identifiable person, or may be connected to such a person by combining datasets or utilizing data-mining techniques. We look for a commitment to limit collection of user information to what is directly relevant and necessary to accomplish the purpose of the service from the end user’s perspective.4 In our evaluation, we do not consider targeted advertising to be the purpose of the service: While the revenue it generates enables the company to provide the service, users would get the same benefit if the company made money differently. User information should not be collected for targeted advertising by default, though people can be given a choice to opt in.

Google, Facebook, and Twitter all track users across the internet: Using “cookies” and other tracking technologies embedded in many websites, they collect data about what web pages people visited and what they did there (purchased items, watched videos, etc.).5 The three companies all disclose and explain what types of user information they collect but are less clear about how, and none commit to collecting only data that is necessary to provide the service.6 Facebook, for instance, states that it collects “things you do and others do and provide,” including the “content, communications and other information” users provide when using Facebook products (for example when signing up for an account, posting text, videos or images, and using messaging functions). This can include the content of user posts, metadata, and more. Facebook also discloses that it collects device information, including location information. In short: If Facebook can capture a piece of information about you, it does.7

All three companies disclose some information about the types of entities they share user data with, but none names the specific recipients. Yet in the context of targeted advertising, it is especially important for companies to disclose to users specifically who their information is shared with, for any purpose that isn’t subject to legitimate law enforcement or national security limitations in accordance with human rights standards.8

While no company discloses enough about how it handles user information, Twitter deserves credit for having disclosed more than other U.S. platforms (Google, Facebook, Microsoft, and Apple) about its data handling policies, across all the user information indicators in the 2019 RDR Index.9

Federal privacy legislation should include strong data minimization and purpose limitation provisions: Users should not be able to opt in to discriminatory advertising or to the collection of data that would enable it.10 Any data processing that remains should be opt in. Using the service should not be contingent on giving up more data than that which is necessary to accomplish the purpose of the service. Crucially, the delivery of targeted advertising should not be considered the purpose of the service unless the service’s primary purpose is in fact clearly described as such by the company to the general public in its marketing and public communications. Companies should disclose to users and to the relevant regulatory agency what user information they collect, share, retain, and infer; for what purpose; and how long it is retained. Companies should only collect user information from third parties, or share user information with third parties, if the two companies have a vendor-contractor relationship and the sharing of this user information is directly relevant and necessary for the purpose of delivering a service to the user. Companies should allow users to obtain all of their user information (collected and inferred, broadly defined) that the company holds, in a structured data format. They should delete all user information after a user terminates their account or at the user’s request. Finally, the accuracy of disclosures and compliance with the above requirements should be independently audited.

Inferred Information and Targeting

In future versions of the RDR Index we will look for the same level of disclosure and commitment about what companies infer about users. Inference is a key way that user profiles are built. Companies perform big data analytics on their troves of collected user data in order to make predictions about the behaviors, preferences, and private lives of their users, and to then sort users into audience categories on that basis.11 Take, for example, the case of Aaron Sankin at The Markup. He doesn’t know exactly why his account was included in the pseudoscience audience category, but he speculates it was likely because he had conducted research about medical misinformation on Facebook, causing the company’s algorithms to assume he had an interest in pseudoscience.12

Targeted advertising should be allowed only if the default is that users are not targeted upon joining a service. Companies would be wise to avoid relying on targeted advertising as their sole source of revenue and consider contextual advertising and subscription models, among others. Users must be able to actively opt in to being targeted and able to fully control what information can be used to target them, if any.13 Users might choose to be targeted based on certain types of data but not others. Companies should disclose sufficient information to users and to regulators so that people can understand exactly how, why, and by whom they have been targeted, and regulators can track broader patterns to identify abusive practices.

In the first report in this series, we called on companies to publicly explain the content-shaping algorithms that determine what user-generated content users see, and the ad-targeting systems that determine who can pay to influence them. We also called on them to disclose their rules for user content, advertising content, and ad targeting; to explain how they enforce those rules; and to publish regular transparency reports containing data about the actions they take to enforce these rules. We further call on the U.S. Congress to enact legislation to require companies to provide this information to policymakers and the public as a first step toward greater accountability.14 These transparency measures are necessary for a more nuanced understanding of a complex and dysfunctional ecosystem but insufficient to the task of making our online ecosystem work for democracy and the public interest. For that, much more active regulatory intervention is needed, starting with the barely regulated targeted advertising industry.

Policymakers should not be convinced by tech giants’ claim that targeted advertising benefits internet users by showing them the ads that are most relevant to them. Targeted advertising is on by default on Facebook, Google, and Twitter. If users found targeted advertising as useful as companies say they do, many would choose to opt in if given the choice. While users can customize options for the types of ads they want to see, they can’t opt out of receiving tailored ads altogether. In the past year, Facebook has improved its disclosure of the options users have to remove categories of interests and pages they’ve visited, which constitute some but not all of the information used to customize the ads they see. Even where customization is an option, however, the settings can be hard to find.

A federal privacy law should also restrict the targeting options that platforms are allowed to offer to advertisers. Based on our research team’s examination of company policy disclosures across Facebook, Google, and Twitter, Facebook appears to have the fewest restrictions on ad targeting and the least transparency. It disclosed that users will be targeted with ads but did not disclose exactly which ad targeting parameters are prohibited. It disclosed that advertisers can tailor ads to custom audiences (lists of individuals that advertisers can upload to the platform) but are prohibited from using these targeting options “to discriminate against, harass, provoke, or disparage users or to engage in predatory advertising practices.”15 None of these terms are defined, and the company does not clarify how it would detect breaches of the policy or what the penalty for such breaches might be.

In addition to uploading their own custom audience lists, advertisers can also select from among Facebook’s algorithmically determined audience categories, which are based on profiles that Facebook has created from people’s online and offline activities. These profiles can include the content a user posts, the accounts and pages they follow, the content they like or otherwise engage with, and the known and/or inferred interests of the other users they are connected to on the platform. These targeting options, however, are only visible when logged into the platform and going through the process of placing ads. As a result, only Facebook account holders who take the time to investigate the platform’s audience categories can know what they are. This is how researchers and investigative journalists have discovered the existence of racist16 and otherwise problematic audience categories,17 alerting the public to the dark side of targeted advertising and leading companies to remove the offending categories. Despite calls from civil society (including RDR) to publish the list of available categories, companies have thus far declined to do so, making any kind of systematic oversight impossible.

The Challenge of Effective Enforcement

Privacy law is key to preventing targeted advertising systems from profiling and targeting people in dangerous and harmful ways. But a law is only as good as its enforcement. In that, the EU’s challenges in enforcing the General Data Protection Regulation (GDPR) offer important lessons. National-level data protection agencies are under-funded with massive case backlogs, and critics worry that fines are not high enough, nor are other penalties sufficiently punitive, to force a change in industry practices.18 Enforcement needs a bigger stick to protect privacy rights.

For example, since the GDPR went into force in 2018, only one major tech giant has been fined for a violation: In early 2019, Google was fined 50 million euros (about $54 million, which the New York Times estimated is about one-tenth of Google’s daily sales) for failing to adequately disclose how data is collected across its services for use in targeted advertising.19 A complaint filed against Facebook in May 2018 by privacy advocate Max Schrems argues that in order for users to even sign on to the company’s services (Facebook, Instagram, and WhatsApp), they are forced to agree to having their personal information harvested for targeted advertising. Such “forced consent” is illegal, the complaint argues, if the core purpose of the service is social networking (as the company states) and not targeted advertising.20 In contrast to some major multinational Asian and European internet, mobile, and telecommunications companies that disclose that they limit collection of user information to what is directly relevant and necessary to accomplish the purpose of the service, none of the U.S.-based companies evaluated in the 2019 RDR Index (including Facebook) were found to have done so.21 While digital marketers once fretted that the GDPR would render targeted advertising a shadow of its former self, that will only happen if the law is strictly interpreted by courts and rigorously enforced.22