It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge

Abstract

This report, the first in a two-part series, articulates the connection between surveillance-based business models and the health of democracy. Drawing from Ranking Digital Rights’s extensive research on corporate policies and digital rights, we examine two overarching types of algorithms, give examples of how these technologies are used both to propagate and prohibit different forms of online speech (including targeted ads), and show how they can cause or catalyze social harm, particularly in the context of the 2020 U.S. election. We also highlight what we don’t know about these systems, and call on companies to be much more transparent about how they work.

Acknowledgments

RDR Director Rebecca MacKinnon, Deputy Director Jessica Dheere, Research Director Amy Brouillette, Communications Manager Kate Krauss, Company Engagement Lead Jan Rydzak, and Communications Associate Hailey Choi also contributed to this report.

We wish to thank all the reviewers who provided their feedback on the report, including:

- Jen Daskal, Professor and Faculty Director, Tech, Law & Security Program, American University Washington College of Law

- Sharon Bradford Franklin, Policy Director, Open Technology Institute

- Brandi Geurkink, European Campaigner, Mozilla Foundation

- Spandana Singh, Policy Analyst, Open Technology Institute

- Joe Westby, Researcher, Technology and Human Rights, Amnesty International

Original art by Paweł Kuczyński.

We would also like to thank Craig Newmark Philanthropies for making this report possible.

For a full list of RDR funders and partners, please see: .

For more about RDR’s vision, impact, and strategy see: .

For more about New America, please visit www.newamerica.org.

For more about the Open Technology Institute, please visit www.newamerica.org/oti.

This work is licensed under the Creative Commons Attribution 4.0 International License. To view a copy of this license, please visit .

Executive Summary

Democracies are struggling to address disinformation, hate speech, extremism, and other problematic online speech without requiring levels of corporate censorship and surveillance that will violate users’ free expression and privacy rights. This report argues that instead of seeking to hold digital platforms liable for content posted by their users, regulators and advocates should focus on holding companies accountable for how content is amplified and targeted. It’s not just the content, but tech companies’ surveillance-based business models that are distorting the public sphere and threatening democracy.

The first in a two-part series aimed at U.S. policymakers and anybody concerned with the question of how internet platforms should be regulated, this report by Ranking Digital Rights (RDR) draws on new findings from a study examining company policies and disclosures about targeted advertising and algorithmic systems to propose more effective approaches to curtailing the most problematic online speech. Armed with five years of research on corporate policies that affect online speech and privacy, we make a case for a set of policy measures that will protect free expression while holding digital platforms much more accountable for the effects of their business models on democratic discourse.

We begin by describing two types of algorithms, content-shaping and content moderation algorithms, and how each can violate users’ freedom of expression and information as well as their privacy. While these algorithms can improve user experience, their ultimate purpose is to generate profits for the companies by keeping users engaged, showing them more ads, and collecting more data about them. That data is then used to further refine targeting in an endless iterative process.

Next, we give examples of the types of content that have sparked calls for content moderation and outline the risks posed to free expression by deploying algorithms to filter, remove, or restrict content. We emphasize that because these tools are unable to understand context, intent, news value, and other factors that are important to consider when deciding whether a post or an advertisement should be taken down, regulating speech can only result in a perpetual game of whack-a-mole and, while damaging democracy, will not fix the internet.

We then describe the pitfalls of the targeted advertising business model, which relies on invasive data collection practices and black-box algorithmic systems to create detailed digital profiles. Such profiling not only results in unfair (and sometimes even illegal) discrimination, but enables any organization or person who is allowed to buy ads on the platform to target specific groups of people who share certain characteristics with manipulative and often misleading messages. The implications for society are compounded by companies’ failure to conduct due diligence on the social impact of their targeted advertising systems, failure to impose and enforce rules that prevent malicious targeted manipulation, and failure to disclose enough information to users so that they can understand who is influencing what content they see online.

Nowhere in the U.S. have the effects of targeted advertising and misinformation been felt more strongly than in recent election cycles. Our next section describes how we are only beginning to understand how powerful these systems can be in shaping our information environment, and in turn our politics. Despite efforts to shut down foreign-funded troll farms and external interference in online political discourse, we note that companies remain unacceptably opaque about how we can otherwise be influenced and by whom. This opacity makes it impossible to have an informed discussion about solutions, and how best to regulate the industry.

We conclude the report with a warning against recent proposals to revoke or dramatically revise Section 230 of the 1996 Communications Decency Act (CDA), which protects companies from liability for content posted by users on their platforms. We believe that such a move would undermine American democracy and global human rights for two reasons. First, algorithmic and human content moderation systems are prone to error and abuse. Second, removing content prior to posting requires pervasive corporate surveillance that could contribute to even more thorough profiling and targeting, and would be a potential goldmine for government surveillance. That said, we believe that in addition to strong privacy regulation, companies must take immediate steps to maximize transparency and accountability about their algorithmic systems and targeted advertising business models.

The steps listed below should be legally mandated if companies do not implement them voluntarily. Once regulators and the American public have a better understanding of what happens under the hood, we can have an informed debate about whether to regulate the algorithms themselves, and if so, how.

Key recommendations for corporate transparency

- Companies’ rules governing content shared by users must be clearly explained and consistent with established human rights standards for freedom of expression.

- Companies should explain the purpose of their content-shaping algorithms and the variables that influence them so that users and the public can understand the forces that cause certain kinds of content to proliferate, and other kinds to disappear.

- Companies should enable users to decide whether and how algorithms can shape their online experience.

- Companies should be transparent about the ad targeting systems that determine who can pay to influence users.

- Companies should publish their rules governing advertising content (what can be advertised, how it can be displayed, what language and images are not permitted).

- All company rules governing paid and organic user-generated content must be enforced fairly according to a transparent process.

- People whose speech is restricted must have an opportunity to appeal.

- Companies must regularly publish transparency reports with detailed information about the outcomes of the steps taken to enforce the rules.

Introduction

Within minutes of the August 2019 mass shooting in Odessa, Texas, social media was ablaze with safety warnings and messages of panic about the attack. The shooter, later identified as Seth Ator, had opened fire from inside his car, and then continued his spree from a stolen postal service van, ultimately killing seven people and injuring 25. Not 30 minutes after the shooting, a tweet alleging that Ator was "a Democrat Socialist who had a Beto sticker on his truck," suggesting a connection between the shooter and former Rep. Beto O’Rourke (D-Texas), went viral. The post quickly spread to other social media sites, accompanied by a rear angle photo of a white minivan adorned with a Beto 2020 sticker. A cascade of speculative messages followed, alleging deeper ties between the shooter and O’Rourke, who was running for the Democratic presidential nomination at the time.

But the post was a fabrication. The O'Rourke campaign swiftly spoke out, denying any link between the candidate and the shooter and condemning social media platforms for hosting messages promoting the false allegation. Local law enforcement confirmed that neither of the vehicles driven by Ator had Beto stickers on them, and that Ator was registered as "unaffiliated," undercutting follow-on social media messages that called Ator a "registered Democrat." Twitter temporarily suspended the account, but much of the damage had already been done.

Within just two days, the post became "Google’s second-highest trending search query related to O’Rourke," according to the Associated Press.1 Within a week, it had garnered 11,000 retweets and 15,000 favorites.2

What made this post go viral? We wish we knew.

One might ask why Twitter or other platforms didn’t do more to stop these messages from spreading, as the O’Rourke campaign did. The incident raises questions about how these companies set and enforce their rules, what messages should or shouldn’t be allowed, and the extent to which internet platforms should be held liable for users’ behavior.

But we also must ask what is happening under the hood of these incredibly powerful platforms. Did this message circulate so far and fast thanks only to regular users sharing and re-sharing it? Or did social media platforms’ algorithms (technical systems that can gauge a message’s likely popularity, and sometimes cause it to go viral) exert some influence? The technology underlying social media activity is largely opaque to the public and policymakers, so we cannot know for sure; this opacity is a major problem that we discuss later in the report. But research indicates that in a scenario like this one, algorithmic systems can drive the reach of a message by targeting it to people who are most likely to share it, and thus influence the viewpoints of thousands or even millions of people.3

As a society, we are facing a problem stemming not just from the existence of disinformation and violent or hateful speech on social media, but from the systems that make such speech spread to so many people. We know that when a piece of content goes viral, it may not be propelled by genuine user interest alone. Virality is often driven by corporate algorithms designed to prioritize views or clicks, in order to raise the visibility of content that appears to inspire user interest. Similarly, when a targeted ad reaches a certain voter, and influences how or whether they vote, it is rarely accidental. It is the result of sophisticated systems that can target very specific demographics of voters in a particular place.4

Why do companies manipulate what we see? Part of their goal is to improve user experience: if we had to navigate all the information that comes across our social media feeds with no curation, we would indeed be overwhelmed. But companies build algorithms not simply to respond to our interests and desires but to generate profit. The more we engage, the more data we give up. The more data they have, the more decisions they can make about what to show us, and the more money they make through targeted advertising, including political ads.5

The predominant business models of the most powerful American internet platforms are surveillance-based. Built on a foundation of mass user-data collection and analysis, they are part of a market ecosystem that Harvard Professor Shoshana Zuboff has labeled surveillance capitalism.6 Evidence suggests that surveillance-based business models have driven the distortion of our information environment in ways that are bad for us individually and potentially catastrophic for democracy.7 Between algorithmically-induced filter bubbles, disinformation campaigns foreign and domestic, and political polarization exacerbated by targeted advertising, our digital quasi-public sphere has become harder to navigate and more harmful to the democratic process each year. Yet policymakers in the United States and abroad have been primarily focused on purging harmful content from social media platforms, rather than addressing the underlying technical infrastructure and business models that have created an online media ecosystem that is optimized for the convenience of advertisers rather than the needs of democracy.

Some foreign governments have responded to these kinds of content by directly regulating online speech and imposing censorship requirements on companies. In contrast, the First Amendment of the U.S. Constitution forbids the government from passing laws that ban most types of speech directly, and companies are largely free to set and enforce their own rules about what types of speech or behaviors are permitted on their platforms.8 But as private rules and enforcement mechanisms have failed to curb the types of online extremism, hate speech, and disinformation that many Americans believe are threatening democracy, pressure is mounting on Congress to change the law and hold internet platforms directly liable for the consequences of speech appearing on their platforms.

Policymakers, civil society advocates, and tech executives alike have spent countless hours and resources developing and debating ways that companies could or should remove violent extremist content, reduce viral disinformation (often described as “coordinated inauthentic behavior”) online, and minimize the effects of advertising practices that permit the intentional spread of falsehoods. Here too, technical solutions like algorithmic systems are often deployed. But efforts to identify and remove content that is either illegal or that violates a company’s rules routinely lead to censorship that violates users’ civil and political rights.

Today’s technology is not capable of eliminating extremism and falsehood from the internet without stifling free expression to an unacceptable degree.

There will never be a perfect solution to these challenges, especially not at the scale at which the major tech platforms operate.9 But we suspect that if they changed the systems that decide so much of what actually happens to our speech (paid and unpaid alike) once we post it online, companies could significantly reduce the problems that disinformation and hateful content often create.

At the moment, determining exactly how to change these systems requires insight that only the platforms possess. Very little is publicly known about how these algorithmic systems work, yet the platforms know more about us each day, as they track our every move online and off. This information asymmetry impedes corporate accountability and effective governance.10

This report, the first in a two-part series, articulates the connection between surveillance-based business models and the health of democracy. Drawing from Ranking Digital Rights’s extensive research on corporate policies and digital rights, we examine two overarching types of algorithms, give examples of how these technologies are used both to propagate and prohibit different forms of online speech (including targeted ads), and show how they can cause or catalyze social harm, particularly in the context of the 2020 U.S. election. We also highlight what we don’t know about these systems, and call on companies to be much more transparent about how they work.

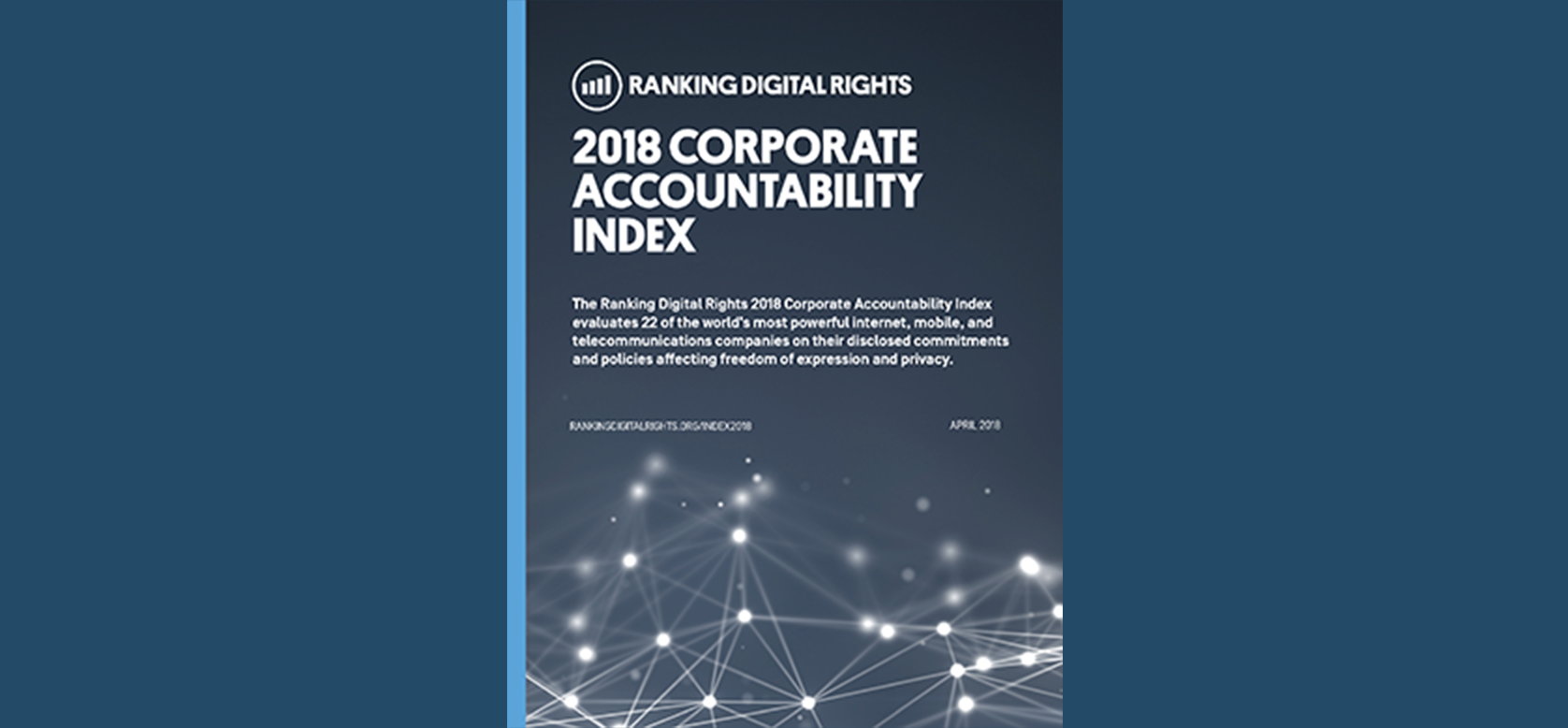

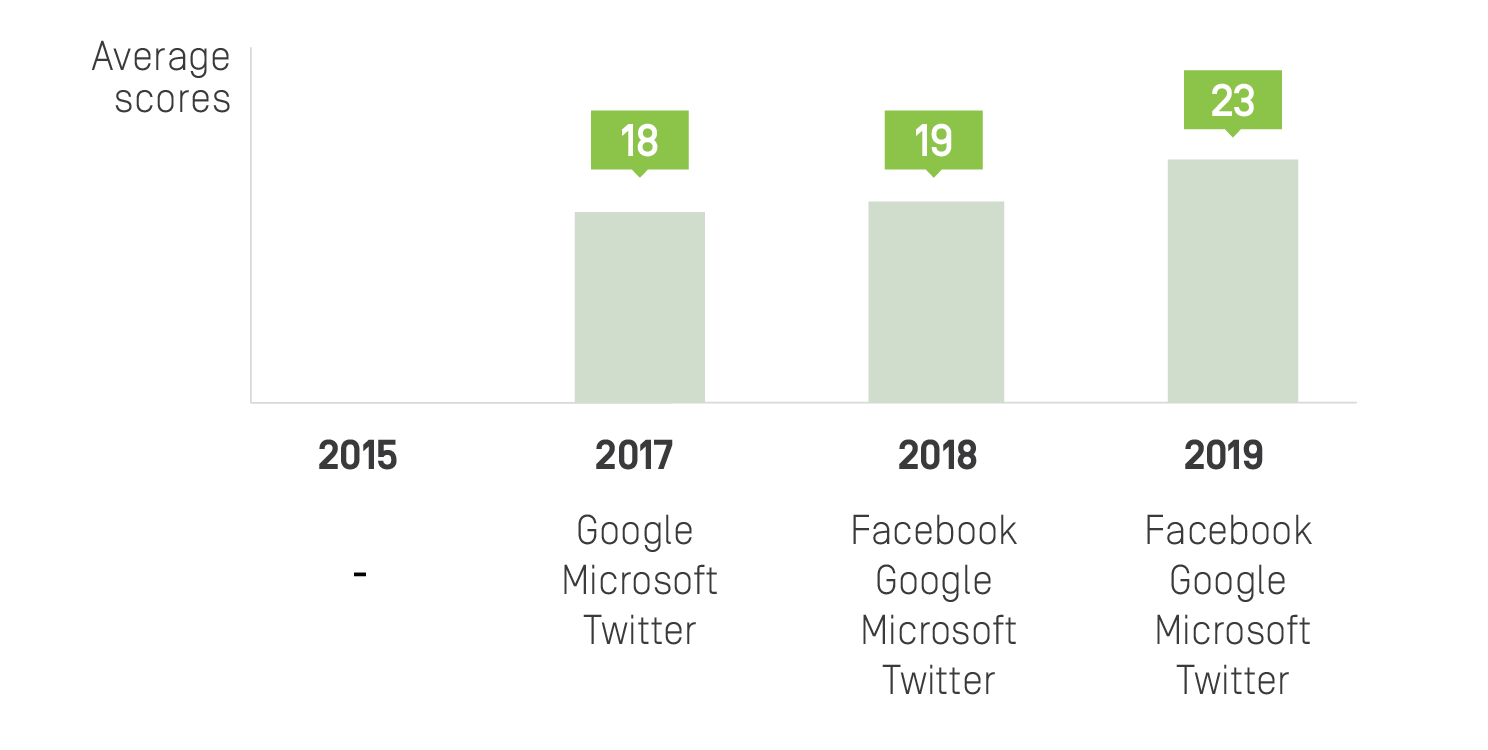

Since 2015, the Ranking Digital Rights (RDR) Corporate Accountability Index has measured how transparent companies are about their policies and practices that affect online freedom of expression and privacy. Despite measurable progress, most companies still fail to disclose basic information about how they decide what content should appear on their platforms, how they collect and monetize user information, and what corporate governance processes guide these decisions. This report offers recommendations for companies and regulators that would make our online ecosystem work for democracy rather than undermine it.

The second installment in this series will dive deeper into our solutions, which focus on corporate governance. We will look at the ways tech companies should anticipate and address the societal harms that their products cause or contribute to, and how society can hold the private sector accountable through elected government and democratic institutions. Strong privacy legislation will be a key first step towards curbing the spread of extremism and disinformation, by placing limits on whether and how vast amounts of personal data can be collected and used to target people. This must come hand in hand with greater transparency about how companies rule themselves, and how they make decisions that affect us all. Only then will citizens and their elected representatives have the tools and information they need to hold tech companies accountable (including with regulation) in a way that advances the public interest.

Citations

- Weissert, William, and Amanda Seitz. 2019. “False Claims Blur Line between Mass Shootings, 2020 Politics.” AP NEWS.

- Kelly, Makena. 2019. “Facebook, Twitter, and Google Must Remove Disinformation, Beto O’Rourke Demands.” The Verge.

- Vaidhyanathan, Siva. 2018. Antisocial Media: How Facebook Disconnects Us and Undermines Democracy. New York, NY: Oxford University Press.

- Amnesty International. 2019. Surveillance Giants: How the Business Model of Google and Facebook Threatens Human Rights. London: Amnesty International.

- Gary, Jeff, and Ashkan Soltani. 2019. ŌĆ£First Things First: Online Advertising Practices and Their Effects on Platform Speech.ŌĆØ Knight First Amendment Institute.

- Zuboff, Shoshana. 2019. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. First edition. New York: PublicAffairs.

- O’Neil, Cathy. 2016. Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy. First edition. New York: Crown.

- Companies are required to police and remove content that is not protected by the First Amendment, including child sexual abuse materials and content that violates copyright laws.

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

- Zuboff, Shoshana. 2019. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. First edition. New York: PublicAffairs.

A Tale of Two Algorithms

In recent years, policymakers and tech executives alike have begun to invoke the almighty algorithm as a solution for controlling the harmful effects of online speech. Acknowledging the pitfalls of relying primarily on human content moderators, technology companies promote the idea that a technological breakthrough, something that will automatically eliminate the worst kinds of speech, is just around the corner.1

But the public debate often conflates two very different types of algorithmic systems that play completely different roles in policing, shaping, and amplifying online speech.2

First, there are content-shaping algorithms. These systems determine the content that each individual user sees online, including user-generated or organic posts and paid advertisements. Some of the most visible examples of content-shaping algorithms include Facebook’s News Feed, Twitter’s Timeline, and YouTube’s recommendation engine.3 Algorithms also determine which users should be shown a given ad. The advertiser usually sets the targeting parameters (such as demographics and presumed interests), but the platform’s algorithmic systems pick the specific individuals who will see the ad and determine the ad’s placement within the platform. Both kinds of personalization are only possible because of the vast troves of detailed information that the companies have accumulated about their users and their online behavior, often without the knowledge or consent of the people being targeted.4

While companies describe such algorithms as matching users with the content that is most relevant to them, this relevance is measured by predicted engagement: how likely users are to click, comment on, or share a piece of content. Companies make these guesses based on factors like users’ previous interaction with similar content and the interactions of other users who are similar to them. The more accurate these guesses are, the more valuable the company becomes for advertisers, leading to ever-increasing profits for internet platforms. This is why mass data collection is so central to Big Tech’s business models: companies need to surveil internet users in order to make predictions about their future behavior.5
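To make the idea of ranking by predicted engagement concrete, here is a minimal sketch in Python. It is an illustration only, not any platform's actual system: the `Post` fields, the weights, and the example probabilities are all hypothetical, and real systems estimate these probabilities from large volumes of behavioral data rather than taking them as given.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    post_id: str
    p_click: float    # assumed: predicted probability the user clicks
    p_comment: float  # assumed: predicted probability the user comments
    p_share: float    # assumed: predicted probability the user shares


# Hypothetical weights: this sketch assumes shares and comments are
# valued more than clicks. Real platforms do not publish such values.
WEIGHTS = {"click": 1.0, "comment": 2.0, "share": 3.0}


def predicted_engagement(post: Post) -> float:
    """Combine the predicted interactions into a single relevance score."""
    return (
        WEIGHTS["click"] * post.p_click
        + WEIGHTS["comment"] * post.p_comment
        + WEIGHTS["share"] * post.p_share
    )


def rank_feed(posts: List[Post]) -> List[Post]:
    """Order a user's feed from highest to lowest predicted engagement."""
    return sorted(posts, key=predicted_engagement, reverse=True)


# Made-up example: the post most likely to be shared rises to the top,
# whether or not it is accurate.
EXAMPLE_POSTS = [
    Post("local_news", p_click=0.10, p_comment=0.01, p_share=0.01),
    Post("outrage_rumor", p_click=0.30, p_comment=0.10, p_share=0.20),
    Post("family_photo", p_click=0.20, p_comment=0.05, p_share=0.02),
]

if __name__ == "__main__":
    for post in rank_feed(EXAMPLE_POSTS):
        print(post.post_id, round(predicted_engagement(post), 2))
```

Note that nothing in the scoring function looks at what a post says. In this sketch, as in the description above, "relevance" is purely a function of how users are expected to react.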

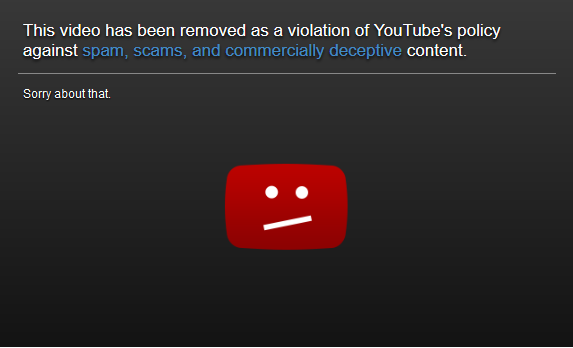

Second, we have content moderation algorithms, built to detect content that breaks the company’s rules and remove it from the platform. Companies have made tremendous investments in these technologies. They are increasingly able to identify and remove some kinds of content without human involvement, but this approach has limitations.6

Content moderation algorithms work best when they have a hard and fast rule to follow. This works well when seeking to eliminate images of a distinct symbol, like a swastika. But machine-driven moderation becomes more difficult, if not impossible, when content is violent, hateful, or misleading and yet has some public interest value. Companies are, in effect, adjudicating such content, but this requires the ability to reason, to employ careful consideration of context and nuance. Only humans with the appropriate training can make these kinds of judgments; this is beyond the capability of automated decision-making systems.7 Thus, in many cases, human reviewers remain involved in the moderation process. The consequences that this type of work has had for human reviewers have become an important area of study unto themselves, but lie beyond the scope of this report.8

Both types of systems are extraordinarily opaque, and thus unaccountable. Companies can and do change their algorithms anytime they want, without any legal obligation to notify the public. While content-shaping and ad-targeting algorithms work to show you posts and ads that they think are most relevant to your interests, content moderation processes (including algorithms) work alongside this stream of content, doing their best to identify and remove those posts that might cause harm. Let’s look at some examples of these dynamics in real life.

Citations

- Harwell, Drew. 2018. “AI Will Solve Facebook’s Most Vexing Problems, Mark Zuckerberg Says. Just Don’t Ask When or How.” Washington Post.

- It is worth noting that terms like algorithms, machine learning, and artificial intelligence have very specific meanings in computer science, but are used more or less interchangeably in policy discussions to refer to computer systems that use Big Data analytics to perform tasks that humans would otherwise do. Perhaps the most useful non-technical definition of an algorithm is Cathy O’Neil’s: “Algorithms are opinions embedded in code” (see O’Neil, Cathy. 2017. The Era of Blind Faith in Big Data Must End. ).

- Singh, Spandana. 2019. Rising Through the Ranks: How Algorithms Rank and Curate Content in Search Results and on News Feeds. Washington D.C.: New America’s Open Technology Institute.

- Singh, Spandana. 2020. Special Delivery: How Internet Platforms Use Artificial Intelligence to Target and Deliver Ads. Washington D.C.: New America’s Open Technology Institute.

- Zuboff, Shoshana. 2019. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. First edition. New York: PublicAffairs.

- Singh, Spandana. 2019. Everything in Moderation: An Analysis of How Internet Platforms Are Using Artificial Intelligence to Moderate User-Generated Content. Washington D.C.: New America’s Open Technology Institute.

- Gray, Mary L., and Siddharth Suri. 2019. Ghost Work: How to Stop Silicon Valley from Building a New Global Underclass. Boston: Houghton Mifflin Harcourt; Gorwa, Robert, Reuben Binns, and Christian Katzenbach. 2020. “Algorithmic Content Moderation: Technical and Political Challenges in the Automation of Platform Governance.” Big Data & Society 7(1): 205395171989794.

- Newton, Casey. 2020. “YouTube Moderators Are Being Forced to Sign a Statement Acknowledging the Job Can Give Them PTSD.” The Verge; Roberts, Sarah T. 2019. Behind the Screen: Content Moderation in the Shadows of Social Media. New Haven: Yale University Press.

Russian Interference, Radicalization, and Dishonest Ads: What Makes Them So Powerful?

Russian interference in recent U.S. elections and online radicalization by proponents of violent extremism are just two recent, large-scale examples of the content problems that we reference above.

Following the 2016 election, it was revealed that Russian government actors had attempted to influence U.S. election results by promoting false online content (posts and ads alike) and using online messaging to stir tensions between different voter factions. These influence efforts, alongside robust disinformation campaigns run by domestic actors,1 were able to flourish and reach millions of voters (and perhaps influence their choices) in an online environment where content-shaping and ad-targeting algorithms play the role of human editors. Indeed, it was not the mere existence of misleading content that interfered with people’s understanding of what was true about each candidate and their positions. It was the reach of these messages, enabled by algorithms that selectively targeted the voters whom they were most likely to influence, in the platforms’ estimation.2

The revelations set in motion a frenzy of content restriction efforts by major tech companies and fact-checking initiatives by companies and independent groups alike,3 alongside Congressional investigations into the issue. Policymakers soon demanded that companies rein in online messages from abroad meant to skew a voter’s perspective on a candidate or issue.

How would companies achieve this? With more algorithms, they said.4 Alongside changes in policies regarding disinformation, companies soon adjusted their content moderation algorithms to better identify and weed out harmful election-related posts and ads. But the scale of the problem all but forced them to stick to technical solutions, with the same limitations as those that caused these messages to flourish in the first place.

Content moderation algorithms are notoriously difficult to implement effectively, and often create new problems. Academic studies published in 2019 found that algorithms trained to identify hate speech for removal were more likely to flag social media content created by African Americans, including posts using slang to discuss contentious events and personal experiences related to racism in America.5 While companies are free to set their own rules and take down any content that breaks those rules, these kinds of removals are in tension with U.S. free speech values, and have elicited the public blowback to match.

Content restriction measures have also inflicted collateral damage on unsuspecting users outside the United States with no discernible connection to disinformation campaigns. As companies raced to reduce foreign interference on their platforms, social network analyst Lawrence Alexander identified several users on Twitter and Reddit whose accounts were suspended simply because they happened to share some of the key characteristics of disinformation purveyors.

One user had even tried to notify Twitter of a pro-Kremlin campaign, but ended up being banned himself. “[This] quick-fix approach to bot-hunting seemed to have dragged a number of innocent victims into its nets,” wrote Alexander, in a research piece for Global Voices. For one user who describes himself in his Twitter profile as an artist and creator of online comic books, “it appears that the key ‘suspicious’ thing about their account was their location: Russia.”6

The lack of corporate transparency regarding the full scope of disinformation and malicious behaviors on social media platforms makes it difficult to assess how effective these efforts actually are.

For four years, RDR has tracked whether companies publish key information about how they enforce their content rules. In 2015, none of the companies we evaluated published any data about the content they removed for violating their platform rules.7 Four years later, we found that Facebook, Google, Microsoft, and Twitter published at least some information about their rules enforcement,8 including in transparency reports.9 But this information still doesn’t demonstrate how effective their content moderation mechanisms have actually been in enforcing their rules, or how often acceptable content gets caught up in their nets.

Companies have also deployed automated systems to review election-related ads, in an effort to better enforce their policies. But these efforts too have proven problematic. Various entities, ranging from media outlets10 to LGBTQ-rights groups11 to Bush’s Baked Beans,12 have reported having their ads rejected for violating election-ad policies, despite the fact that their ads had nothing to do with elections.13 Yet companies’ disclosures about how they police ads are even less transparent than those pertaining to user-generated content, and there’s no way to know how effective these policing mechanisms have been in enforcing the actual rules as intended.14

The issue of online radicalization is another area of concern for U.S. policymakers, in which both types of algorithms have been in play. Videos and social media channels enticing young people to join violent extremist groups or to commit violent acts became remarkably easy to stumble upon online, due in part to what seems to be these groups’ savvy understanding of how to make algorithms that amplify and spread content work to their advantage.15 Online extremism and radicalization are very real problems that the internet has exacerbated. But efforts to address this problem have led to unjustified censorship.

Widespread concern that international terrorist organizations were recruiting new members online has led to the creation of various voluntary initiatives, including the Global Internet Forum to Counter Terrorism (GIFCT), which helps companies jointly assess content that has been identified as promoting or celebrating terrorism.

The GIFCT has built an industry-wide database of digital fingerprints or “hashes” for such content. Companies use these hashes to filter offending content, often prior to upload. As a result, any errors made in labeling content as terrorist in this database16 are replicated on all participating platforms, leading to the censorship of photos and videos containing speech that should be protected under international human rights law.
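
The replication effect described above can be illustrated with a minimal sketch. It is not the GIFCT’s actual system: the class names, the label, and the use of SHA-256 as a fingerprint are all assumptions made for illustration (real deployments may use perceptual hashes that also match near-duplicates). The point is only that a single mislabeled entry in a shared database blocks the same file on every platform that consults it.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Hypothetical fingerprint: a SHA-256 digest of the raw bytes."""
    return hashlib.sha256(content).hexdigest()


class SharedHashDatabase:
    """Illustrative stand-in for an industry-wide hash database."""

    def __init__(self):
        self._labels = {}

    def add(self, content: bytes, label: str) -> None:
        self._labels[fingerprint(content)] = label

    def lookup(self, content: bytes):
        # Returns the stored label, or None if the content is unknown.
        return self._labels.get(fingerprint(content))


class Platform:
    """A participating platform that filters uploads against the shared database."""

    def __init__(self, name: str, shared_db: SharedHashDatabase):
        self.name = name
        self.shared_db = shared_db
        self.published = []

    def try_upload(self, content: bytes) -> bool:
        # Filtering happens before publication, so a match means
        # the content never appears on the platform at all.
        if self.shared_db.lookup(content) is not None:
            return False
        self.published.append(content)
        return True


shared_db = SharedHashDatabase()

# A labeling error: footage documenting violence is filed as terrorist content.
documentation_video = b"footage documenting an attack on civilians"
shared_db.add(documentation_video, "terrorist content")

platforms = [Platform(n, shared_db) for n in ("platform_a", "platform_b", "platform_c")]

# The single mislabeled entry blocks the upload everywhere.
results = {p.name: p.try_upload(documentation_video) for p in platforms}

# Content that is not in the database is unaffected.
unrelated_ok = platforms[0].try_upload(b"a birthday video")
```

In this sketch no platform re-examines the label it inherits, which is why one upstream mistake becomes a removal on every participating service.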

Thousands of videos and photos from the Syrian civil war have disappeared in the course of these efforts, including videos that one day could be used as evidence against perpetrators of violence. No one knows for sure whether these videos were removed because they matched a hash in the GIFCT database, because they were flagged by a content moderation algorithm or human reviewer, or some other reason. The point is that this evidence is often impossible to replace. But little has been done to change the way this type of content is handled, despite its enormous potential evidentiary value.17

In an ideal world, violent extremist messages would not reach anyone. But the public safety risks that these carry rise dramatically when such messages reach tens of thousands, or even millions, of people.

The same logic applies to disinformation targeted at voters. Scale matters: the societal impact of a single message or video rises exponentially when a powerful algorithm is driving its distribution. Yet the solutions for these problems that we have seen companies, governments, and other stakeholders put forth focus primarily on eliminating content itself, rather than altering the algorithmic engines that drive its distribution.

Citations

- Benkler, Yochai, Rob Faris, and Hal Roberts. 2018. Network Propaganda: Manipulation, Disinformation, and Radicalization in American Politics. New York, NY: Oxford University Press.

- U.S. Senate Select Committee on Intelligence. 2019a. Report of the Select Committee on Intelligence United States Senate on Russian Active Measures Campaigns and Interference in the 2016 U.S. Election Volume 1: Russian Efforts against Election Infrastructure. Washington, D.C.: U.S. Senate; U.S. Senate Select Committee on Intelligence. 2019b. Report of the Select Committee on Intelligence United States Senate on Russian Active Measures Campaigns and Interference in the 2016 U.S. Election Volume 2: Russia’s Use of Social Media. Washington, D.C.: U.S. Senate.

- Many independent fact-checking efforts stemmed from this moment, but it is difficult to assess their effectiveness.

- Harwell, Drew. 2018. “AI Will Solve Facebook’s Most Vexing Problems, Mark Zuckerberg Says. Just Don’t Ask When or How.” Washington Post.

- Ghaffary, Shirin. 2019. “The Algorithms That Detect Hate Speech Online Are Biased against Black People.” Vox; Sap, Maarten et al. 2019. “The Risk of Racial Bias in Hate Speech Detection.” In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 1668–78; Davidson, Thomas, Debasmita Bhattacharya, and Ingmar Weber. 2019. “Racial Bias in Hate Speech and Abusive Language Detection Datasets.” In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 25–35.

- Alexander, Lawrence. 2018. “In the Fight against Pro-Kremlin Bots, Tech Companies Are Suspending Regular Users.” Global Voices.

- Several companies (including Facebook, Google, and Twitter) did publish some information about content they removed as a result of a government request or a copyright claim. Ranking Digital Rights. 2015. Corporate Accountability Index. Washington, DC: New America.

- Ranking Digital Rights. 2019. Corporate Accountability Index. Washington, DC: New America.

- Transparency reports contain aggregate data about the actions that companies take to enforce their rules. See Budish, Ryan, Liz Woolery, and Kevin Bankston. 2016. The Transparency Reporting Toolkit. Washington D.C.: New America’s Open Technology Institute.

- Merrill, Jeremy B., and Ariana Tobin. 2018. “Facebook’s Screening for Political Ads Nabs News Sites Instead of Politicians.” ProPublica.

- Rosenberg, Eli. “Facebook Blocked Many Gay-Themed Ads as Part of Its New Advertising Policy, Angering LGBT Groups.” Washington Post.

- Mak, Aaron. 2018. “Facebook Thought an Ad From Bush’s Baked Beans Was ‘Political’ and Removed It.” Slate Magazine.

- In a 2018 experiment, Cornell University professor Nathan Matias and a team of colleagues tested the two systems by submitting several hundred software-generated ads that were not election-related, but contained some information that the researchers suspected might get caught in the companies’ filters. The ads were primarily related to national parks and Veterans’ Day events. While all of the ads were approved by Google, 11 percent of the ads submitted to Facebook were prohibited, with a notice citing the company’s election-related ads policy. See Matias, J. Nathan, Austin Hounsel, and Melissa Hopkins. 2018. “We Tested Facebook’s Ad Screeners and Some Were Too Strict.” The Atlantic.

- Our research found that while Facebook, Google and Twitter did provide some information about their processes for reviewing ads for rules violations, they provided no data about what actions they actually take to remove or restrict ads that violate ad content or targeting rules once violations are identified. And because none of these companies include information about ads that were rejected or taken down in their transparency reports, it’s impossible to evaluate how well their internal processes are working. Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

- Popken, Ben. 2018. “As Algorithms Take over, YouTube’s Recommendations Highlight a Human Problem.” NBC News; Marwick, Alice, and Rebecca Lewis. 2017. Media Manipulation and Disinformation Online. New York, NY: Data & Society Research Institute; Caplan, Robin, and danah boyd. 2016. Who Controls the Public Sphere in an Era of Algorithms? New York, NY: Data & Society Research Institute.

- Companies take different approaches to defining what constitutes “terrorist” content. This is a highly subjective exercise.

- Biddle, Ellery Roberts. “‘Envision a New War’: The Syrian Archive, Corporate Censorship and the Struggle to Preserve Public History Online.” Global Voices; MacDonald, Alex. “YouTube Admits ‘wrong Call’ over Deletion of Syrian War Crime Videos.” Middle East Eye.

Algorithmic Transparency: Peeking Into the Black Box

While we know that algorithms are often the underlying cause of virality, we don’t know much more: corporate norms fiercely protect these technologies as private property, leaving them insulated from public scrutiny, despite their immeasurable impact on public life.

Since early 2019, RDR has been researching the impact of internet platforms’ use of algorithmic systems, including those used for targeted advertising, and how companies should govern these systems.1 With some exceptions, we found that companies largely failed to disclose sufficient information about these processes, leaving us all in the dark about the forces that shape our information environments. Facebook, Google, and Twitter do not hide the fact that they use algorithms to shape content, but they are less forthcoming about how the algorithms actually work, what factors influence them, and how users can customize their own experiences.2

U.S. tech companies have also avoided publicly committing to upholding international human rights standards for how they develop and use algorithmic systems. Google, Facebook, and other tech companies are instead leading the push for ethical or responsible artificial intelligence as a way of steering the discussion away from regulation.3 These initiatives lack established, agreed-upon principles, and are neither legally binding nor enforceable, in contrast to international human rights doctrine, which offers a robust legal framework to guide the development and use of these technologies.

Grounding the development and use of algorithmic systems in human rights norms is especially important because tech platforms have a long record of launching new products without considering the impact on human rights.4 Neither Facebook, Google, nor Twitter discloses any evidence that it conducts human rights due diligence on its use of algorithmic systems or on its use of personal information to develop and train them. Yet researchers and journalists have found strong evidence that algorithmic content-shaping systems that are optimized for user engagement prioritize controversial and inflammatory content.5 This can help foster online communities centered around specific ideologies and conspiracy theories, whose members further radicalize each other and may even collaborate on online harassment campaigns and real-world attacks.6

One example is YouTube’s video recommendation system, which has come under heavy scrutiny for its tendency to promote content that is extreme, shocking, or otherwise hard to look away from, even when a user starts out by searching for information on relatively innocuous topics. When she looked into the issue ahead of the 2018 midterm elections, University of North Carolina sociologist Zeynep Tufekci wrote, “What we are witnessing is the computational exploitation of a natural human desire: to look ‘behind the curtain,’ to dig deeper into something that engages us. … YouTube leads viewers down a rabbit hole of extremism, while Google racks up the ad sales.”7

Tufekci’s observations have been backed up by the work of researchers like Becca Lewis, Jonas Kaiser, and Yasodara Cordova. The latter two, both affiliates of Harvard’s Berkman Klein Center for Internet and Society, found that when users searched videos of children’s athletic events, YouTube often served them recommendations of videos of sexually themed content featuring young-looking (possibly underage) people with comments to match.8

After the New York Times reported on the problem in 2019, the company removed several of the videos, disabled comments on many videos of children, and made some tweaks to its recommendation system. But it has stopped short of turning off recommendations on videos of children altogether. Why did the company stop there? The New York Times’s Max Fisher and Amanda Taub summarized YouTube’s response: “The company said that because recommendations are the biggest traffic driver, removing them would hurt creators who rely on those clicks.”9 We can surmise that eliminating the recommendation feature would also compromise YouTube’s ability to keep users hooked on its platform, and thus capture and monetize their data.

If companies were required by law to meet baseline standards for transparency in algorithms like this one, policymakers and civil society advocates alike would be better positioned to push for changes that benefit the public interest. And if they were compelled to conduct due diligence on the negative effects of their products, platforms would likely make very different choices about how they use and develop algorithmic systems.

Citations

- Maréchal, Nathalie. 2019. “RDR Seeks Input on New Standards for Targeted Advertising and Human Rights.” Ranking Digital Rights; Brouillette, Amy. 2019. “RDR Seeks Feedback on Standards for Algorithms and Machine Learning, Adding New Companies.” Ranking Digital Rights. We identified three areas where companies should be much more transparent about their use of algorithmic systems: advertising policies and their enforcement, content-shaping algorithms, and automated enforcement of content rules for users’ organic content (also known as content moderation). Ranking Digital Rights. RDR Corporate Accountability Index: Draft Indicators. Transparency and Accountability Standards for Targeted Advertising and Algorithmic Decision-Making Systems. Washington D.C.: New America.

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

- Ochigame, Rodrigo. 2019. “The Invention of ‘Ethical AI’: How Big Tech Manipulates Academia to Avoid Regulation.” The Intercept; Vincent, James. 2019. “The Problem with AI Ethics.” The Verge.

- Facebook’s record in Myanmar, where it was found to have contributed to the genocide of the Rohingya minority, is a particularly egregious example. See Warofka, Alex. 2018. “An Independent Assessment of the Human Rights Impact of Facebook in Myanmar.” Facebook Newsroom.

- Vaidhyanathan, Siva. 2018. Antisocial Media: How Facebook Disconnects Us and Undermines Democracy. New York, NY: Oxford University Press; Gary, Jeff, and Ashkan Soltani. 2019. “First Things First: Online Advertising Practices and Their Effects on Platform Speech.” Knight First Amendment Institute.

- Lewis, Becca. 2020. “All of YouTube, Not Just the Algorithm, Is a Far-Right Propaganda Machine.” Medium.

- Tufekci, Zeynep. 2018. “YouTube, the Great Radicalizer.” The New York Times.

- Unpublished study by Kaiser, Cordova & Rauchfleisch, 2019. The authors have not published the study out of concern that the algorithmic manipulation techniques they describe could be replicated in order to exploit children.

- Fisher, Max, and Amanda Taub. 2019. “On YouTube’s Digital Playground, an Open Gate for Pedophiles.” The New York Times.

Who Gets Targeted, or Excluded, by Ad Systems?

Separate from the algorithms that shape the spread of user-generated content, advertising is the other arena in which policymakers must dig deeper and examine the engines that determine and drive distribution. Traditional advertising places ads based on context: everyone who walks by a billboard or flips through a magazine sees the same ad. Online targeted advertising, on the other hand, is personalized based on what advertisers and ad networks know (or think they know) about each person, based on the thick digital dossiers they compile about each of us.

In theory, advertisers on social media are responsible for determining which audience segments (as defined by their demographics or past behavior) will see a given ad, but in practice, platforms further optimize the audience beyond what the advertiser specified.1 Due to companies’ limited transparency efforts, we know very little about how this stage of further optimization works.

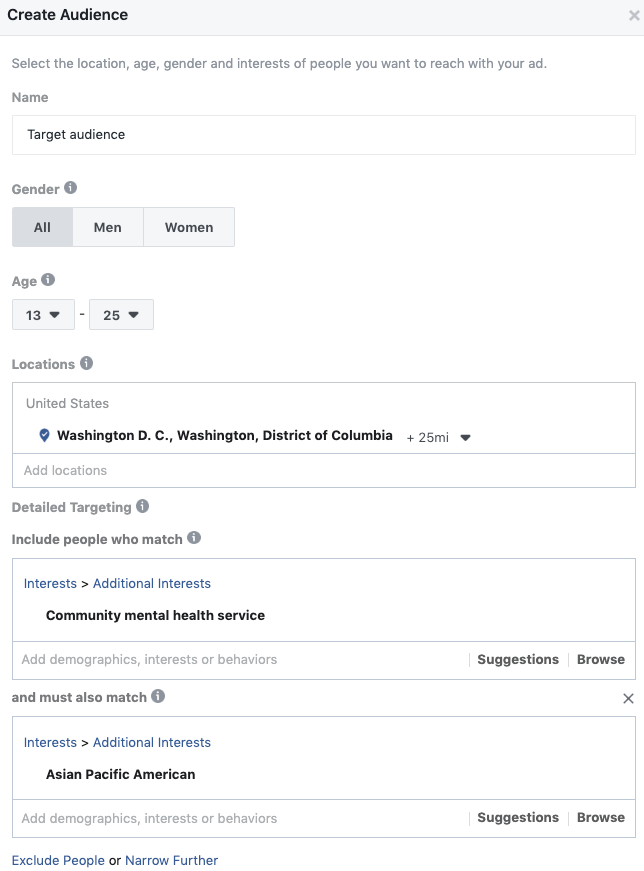

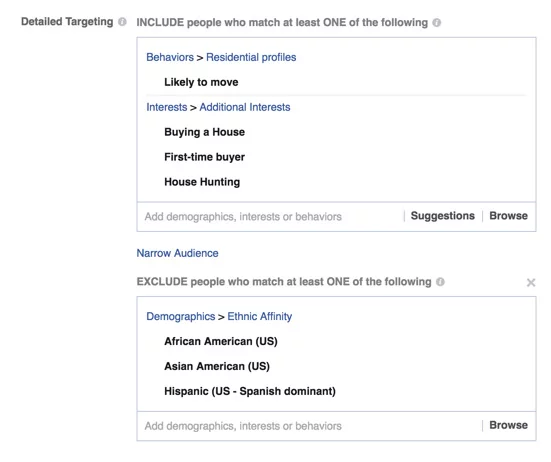

Nevertheless, from what we do know, these systems are already designed to discriminate: when you place an ad on a given platform, you are encouraged to select (from a list of options) different types of people you’d like the ad to reach. Such differentiation amounts to unfair and even illegal discrimination in many instances.2 One powerful example of these dynamics (and of companies’ reluctance to make changes at the systemic level) emerged from an investigation conducted by ProPublica, in which the media outlet attempted to test Facebook’s ad-targeting tools by purchasing a few ads, selecting targeted recipients for the ads, and observing the process by which they were vetted and approved.3

“The ad we purchased was targeted to Facebook members who were house hunting and excluded anyone with an ‘affinity’ for African-American, Asian-American or Hispanic people,” the reporters wrote. They explained that Facebook’s ad-sales interface allowed them to tick different boxes, selecting who would, and would not, see their ads.4

The ads were approved 15 minutes after they were submitted for review. Civil liberties experts consulted by ProPublica confirmed that the option of excluding people with an “affinity” for African-American, Asian-American, or Hispanic people was a clear violation of the U.S. Fair Housing Act, which prohibits real estate entities from discriminating against prospective renters or buyers on the basis of their race, ethnicity, or other identity traits.

After ProPublica wrote about and confronted Facebook on the issue, the company added an "advertiser education" section to its ad portal, letting advertisers know that such discrimination was illegal under U.S. law. It also began testing machine learning that would identify discriminatory ads for review. But the company preserved the key tool that allowed these users to be excluded in the first place: the detailed targeting criteria, pictured above, which allowed advertisers to target or exclude African-Americans and Hispanics.

Rather than addressing systemic questions around the social and legal consequences of this type of targeting system, Facebook focused on superficial remedies that left its business model untouched. In another investigation, ProPublica learned that these criteria are in fact generated not by Facebook employees, but by technical processes that comb the data of Facebook’s billions of users and then establish targeting categories based on users’ stated interests. In short, by an algorithm.5

In spite of the company’s apparent recognition of the problem, Facebook did not take away or even modify these capabilities until many months later, after multiple groups took up the issue and filed a class action lawsuit claiming that the company had violated the Fair Housing Act.6

Here again, a company-wide policy for using and developing algorithms combined with human rights due diligence would most likely have identified these risks ahead of time and helped Facebook develop its ad-targeting systems in a way that respects free expression, privacy, and civil rights laws like the Fair Housing Act. But the absence of such due diligence is the norm rather than the exception. Neither Facebook, Google, nor Twitter shows evidence that it conducts due diligence on its targeted advertising practices.7

Citations

- Ali, M., Sapiezynski, P., Bogen, M., Korolova, A., Mislove, A., & Rieke, A. (2019). Discrimination through Optimization: How Facebook's Ad Delivery Can Lead to Biased Outcomes. Proceedings of the ACM on Human-Computer Interaction, 3(CSCW), 1-30.

- Zuiderveen Borgesius, Frederik. 2018. Discrimination, Artificial Intelligence, and Algorithmic Decision-Making. Strasbourg: Council of Europe; Wachter, Sandra. 2019. Affinity Profiling and Discrimination by Association in Online Behavioural Advertising. Rochester, NY: Social Science Research Network. SSRN Scholarly Paper. Forthcoming in Berkeley Technology Law Journal, Vol. 35, No. 2, 2020.

- Angwin, Julia, and Terry Parris Jr. 2016. “Facebook Lets Advertisers Exclude Users by Race.” ProPublica.

- Angwin, Julia, and Terry Parris Jr. 2016. “Facebook Lets Advertisers Exclude Users by Race.” ProPublica.

- Angwin, Julia, and Madeleine Varner. 2017. “Facebook Enabled Advertisers to Reach ‘Jew Haters.’” ProPublica.

- In a March 2019 settlement, Facebook agreed to create a distinct advertising portal for housing, employment, and credit ads, as civil rights law prohibits discriminatory advertising in these areas. The company also committed to create a new “Housing Search Portal” allowing users to view all housing ads on the platform, regardless of whether the users are in the target audience selected by the advertiser.

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

When Ad Targeting Meets the 2020 Election

Discriminating in housing ads is against the law.1 Discriminating in campaign ads may not be against the law, but many Americans strongly believe that it is bad for democracy.2

The same targeted advertising systems that are used to target people based on their interests and affinities were used to manipulate voters in the 2016 and 2018 elections. Most egregiously, voters who were thought to lean toward Democratic candidates were targeted with ads containing incorrect information about how, when, and where to vote.3 Facebook, Google, and Twitter now prohibit this kind of disinformation, but we don’t know how effectively the rule is enforced: none of these companies publish information about their processes for enforcing advertising rules or about the outcomes of those processes.4

At the scale that these platforms operate, fact-checking ad content is hard enough when the facts in question are indisputable, such as the date of an upcoming election. It’s even thornier when subjective claims about an opponent’s character or details of their policy proposals are in play. Empowering private companies to evaluate truth would be dangerously undemocratic, as Facebook CEO Mark Zuckerberg has himself argued.5 In the absence of laws restricting the content or the targeting of campaign ads, campaigns can easily inundate voters with ads peddling misleading claims on issues they care about, in an effort to sway their votes.

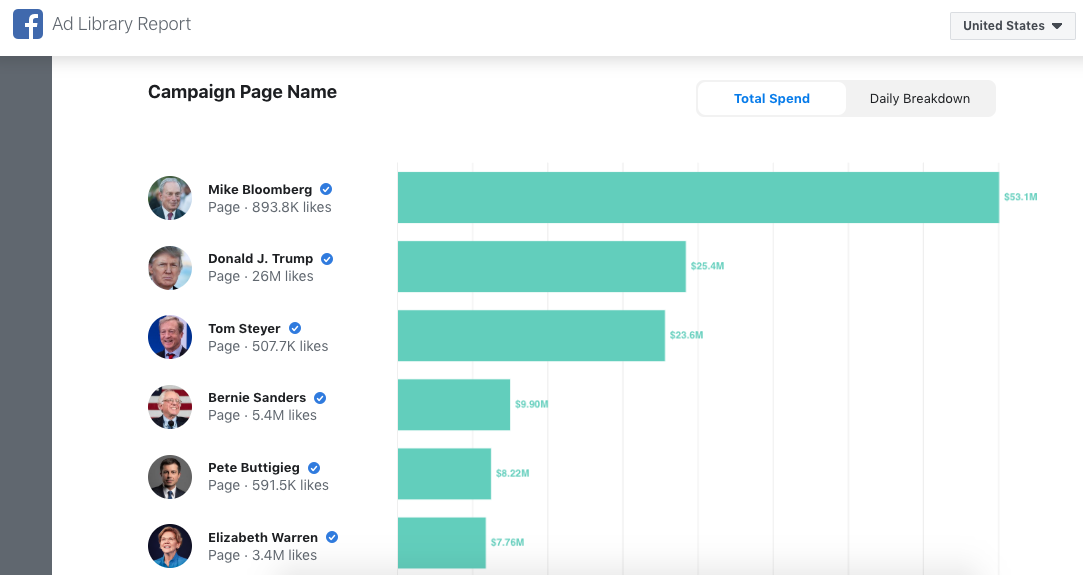

Barack Obama and Donald Trump both owe their presidencies to this type of targeting to an extent, though exactly to what extent is impossible to quantify. The 2008 Obama campaign pioneered the use of voters’ personal information, and his reelection team refined the practice in 2012. In 2016, thanks to invaluable guidance from Facebook itself, Trump ran “the single best digital ad campaign […] ever seen from any advertiser,” as Facebook executive Andrew “Boz” Bosworth put it in an internal memo.6 The president’s 2020 reelection campaign is on the same track.7 More than ever, elections have turned into marketing contests. This shift long predates social media companies, but targeted advertising and content-shaping algorithms have amplified the harms and made it harder to address them.

In the 2020 election cycle, we find ourselves in an online environment dominated by algorithms that appear ever-more powerful and effective at spreading content to precisely the people who will be most affected by it, thanks to continued advances in data tracking and analysis. Some campaigns are now using cell phone location data to identify churchgoers, Planned Parenthood patients, and similarly sensitive groups.8 Many of the risks we’ve articulated in unique examples thus far will be in play, and algorithms likely will multiply their effects for everyone who relies on social media for news and information.

We are entering a digital perfect storm fueled by deep political cleavages, opaque technological systems, and billions of dollars in campaign ad money that may prove disastrous for our democracy.

We need look no further than the bitter debates that played out around political advertising in the final months of 2019 to see just how high the stakes have become.

In October 2019, when Donald Trump’s reelection campaign purchased a Facebook ad claiming that “Joe Biden promised Ukraine $1 billion if they fired the prosecutor investigating his son’s company,” Facebook accepted it, enabling thousands of users to see (and perhaps believe) it. It didn’t matter that the claim was unfounded, and had been debunked by two of Facebook’s better-known fact-checking partners, PolitiFact and Factcheck.org.

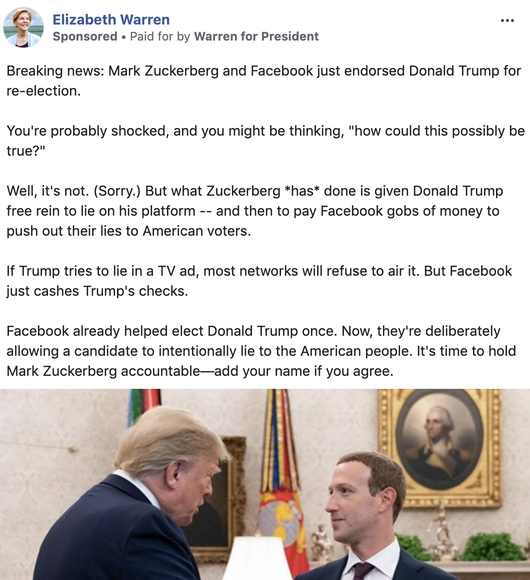

When Facebook decided to stand by this decision, and to let the ad stay up, Sen. Elizabeth Warren (D-Mass.), who was running for the Democratic nomination at the time, alongside Biden, ran a Facebook ad of her own, which made the intentionally false claim that “Mark Zuckerberg and Facebook just endorsed Donald Trump for re-election.”9

The ad was intended to draw attention to how easily politicians can spread misinformation on Facebook. Indeed, unlike print and broadcast ads, online political ads are completely unregulated in the United States: parties, campaigns, and outside groups are free to run any ads they want, if the platform or advertising network lets them. This gives companies like Google, Facebook, and Twitter tremendous power to set the rules by which political campaigns operate.

Soon after Warren’s attention-grabbing ad, the New York Times published a letter that had circulated internally at Facebook, and was signed by 250 staff members. The letter’s authors criticized Facebook’s refusal to fact-check political ads and tied the issue to ad targeting, arguing that it “allows politicians to weaponize our platform by targeting people who believe that content posted by political figures is trustworthy” and could “cause harm in coming elections around the world.”10

Among other demands, the authors urged Facebook to restrict targeting for political ads. But the company did not relent, reportedly at the insistence of board member Peter Thiel.11 The only subsequent change that Facebook has made to this system is to allow users to opt out of custom audiences, a tool that allows advertisers to upload lists of specific individuals to target.12

In response to increased public scrutiny around political advertising, the other major U.S. platforms also moved to tweak their own approaches to political ads. Twitter, which only earned $3 million from political ads during the 2018 U.S. midterms,13 announced in October 2019 that it would no longer accept political ads,14 and would restrict how “issue-based” ads (which argue for or against a policy position without naming specific candidates) can be targeted.15 Google elected to limit audience targeting for election ads to age, gender, and zip code, though it remains unclear precisely what kind of algorithm will be able to correctly identify (and then disable targeting for) election ads. None of the companies have given any indication that they conducted a human rights impact assessment or other due diligence prior to announcing these changes.16

We might read Twitter’s and Google’s decisions as an acknowledgment that the algorithms underlying the distribution of targeted ads are in fact a major driver of the kinds of disinformation campaigns and platform weaponization that can so powerfully affect our democracy. However, the companies’ insistence on drawing unenforceable lines around “political ads,” “issue ads,” and “election ads” highlights how central targeting is to their business models. Facebook’s decision regarding custom audiences signals the same thing: as long as users are included in custom audiences by default, the change will have limited effects.

Ad targeting is just the beginning of such influence campaigns. As a Democratic political operative told the New York Times, “the real goal of paid advertising is for the content to become organic social media.”17 Once a user boosts an ad by sharing it as their own post, the platform’s content-shaping algorithms treat it as normal user content and highlight it to people in the user’s network who are more likely to click, like, and otherwise engage with it, allowing campaigns to reach audiences well beyond the targeted segments.
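
The handoff from paid targeting to organic distribution can be sketched in a few lines. This is a toy model, not any platform’s actual ranking system: the `Friend` record, the `predicted_engagement` score, and the `top_k` cutoff are hypothetical names and values chosen for illustration. It shows how, once an ad is reshared as an ordinary post, an engagement-ranked feed can deliver it to people who were never in the advertiser’s chosen audience.

```python
from dataclasses import dataclass


@dataclass
class Friend:
    name: str
    in_ad_audience: bool          # was this person in the advertiser's targeted segment?
    predicted_engagement: float   # hypothetical score between 0 and 1


def feed_recipients(friends, top_k):
    """Pick the sharer's friends most likely to engage with the reshared post.

    Note that the ad's original targeting plays no role here: the post is
    ranked like any other piece of user content.
    """
    ranked = sorted(friends, key=lambda f: f.predicted_engagement, reverse=True)
    return ranked[:top_k]


# The network of a user who reshared a campaign ad as their own post.
friends = [
    Friend("ana", in_ad_audience=True, predicted_engagement=0.20),
    Friend("ben", in_ad_audience=False, predicted_engagement=0.90),
    Friend("cho", in_ad_audience=False, predicted_engagement=0.75),
    Friend("dee", in_ad_audience=True, predicted_engagement=0.60),
    Friend("eli", in_ad_audience=False, predicted_engagement=0.10),
]

recipients = feed_recipients(friends, top_k=3)

# People reached organically who were never part of the paid audience.
outside_target = [f.name for f in recipients if not f.in_ad_audience]
```

In this example two of the three people shown the reshared post fall outside the paid audience, reach that an ad library recording only spend and targeting parameters would not capture.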

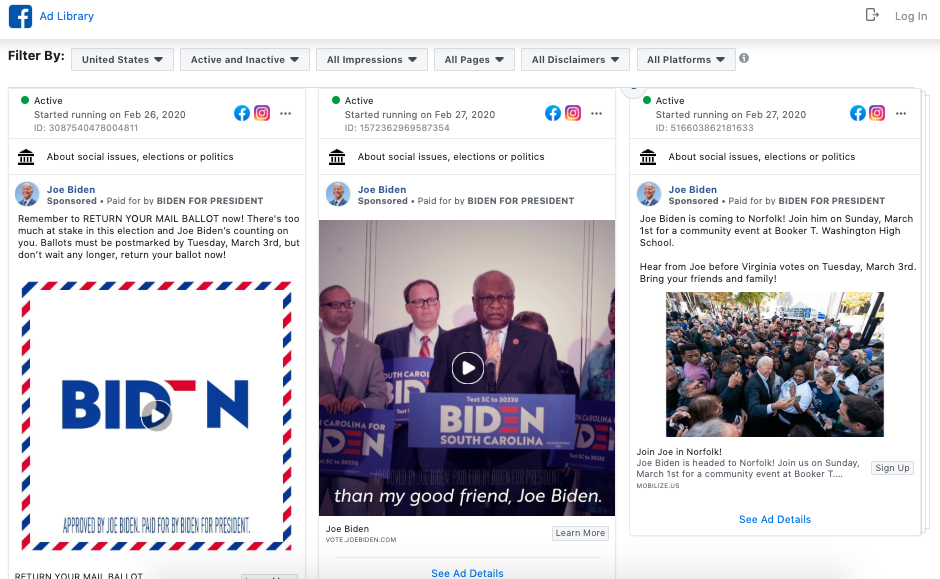

The major platforms’ newly introduced ad libraries typically allow the public to find out who paid for an ad, how much they spent, and some targeting parameters. They shed some light on targeted campaigns themselves. But it is impossible to know how far messages travel without meaningful algorithmic transparency.18 All we know is that between ad-targeting and content-shaping algorithms, political campaigns are dedicating more resources to mastering the dark arts of data science.

We have described the nature and knock-on effects of two general types of algorithms. The first drives the distribution of content across a company’s platform. The second seeks to identify and eliminate specific types of content that have been deemed harmful, either by the law, or by the company itself. But we have only been able to scratch the surface of how these systems really operate, precisely because we cannot see them. To date, we only see evidence of their effects when we look at patterns of how certain kinds of content circulate online.

Although the 2020 election cycle is already in full swing, we are only beginning to understand just how powerful these systems can be in shaping our information environments, and in turn, our political reality.

Citations

- Fair Housing Act. 42 U.S.C. ┬¦ 3604(c).

- Anderson, Janna, and Lee Rainie. 2020. ŌĆ£Many Tech Experts Say Digital Disruption Will Hurt Democracy.ŌĆØ Pew Research Center: Internet, Science & Tech. ; McCarthy, Justin. 2020. In U.S., Most Oppose Micro-Targeting in Online Political Ads. Knight Foundation.

- This capability was used for voter suppression in both 2016 and 2018. The major platforms now prohibit ads that ŌĆ£are designed to deter or prevent people from voting,ŌĆØ but it is not at all clear how they will detect violations. See Hsu, Tiffany. 2018. ŌĆ£Voter Suppression and Racial Targeting: In FacebookŌĆÖs and TwitterŌĆÖs Words.ŌĆØ The New York Times. ; Leinwand, Jessica. 2018. ŌĆ£Expanding Our Policies on Voter Suppression.ŌĆØ Facebook Newsroom.

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems ŌĆō Pilot Study and Lessons Learned. Washington D.C.: ╣·▓·╩ėŲĄ.

- Zuckerberg, Mark. 2019. ŌĆ£Zuckerberg: Standing For Voice and Free Expression.ŌĆØ Speech at Georgetown University, Washington D.C.

- Roose, Kevin, Sheera Frenkel, and Mike Isaac. 2020. ŌĆ£DonŌĆÖt Tilt Scales Against Trump, Facebook Executive Warns.ŌĆØ The New York Times.

- Wong, Julia Carrie. 2020. ŌĆ£One Year inside ╣·▓·╩ėŲĄ Monumental Facebook Campaign.ŌĆØ The Guardian.

- Edsall, Thomas B. 2020. ŌĆ£╣·▓·╩ėŲĄ Digital Advantage Is Freaking Out Democratic Strategists.ŌĆØ The New York Times.

- The ad continued: ŌĆ£if Trump tries to lie in a TV ad, most networks will refuse to air it. But Facebook just cashes ╣·▓·╩ėŲĄ checks. Facebook already helped elect Donald Trump once. Now, theyŌĆÖre deliberately allowing a candidate to intentionally lie to the American people. ItŌĆÖs time to hold Mark Zuckerberg accountable ŌĆō add your name if you agree.ŌĆØ Epstein, Kayla. 2019. ŌĆ£Elizabeth WarrenŌĆÖs Facebook Ad Proves the Social Media Giant Still Has a Politics Problem.ŌĆØ Washington Post.

- Isaac, Mike. 2019. ŌĆ£Dissent Erupts at Facebook Over Hands-Off Stance on Political Ads.ŌĆØ The New York Times. ; The New York Times. 2019. ŌĆ£Read the Letter Facebook Employees Sent to Mark Zuckerberg ╣·▓·╩ėŲĄ Political Ads.ŌĆØ The New York Times.

- Glazer, Emily, Deepa Seetharaman, and Jeff Horwitz. 2019. ŌĆ£Peter Thiel at Center of FacebookŌĆÖs Internal Divisions on Politics.ŌĆØ Wall Street Journal.

- Barrett, Bridget, Daniel Kreiss, Ashley Fox, and Tori Ekstrand. 2019. Political Advertising on Platforms on the United States: A Brief Primer. Chapel Hill: University of North Carolina.

- Kovach, Steve. 2019. ŌĆ£Mark Zuckerberg vs. Jack Dorsey Is the Most Interesting Battle in Silicon Valley.ŌĆØ CNBC.

- Defined as ŌĆ£content that references a candidate, political party, elected or appointed government official, election, referendum, ballot measure, legislation, regulation, directive, or judicial outcome.ŌĆØ In the U.S., this applies to independent expenditure groups like PACs, Super PACs, and 501(c)(4) organizations.

- Its policies prohibit advertisers from using zip codes or keywords and audience categories related to politics (like “conservative” or “liberal,” or presumed interest in a specific candidate). Instead, issue ads can be targeted at the state, province, or regional level, or using keywords and audience categories that are unrelated to politics. See Barrett, Bridget, Daniel Kreiss, Ashley Fox, and Tori Ekstrand. 2019. Political Advertising on Platforms in the United States: A Brief Primer. Chapel Hill: University of North Carolina.

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

- Edsall, Thomas B. 2020. “Trump’s Digital Advantage Is Freaking Out Democratic Strategists.” The New York Times.

- Rosenberg, Matthew. 2019. “Ad Tool Facebook Built to Fight Disinformation Doesn’t Work as Advertised.” The New York Times.

Regulatory Challenges: A Free Speech Problem, and a Tech Problem

Until now, Congress has largely put its faith in companies’ ability to self-regulate. But this has clearly not worked. We have reached a tipping point: a moment in which protecting tech companies’ ability to build and innovate unfettered might actually be putting our democracy at grave risk. We have come to realize that we have a serious problem on our hands, and that the government must step in with regulation. But what should this regulation look like?

There is no clear or comprehensive solution to these problems right now. But we know that we need more information, likely through government-mandated transparency and impact-assessment requirements, in order to assess the potential for damage and propose viable solutions. It is also clear that we need a strong federal privacy law. These recommendations will be explored in greater depth in the second part of this report series.

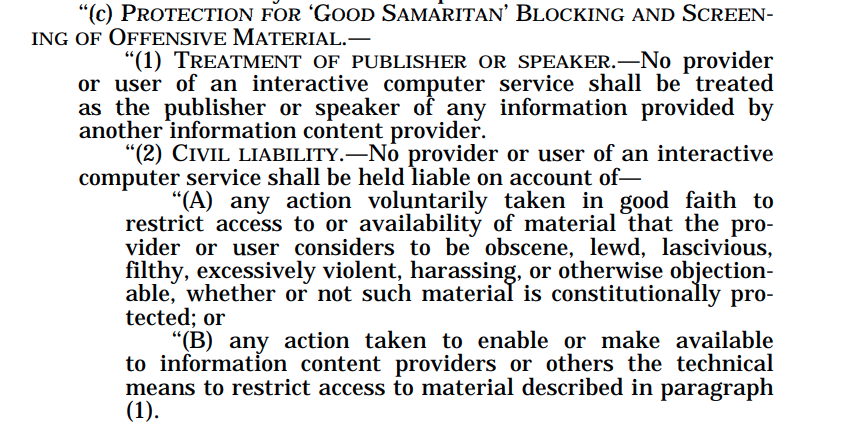

Members of Congress are understandably eager to hold tech platforms accountable for the harms they enable, but should resist the temptation of quick-fix solutions: not all types of regulation will actually solve the problems of disinformation and violent extremism online without also seriously corroding democracy. We urge policymakers to refrain from instituting a broad intermediary liability regime, such as by revoking or dramatically revising Section 230 of the 1996 Communications Decency Act (CDA). Section 230 provides that companies cannot be held liable for the content that their users post on their platforms, within the bounds of U.S. law. It also protects companies’ ability to develop their own methods for identifying and removing content. Without this protection, companies that moderate their users’ content would be held liable for damages caused by any content that they failed to remove, creating strong incentives for companies to censor users’ posts. Instead, Section 230 allows companies to do their best to govern the content on their platforms through their terms of service and community standards.

Experts in media and technology policy are all but unanimous that eliminating CDA 230 would be disastrous for free speech, both domestically and globally.1 Perfect enforcement is impossible, and holding companies liable for failing to do the impossible will only lead to over-censorship.

Foreign governments are not constrained by the U.S. First Amendment and have the power to regulate speech on internet platforms more directly. This is already happening in various jurisdictions, including Germany, where the 2018 NetzDG law requires social media companies to swiftly remove illegal speech, with a specific focus on hate speech and hate crimes, or pay a fine.2 While this may reduce illegal online speech, it bypasses important measures of due process, delegating judicial authority (normally reserved for judges) to private companies. It also incentivizes them to err on the side of censorship rather than risk paying fines.3

Another example comes with the anti-terrorist content regulation currently pending before the European Commission4 that would, among other things, require companies to institute upload filters. These still-hypothetical algorithmic systems would theoretically be able to evaluate not only the content of an image or video but also its context, the user’s intent in posting it, and the competing arguments for removing the content versus leaving it up. Automated tools may be able to detect an image depicting a terrorist atrocity, but they cannot recognize or judge the context or deeper significance of a piece of content. For that, human expertise and judgment are needed.5

A desire to see rapid and dramatic reduction in disinformation, hate speech, and violent extremism leads to a natural impulse to mandate outcomes. But technology simply cannot achieve these results without inflicting unacceptable levels of collateral damage to human rights and civil liberties.

Citations

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

- Germany’s Netzwerkdurchsetzungsgesetz (“Network Enforcement Act”) went into effect in 2018.

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

- European Parliament. 2019. “Legislative Train Schedule.”

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

So What Should Companies Do?

What would a better framework, one that puts democracy, civil liberties, and human rights above corporate profits, look like?

It is worth noting that Section 230 protects companies from liability for “any action voluntarily taken in good faith” to enforce their rules. But Section 230 doesn’t provide any guidance as to what “good faith” actually means for how companies should govern their platforms.1 Some legal scholars have proposed reforms that would keep Section 230 in place but would clarify the steps that companies need to take to demonstrate good-faith efforts to mitigate harm, and thus to remain exempt from liability.2

Companies hoping to convince lawmakers not to abolish or drastically change Section 230 would be well advised to proactively and voluntarily implement a number of policies and practices to increase transparency and accountability. This would help to mitigate real harms that users or communities can experience when social media is used by malicious or powerful actors to violate their rights.

First, companies’ speech rules must be clearly explained and consistent with established human rights standards for freedom of expression. Second, these rules must be enforced fairly according to a transparent process. Third, people whose speech is restricted must have an opportunity to appeal. And finally, the company must regularly publish transparency reports with detailed information about the steps that the company takes to enforce its rules.3

Since 2015, RDR has encouraged internet and telecommunications companies to publish basic disclosures about their policies and practices that affect their users’ rights. Our annual RDR Corporate Accountability Index benchmarks major global companies against each other and against standards grounded in international human rights law.

Much of our work measures companies’ transparency about the policies and processes that shape users’ experiences on their platforms. We have found that, absent a regulatory agency empowered to verify that companies are conducting due diligence and acting on it, transparency is the best accountability tool at our disposal. Once companies are on the record describing their policies and practices, journalists and researchers can investigate whether they are actually telling the truth.

Transparency allows journalists, researchers, and the public and their elected representatives to make better informed decisions about the content they receive and to hold companies accountable.

We believe that platforms have the responsibility to set and enforce the ground rules for user-generated and ad content on their services. These rules should be grounded in international human rights law, which provides a framework for balancing the competing rights and interests of the various parties involved.4 Operating in a manner consistent with international human rights law will also strengthen the United States’ long-standing bipartisan policy of promoting a free and open global internet.

But again, content is only part of the equation. Companies must take steps to publicly disclose the different technological systems at play: the content-shaping algorithms that determine what user-generated content users see, and the ad-targeting systems that determine who can pay to influence them. Specifically, companies should explain the purpose of their content-shaping algorithms and the variables that influence them so that users can understand the forces that cause certain kinds of content to proliferate, and other kinds to disappear.5 Currently, companies are not transparent or accountable for how their targeted-advertising policies and practices and their use of automation shape the online public sphere by determining the content and information that internet users receive.6

Companies also need to publish their rules for ad targeting, and be held accountable for enforcing those rules. Our research shows that while Facebook, Google, and Twitter all publish ad-targeting rules that list broad audience categories that advertisers are prohibited from using, the categories themselves can be excessively vague. Twitter, for instance, bans advertisers from using audience categories “that we consider sensitive or are prohibited by law, such as race, religion, politics, sex life, or health.”7 Nor do these platforms disclose any data about the number of ads they removed for violating their ad-targeting rules (or other actions they took).8

Facebook says that advertisers can target ads to custom audiences, but prohibits them from using targeting options “to discriminate against, harass, provoke, or disparage users or to engage in predatory advertising practices.” However, not everyone can see what these custom audience options are, since these are only available to Facebook users. And Facebook publishes no data about the number of ads removed for breaching ad-targeting rules.

Platforms should set and publish rules for targeting parameters, which should apply equally to all ads. A practice like this would make it much more difficult for companies to violate anti-discrimination laws like the Fair Housing Act. Moreover, once an advertiser has chosen their targeting parameters, companies should refrain from further optimizing ads for distribution, as this may lead to further discrimination.9

Platforms should not differentiate between commercial, political, and issue ads, for the simple reason that drawing such lines fairly, consistently, and at a global scale is impossible and complicates the issue of targeting.

Limiting targeting, as Federal Elections Commissioner Ellen Weintraub has argued,10 is a much better approach, though here again, the same rules should apply for all types of ads. Eliminating targeting practices that exploit individual internet users’ characteristics (real or assumed) would protect privacy, reduce filter bubbles, and make it harder for political advertisers to send different messages to different constituent groups. This is the kind of reform that will be addressed in the second part of this report series.

In addition, companies should conduct due diligence through human rights impact assessments on all aspects of their rules: what they are, how they are enforced, and what steps the company takes to prevent violations of users’ rights. This process forces companies to anticipate worst-case scenarios and change their plans accordingly, rather than simply rolling out new products or entering new markets and hoping for the best.11 A robust practice like this could reduce or eliminate some of the phenomena described above, ranging from the proliferation of election-related disinformation to YouTube’s tendency to recommend extreme content to unsuspecting users.

All systems are prone to error, and content moderation processes are no exception. Platform users should have access to timely and fair appeals processes to contest a platform’s decision to remove or restrict their content. While the details of individual enforcement actions should be kept private, transparency reporting provides essential insight into how the company is addressing the challenges of the day. Facebook, Google, Microsoft, and Twitter have finally started to publish such reports,12 though their disclosures could be much more specific and comprehensive.13 Notably, they should include data about the enforcement of ad content and targeting rules.

Our complete transparency and accountability standards can be found on our website. Key transparency recommendations for content shaping and content moderation are presented in the next section.

Citations

- Kosseff, Jeff. 2019. The Twenty-Six Words That Created the Internet. Ithaca: Cornell University Press.

- Citron, Danielle Keats, and Benjamin Wittes. 2017. “The Internet Will Not Break: Denying Bad Samaritans §230 Immunity.” Fordham Law Review 86(2): 401–23.

- See also Pírková, Eliška, and Javier Pallero. 2020. 26 Recommendations on Content Governance: A Guide for Lawmakers, Regulators, and Company Policy Makers. Access Now.

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.