Sydney Saubestre

Senior Policy Analyst, Open Technology Institute, New America

Imagine having every tool and dataset needed to turn good intentions into measurable impact and solve complex policy challenges, only to find that they can’t connect or work together. Many state agencies face this challenge. Datasets sit isolated, technical standards diverge, and legal or procedural silos prevent meaningful integration. Without shared access to the data, insights can’t flow, programs can’t scale, and potential impact is left untapped.

This is a particular problem for state higher education data. Unlike K–12 schools, which are largely state-run, higher education involves public and private universities, community colleges, workforce programs, and state agencies. Without linked systems, tracking students across these diverse institutions is extremely difficult. Disconnected systems cannot inform programs that would improve student outcomes, optimize public spending, and guide institutions toward greater equity.

Rather than treating their systems as a collection of isolated parts, agencies can instead approach them as building blocks, with each database designed to fit, stack, and connect to a large structure through shared formats and connective points. This concept, known as interoperability, is the foundation for building systems that can work together effectively.

There’s more to this than just making data sharing a priority: true interoperability requires establishing common standards and designing infrastructure so that different databases, tools, and applications can consistently recognize, trust, and interpret one another. It requires structuring data fields and metadata that align across systems and agencies. Most critically, it means viewing data and systems not as a collection of isolated parts, but as a cohesive blueprint that is continually refined.

This brief delineates the challenges and opportunities specific to education data interoperability, though many issues affecting one sector or agency often extend to others. While data is critical to most good policymaking, higher education outcomes (such as graduation, employment, and wage growth) unfold over years or even decades. Disconnected systems make longitudinal tracking almost impossible, undermining policy, funding, and equity analysis, particularly for students who have historically faced barriers. When data flows efficiently and responsibly, policymakers and educators gain a clearer, more comprehensive understanding of who is succeeding, who needs additional resources, and where systemic gaps persist. This understanding empowers targeted interventions that promote fairness, opportunity, and success for all students.

States and institutions are being asked to answer urgent, complex questions: Are public investments translating into real student outcomes? Who is making it to graduation, and who is leaving school without a degree? What happens to students after their studies, and how does that vary by race, income, or geography? The answers can’t be found in a single dataset, or even by a single agency. To fully address them, we need systems that can securely connect education data to workforce, social service, and financial aid data.

Many of our current systems were created before the digital era and weren’t built to support cross-agency interoperability. They were designed to meet internal reporting requirements, not to answer collective questions or adapt to shifting policy demands and technological innovation.

This brief is a guide for how agencies can change that, beginning with readiness. True interoperability doesn’t start with code; it starts with aligning leadership, legal frameworks, technical infrastructure, and a collective understanding of what good data sharing entails. In addition to addressing common barriers, priorities for ensuring readiness, and practical steps to start the process, this brief also introduces a diagnostic tool agencies can use to evaluate their own interoperability.

When done well, interoperability isn’t just efficient; it’s transformative. The goal isn’t to merely replicate datasets and systems or to connect them without a clear purpose. It’s to ensure they fit together in a way that’s useful and sustainable.

Interoperability means the ability to securely and meaningfully exchange data across systems without requiring custom workarounds or constant translation. As , a K–12 interoperability initiative, puts it, interoperability is the “seamless, secure, and controlled exchange of data between applications.” According to the United Nations’ , interoperability also entails the ability to access, process, and integrate data “without losing meaning.” To put it succinctly, interoperability makes data useful beyond its original context.

In practical terms, interoperability means that different databases are either technically connected to each other or structured in analogous ways. For example, while a post-graduate survey can generate some insights into alumni careers, linking student data to workforce outcomes ensures a much larger sample size, allowing the state to better surface disparities and identify necessary changes. Interoperability can also mean ensuring common data formats or standards that align definitions and metadata, enabling different systems to automatically exchange information without manual intervention. For instance, across all levels of education allow K–12 and postsecondary systems to recognize the same course or subject across schools, enabling student progress and achievements to be compared and analyzed consistently. The applications of interoperability are endless: Shared analysis, service delivery, and program evaluation all depend on making sense of fragmented data.

Agencies and institutions want to make better use of their data. But interoperability can’t be built overnight, and they face real barriers to connecting their data in usable ways. These barriers are more than technical: They’re also institutional, legal, and cultural. Often, they’re hiding in plain sight:

These barriers are real, but agencies can effectively navigate them once they develop high interoperability readiness: that is, the degree to which an organization, agency, or system is prepared to integrate, exchange, and use data across multiple systems securely and consistently.

Defining what “ready” looks like will provide teams with a clear mandate to work across boundaries. The process is more than a technical checklist; it’s a commitment to engaging with the people, policies, and infrastructure that make connection possible.

Prepared agencies and institutions ask questions like:

These questions are complex, but agencies that answer them are more likely to have systems that communicate seamlessly, decisions that reflect the whole picture, and data that works as hard as the people behind it.

A data system is only as strong as its foundation. While you can retrofit a system to be more interoperable, a system that builds interoperability in from the start will be stronger, as long as the fundamentals are correct.

Think of data systems like buildings on a city block. Each building may look different on the outside, designed for its own purpose, but behind the facades, they all rely on the same underlying infrastructure. The plumbing, electricity, and internet system in each building links together across the entire block, feeding back into centralized systems. This is the kind of cooperative infrastructure that interoperability requires. Systems don’t need to be identical or replicate each other’s designs, but they do need to be designed with the greater context in mind.

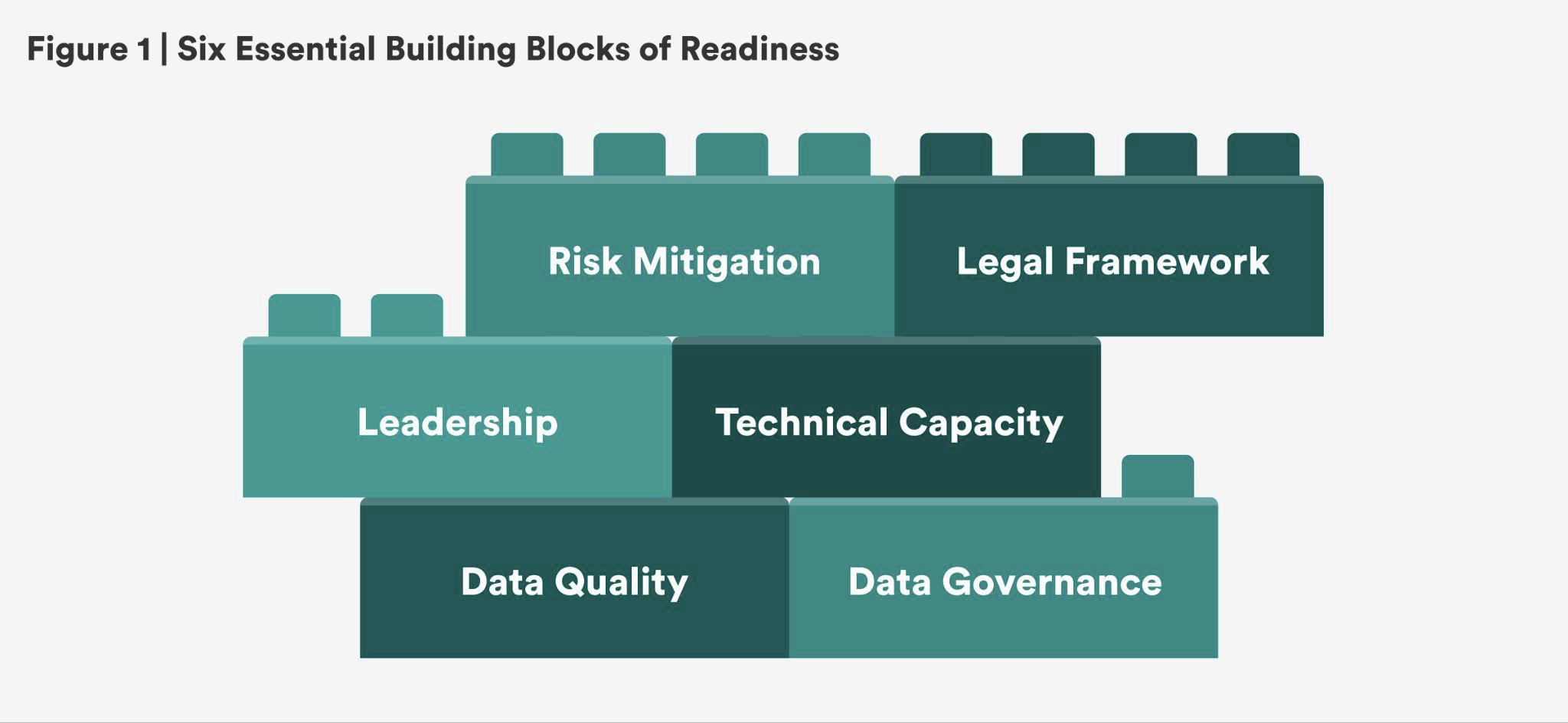

This brief outlines six essential “building blocks” of interoperability. These key characteristics were identified through a review of interoperability frameworks from multiple sectors (including , , , and ) before focusing on those most relevant to education. The building blocks draw from commonly cited frameworks, including the “ Rubric,” Diagnosis and Recommendations to Integrate Administrative Records Related to Children, Data Ready Playbook, and the “Interoperability Maturity Model” from the .

These organizations underscore the importance of: (1) data quality, (2) data governance, (3) grounded leadership, (4) technical capacity, (5) legal frameworks, and (6) risk management.

These core components make any data system capable of flexing, connecting, and growing across agencies and contexts. They’re the shared foundation that lets agencies align systems without erasing differences, designing not for uniformity, but for compatibility. Adopting these building blocks intentionally is more important than adopting them perfectly.

This brief includes a diagnostic tool to help agencies and institutions take stock of their progress toward interoperability readiness, spot potential obstacles, and map a path forward.

Interoperability only works if the underlying data is reliable. Incomplete submissions, mismatched timelines, inconsistent survey instruments, missing metadata, and basic entry errors can all undermine confidence in shared data. Without reliable, accurate, and well-structured data, leaders risk arriving at misleading or meaningless conclusions. Quality isn’t a one-time achievement; it’s a continuous process of cleaning, removing duplication, validating, and documenting. Tools like , entry-level , and automated improve trust in both generated and shared data. Agencies also benefit from tracking simple metrics, such as the percentage of missing or unmatchable records, to monitor quality over time. That said, the best defense is context: people who know the data well enough to spot issues before they become problems.

How to implement: Start by assessing whether your current datasets actually reflect the questions they are trying to answer. For example, are they reliable indicators of student experiences and outcomes? Prioritize clarity: Inventory what’s in use, identify what’s immovable, and consider how the data was collected. Pay special attention to manual processes, which are often the source of inconsistency. Updating metadata as part of this process also improves interpretability and long-term usability. These initial steps help identify where quality might break down in fragmented data systems. Once that’s accomplished, data users can normalize and data where needed, such as aligning inconsistent grading scales or formatting addresses to match across districts.
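As one illustration of aligning inconsistent grading scales, the sketch below maps letter, percent, and four-point grades onto a single 0 to 4 scale. The crosswalk values, the scale labels, and the linear percent conversion are assumptions made for the example; an agency would substitute whatever definitions its partners have actually agreed on.

```python
# Hypothetical crosswalk from letter grades to a 4-point scale (an assumption,
# not a published standard).
LETTER_TO_POINTS = {"A": 4.0, "B": 3.0, "C": 2.0, "D": 1.0, "F": 0.0}


def normalize_grade(raw, scale):
    """Return the grade on a 0-4 scale, or None if it cannot be interpreted.

    `scale` names how the submitting system records grades:
    "letter", "percent", or "four_point" (labels invented for this sketch).
    """
    if scale == "letter":
        return LETTER_TO_POINTS.get(str(raw).strip().upper())
    try:
        value = float(raw)
    except (TypeError, ValueError):
        return None  # unreadable entries are left for human review
    if scale == "four_point" and 0.0 <= value <= 4.0:
        return value
    if scale == "percent" and 0.0 <= value <= 100.0:
        # Assumed linear conversion: 100 percent corresponds to 4.0.
        return round(value / 25.0, 2)
    return None
```

Returning `None` for anything unreadable, rather than guessing, keeps questionable entries visible to the people who know the data best.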

Case study: The draws data from six agencies and institutions to evaluate students’ career and college readiness. Early challenges with missing and inconsistent submissions led to the adoption of statewide and a , which ensures agencies’ data is chronologically comparable and checked for matching issues before publication. These tools aligned timing, clarified expectations, and set a threshold where review is triggered if more than 10 percent of any variable is missing. Together, they’ve supported a decade of productive collaboration and credible recommendations.
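A threshold rule like the one in this case study can be approximated in a few lines. The sketch below is not the case study's actual tooling: the record layout, field names, and the decision to treat `None` and empty strings as missing are all assumptions for illustration.

```python
# Review is triggered when more than 10 percent of any variable is missing,
# mirroring the threshold described in the case study.
MISSING_THRESHOLD = 0.10


def missing_rates(records, fields):
    """Return the share of records in which each field is missing."""
    total = len(records)
    rates = {}
    for field in fields:
        # Assumption: None and empty strings both count as missing.
        missing = sum(1 for r in records if r.get(field) in (None, ""))
        rates[field] = missing / total if total else 0.0
    return rates


def fields_needing_review(records, fields, threshold=MISSING_THRESHOLD):
    """List the fields whose missing rate exceeds the threshold."""
    rates = missing_rates(records, fields)
    return sorted(f for f, rate in rates.items() if rate > threshold)
```

Tracking the output of `missing_rates` over successive submissions is one simple way to monitor quality over time.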

Good data governance turns scattered inputs into usable systems that preserve, secure, and transmit data throughout their lifecycle, from collection to access to archiving. Defining the policies, processes, and roles that govern data answers practical questions like: Who updates the spreadsheet? Where do survey results live? Can two datasets be linked over time? For this reason, many agencies establish a data governance body, policy, or manual that covers topics such as data collection, encryption, and the linkage of data systems. Without governance, agencies risk confusion, security breaches, or tech investments that don’t meet long-term needs.

How to implement: Start by designating data stewards: people responsible for managing specific datasets and ensuring they’re accurate, protected, and shareable. As a guiding principle, these assignees should have intimate knowledge of how various data is collected and interpreted. Their first job should be to audit current practices and align them with relevant standards. From there, they can develop clear protocols for how data is gathered, stored, migrated, protected, and linked. These stewards should also produce guidance or training to help partners comply. As systems evolve, they’ll need to manage vendor contracts and clarify who has the right to access or modify data. Data governance should be focused on long-term outcomes, not just short-term functionality.

Case study: The Washington Office of Superintendent of Public Instruction (OSPI) has developed a robust, comprehensive governance manual. The manual outlines roles across local, state, and federal levels; lays out questions to ask before adding or eliminating a data element; and provides frameworks for linking K–12 and postsecondary data. When the state needed new data on dual language programs, OSPI already had the protocols in place, including a template for a project timeline and a standardized way of collecting student and teacher data. The result: a timely, coordinated effort that provided actionable data without the need to start from scratch in support of Washington’s widespread .

Interoperability is often treated as a technical endeavor, but at its core, it is a that depends on strong leadership and vision. Lasting progress requires champions who understand the landscape, bridge agencies, and sustain momentum across political cycles. Too many initiatives start with one passionate administrator, only to stall after that person moves on. To endure, interoperability efforts need shared vision, cross-agency coordination, a range of invested stakeholders, and governance structures that outlast individuals. Whether it’s a governor-appointed board, a multi-agency task force, or a working group of district representatives and IT staff, leaders must connect the dots between vision, strategy, and operations. Most critically, they are the people who can articulate the “why” and bring others along.

How to implement: Leaders should start by aligning stakeholders on a clear purpose: a shared perspective on interoperability, core questions to explore in data, and a timeline. What are we trying to answer with this data? Who benefits? What can we feasibly achieve given our environment? From there, they can coordinate with policy and funding bodies, shepherd interagency agreements or legislation, and ensure interoperability is baked into long-term strategic and budget plans. Crucially, strong leaders also make sure that costs and benefits are distributed fairly across participants, especially when resources are uneven.

Case study: California’s brings together 15 stakeholders through a 21-person governing board that includes higher education administrators, state social service leaders, elected officials, nonprofit research professionals, governor and state appointees, and critically, members of the public. The board is tasked with strategic planning, reviewing governance protocols, and elevating community priorities. A key of the initiative has been the cultivation of data champions who have successfully communicated the importance of data to the public. For example, since 2022, the governing board meetings have been shared on YouTube in both English and Spanish. This cross-cutting and intentional structure, along with its focus on continuity, has been credited with the initiative’s staying power.

Once leadership and governance are in place, agencies need the technical capacity to connect systems. That includes infrastructure (like student information or learning management systems), integration tools (like application programming interfaces, or APIs), and adherence to shared data standards (like the Department of Education’s Common Education Data Standards, or CEDS). But capacity isn’t just about using the right software; it’s also about who can maintain, troubleshoot, and evolve systems over time.

How to implement: Begin by inventorying the tools currently in use: what they cost, how well they work, and whether they meet program needs. Many agencies are managing thousands of technology tools, often with overlapping or underused features. One nationwide survey found used an average of in the 2023–2024 academic year, up from 841 in 2018. Clarifying which ones are essential helps reduce bloat, align systems, and map gaps. From there, develop procurement practices that prioritize interoperability, including specs for secure login and scalability. Professional associations like CoSN also stress the importance of tools that protect verified access to platforms through multi-factor authentication (MFA), single sign-on (SSO), and levels of authorized access. As systems evolve, ensure that at pace and that staff are equipped to manage them.

Case study: The New Jersey Education to Earnings Data System (NJEEDS) sparks insights based on tech from the Coleridge Initiative, whose provides a cloud-based dashboard for the state to manage data stewards’ profiles, datasets from four state agencies, and project details, along with tools for queries and statistical analysis.

Thanks to this scaffolding, NJEEDS published reports on “wage scarring” post-COVID-19, the impact of scholarships on educational attainment, and post-graduation retention of out-of-state students. The state now has trackable metrics on higher education outcomes that will inform its workshop for 90 higher education institutions in the state. New Jersey is also able to use the platform to share and receive data with others in the organized by the National Association of State Workforce Agencies.

As data flows more freely, so does risk. Interoperability requires strong safeguards to prevent misuse, , or violations of privacy. Risk management isn’t about saying no to data sharing; it’s about designing systems that are secure, ethical, and compliant to protect and institutions from harm. While , , the Children’s Online Privacy Protection Act (COPPA), and protect some of students’ personal information from certain types of disclosure, they are not comprehensive enough to assess risk within an interoperable context.

How to implement: Start by defining your agency’s privacy principles, as modeled by Data Quality Campaign’s . Then assess current tools and practices through a risk analysis that considers factors like the presence of personally identifiable information (PII), encryption practices, and levels of access. Don’t forget to consider each tool and system in light of changing . Agencies should clarify who can access what, under what conditions, and with what oversight. More advanced systems may implement statistical , automated , and . Additionally, everyone, from contractors to internal teams, should receive regular privacy training and operate under clear enforcement policies.
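To make "who can access what, under what conditions, and with what oversight" concrete, the sketch below pairs a simple role-based policy with an audit trail. The role names, sensitivity levels, and log fields are hypothetical, invented for illustration rather than drawn from any agency's actual policy.

```python
from datetime import datetime, timezone

# Hypothetical policy: which sensitivity levels each role may see.
ACCESS_POLICY = {
    "data_steward": {"aggregate", "deidentified", "pii"},
    "analyst": {"aggregate", "deidentified"},
    "public": {"aggregate"},
}

# Every request is recorded, whether or not it is granted, so that
# oversight bodies can review access after the fact.
audit_log = []


def request_access(user, role, dataset, sensitivity):
    """Decide whether a request is allowed and record it in the audit log."""
    # Unknown roles get an empty permission set, so they are denied by default.
    allowed = sensitivity in ACCESS_POLICY.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "dataset": dataset,
        "sensitivity": sensitivity,
        "allowed": allowed,
    })
    return allowed
```

Denying unknown roles by default and logging refusals as well as approvals are design choices that favor oversight; a production system would also need authentication and tamper-resistant log storage, which this sketch leaves out.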

Case study: Indiana appointed privacy officers across various state agencies, along with a chief position. These officers track legislative developments, distribute grants for capacity-building projects, audit compliance, and support agency-wide training. Beyond strengthening policies to avoid inadvertent leaks, they’ve also helped vet third-party vendors, ensuring student data isn’t being misused or sold. By building a formal privacy infrastructure, Indiana has both reduced risk and reinforced public trust.

Even the best-intentioned collaboration can’t proceed without the legal scaffolding to support it. Data use agreements (DUAs) and memorandums of understanding (MOUs) are critical tools that define roles, responsibilities, and protections, including the project’s purpose, what PII will be shared, and how access permissions will be distributed. DUAs specify what data is shared, with whom, for what purpose, and for how long. MOUs clarify broader terms: who owns the data, how it will be secured, and how staff will be allocated. Together, these documents lay the legal foundation for safe, purposeful data exchange. They also establish mechanisms for agencies and institutions to assess and ensure that data-sharing partners comply with federal and state law, safeguarding privacy and maintaining trust throughout the collaboration.

How to implement: Use , like those from the , as starting points. Tailor them to clarify each party’s obligations, governance roles, and mechanisms for amendment. Legal agreements should connect directly to the data governance structures discussed earlier, reinforcing consistent workflows and compliance. Periodically revisit agreements to reflect changes in law, technology, or policy priorities.

Case study: To better understand outcomes for noncredit students, built upon an existing data-sharing agreement to request data on students who complete noncredit coursework, focusing on employment and performance outcomes for community college students. The state updated an existing MOU between five state agencies to form a new data integration workforce with eight key stakeholders, all of whom fed individual data into a newly designed data warehouse. The revised system enabled the state to collect and aggregate information across many previously unlinked public workforce and higher education systems, ultimately revealing new insights and patterns. As a result, the public dashboard is now available for public use.

Interoperability is a distinct project, but building readiness can start anywhere: in any institution, of any size, at any time. Despite two decades of literature on interoperability in education, identifying where to begin can often be one of the biggest challenges. But the path forward doesn’t need to be complicated. Fortunately, organizations can take several practical steps to build momentum:

Start with the questions. What gaps or opportunities exist in your state’s evidence-based policymaking? What education, workforce, or health questions are leaders wrestling with? What new insights could be gained by integrating data across agencies or states? Reflecting on these priorities helps focus energy on high-impact, achievable projects.

Prioritize easy wins. From that list, identify where you already have momentum. Existing state laws, like those authorizing longitudinal data systems, can make some efforts easier to launch. Target simpler administrative projects and willing partners to establish proof points and build a base for more ambitious efforts down the line.

Fix downstream, think upstream. Ensure consistency and accuracy in how data enters your systems across formats, agencies, and timelines. Early investment in shared , secure infrastructure, and clear metadata will make future efforts (like dashboards, visualizations, or cross-agency disbursements) more effective and trustworthy.

Lean into regional collaboration. Communities of practice offer resources, certifications, and shared strategies. The collects case studies and has even published a based on work with 150 school districts in 34 states. The operates a multistate longitudinal data exchange for six nearby states. and are great options for those seeking to publicly demonstrate their commitment to secure interoperability with credentials. And those not ready to join an can find meaningful resources in both the ’ comparative analysis of longitudinal data systems and the ’s extensive case studies and policy guides.

Plan for sustainable funding. Interoperability efforts often raise questions about long-term costs, especially for technical infrastructure and staffing. While pandemic-era aid sparked historic , many of those grants are sunsetting. Agencies can still find support across federal, state, and subject-matter lines (though funding availability is subject to change).

Within the public sphere, the has supported data system upgrades for enrollment and case management. The Institute of Education Sciences’ 10 partner with state education departments on programs and research to improve student outcomes, offering both funding and technical support. In addition, the National Center for Education Statistics disseminates grants through the , with a focus on efficiency, resource allocation, and labor market results. And education agencies aren’t the only source: the Department of Labor’s funds states’ efforts to connect workforce and education data in longitudinal databases.

Private grantmakers are stepping up, too. The is investing in bolstering interoperable systems so students nationwide gain valuable postsecondary credentials, and it helps fund Coleridge Initiative’s , which supports states, postsecondary institutions, and research organizations in building accessible, secure, and scalable data infrastructure. From studying unemployment claims to teacher vacancies, the project promotes cross-stakeholder engagement. The program from Results for America also supports data-driven decision-making by local program leaders, many of whom have launched multi-agency collaborations serving students in vulnerable conditions.

To move from planning to implementation and actually operationalize the building blocks, agencies and institutions need a clear sense of where they are, where the gaps lie, and what’s needed to move forward.

The diagnostic tool below is designed to help agencies surface existing strengths, identify areas of growth, and prioritize next steps. It is not necessarily a checklist to complete, but a practical framework for reflection, coordination, and action. Whether launching a new project or improving an existing one, these questions can help teams align their strategies and structure their efforts.

Whether an agency is examining students’ paths into high-demand careers or tracking university retention, it doesn’t need to start from scratch. Understanding common barriers and investing deliberately in foundational building blocks can transform interoperability from a distant ideal into a practical reality. Even where this is a new endeavor, readiness is attainable. It spurs systems to connect thoughtfully, securely, and with purpose, unlocking collective impact to better serve students and communities. In the end, interoperability’s power does not stem from the technology; it stems from the people using these tools so collaboration yields results.

Editorial disclosure: This brief was supported by the Gates Foundation. The views expressed here are solely those of the authors and are not intended to reflect the views of the Gates Foundation or New America.