A Chapter of: It’s Not Just the Content, It’s the Business Model: Democracy’s Online Speech Challenge

A Tale of Two Algorithms

In recent years, policymakers and tech executives alike have begun to invoke the almighty algorithm as a solution for controlling the harmful effects of online speech. Acknowledging the pitfalls of relying primarily on human content moderators, technology companies promote the idea that a technological breakthrough, something that will automatically eliminate the worst kinds of speech, is just around the corner.1

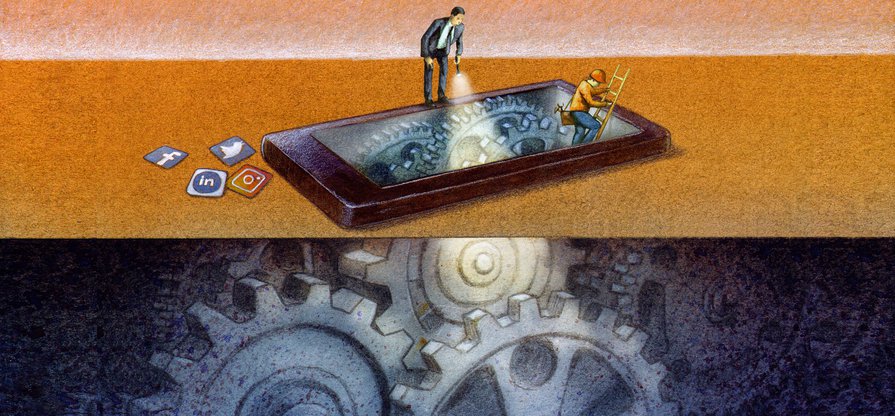

But the public debate often conflates two very different types of algorithmic systems that play completely different roles in policing, shaping, and amplifying online speech.2

First, there are content-shaping algorithms. These systems determine the content that each individual user sees online, including user-generated or organic posts and paid advertisements. Some of the most visible examples of content-shaping algorithms include Facebook’s News Feed, Twitter’s Timeline, and YouTube’s recommendation engine.3 Algorithms also determine which users should be shown a given ad. The advertiser usually sets the targeting parameters (such as demographics and presumed interests), but the platform’s algorithmic systems pick the specific individuals who will see the ad and determine the ad’s placement within the platform. Both kinds of personalization are only possible because of the vast troves of detailed information that the companies have accumulated about their users and their online behavior, often without the knowledge or consent of the people being targeted.4

While companies describe such algorithms as matching users with the content that is most relevant to them, this relevance is measured by predicted engagement: how likely users are to click, comment on, or share a piece of content. Companies make these guesses based on factors like users’ previous interactions with similar content and the interactions of other users who are similar to them. The more accurate these guesses are, the more valuable the company becomes for advertisers, leading to ever-increasing profits for internet platforms. This is why mass data collection is so central to Big Tech’s business models: companies need to surveil internet users in order to make predictions about their future behavior.5
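To make the idea of ranking by predicted engagement concrete, here is a minimal sketch in Python. It is not any company's actual system: the action weights, the 0.7/0.3 blend between a user's own history and that of similar users, and every name in the code are assumptions chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    topic: str


# Hypothetical weights: how much each predicted action counts toward "engagement".
ACTION_WEIGHTS = {"click": 1.0, "comment": 2.0, "share": 3.0}


def predicted_engagement(post, user_history, similar_users_history):
    """Toy engagement score for one post and one user.

    Both history arguments map (topic, action) to an estimated probability
    that the user (or users similar to them) takes that action on that topic.
    """
    score = 0.0
    for action, weight in ACTION_WEIGHTS.items():
        own = user_history.get((post.topic, action), 0.0)
        peers = similar_users_history.get((post.topic, action), 0.0)
        # Blend the two signals named in the text; the 0.7/0.3 split is arbitrary.
        score += weight * (0.7 * own + 0.3 * peers)
    return score


def rank_feed(posts, user_history, similar_users_history):
    """Order posts from highest to lowest predicted engagement."""
    return sorted(
        posts,
        key=lambda p: predicted_engagement(p, user_history, similar_users_history),
        reverse=True,
    )
```

Note what the sketch leaves out: nothing in the score asks whether a post is accurate or harmful. The only thing being optimized is the likelihood of a click, comment, or share, which is the point the paragraph above makes about how "relevance" is measured.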

Second, we have content moderation algorithms, built to detect content that breaks the company’s rules and remove it from the platform. Companies have made tremendous investments in these technologies. They are increasingly able to identify and remove some kinds of content without human involvement, but this approach has limitations.6

Content moderation algorithms work best when they have a hard and fast rule to follow. This works well when seeking to eliminate images of a distinct symbol, like a swastika. But machine-driven moderation becomes more difficult, if not impossible, when content is violent, hateful, or misleading and yet has some public interest value. Companies are, in effect, adjudicating such content, but this requires the ability to reason, that is, to employ careful consideration of context and nuance. Only humans with the appropriate training can make these kinds of judgments; this is beyond the capability of automated decision-making systems.7 Thus, in many cases, human reviewers remain involved in the moderation process. The consequences that this type of work has had for human reviewers have become an important area of study unto themselves, but lie beyond the scope of this report.8
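The contrast between a hard and fast rule and a judgment call can be illustrated with a short Python sketch. It is deliberately simplified and rests on assumptions: the blocklist, the list of sensitive terms, and the `moderate` function are hypothetical, and exact hash matching stands in for the more robust image-matching techniques that real platforms are likely to use.

```python
import hashlib

# Hypothetical blocklist: fingerprints of images already known to break the rules.
BANNED_IMAGE_HASHES = {hashlib.sha256(b"known-banned-symbol-image").hexdigest()}

# Hypothetical terms that may signal violent content but say nothing about context.
SENSITIVE_TERMS = {"attack", "massacre"}


def moderate(item):
    """Return 'remove', 'allow', or 'human_review' for a (kind, payload) pair.

    kind is 'image' (payload is bytes) or 'text' (payload is a string).
    """
    kind, payload = item
    if kind == "image":
        # Hard and fast rule: an exact match against a known fingerprint.
        digest = hashlib.sha256(payload).hexdigest()
        return "remove" if digest in BANNED_IMAGE_HASHES else "allow"
    # For text, a keyword match cannot tell news reporting from incitement,
    # so the sketch escalates to a person instead of deciding on its own.
    words = {w.strip(".,!?").lower() for w in payload.split()}
    if words & SENSITIVE_TERMS:
        return "human_review"
    return "allow"
```

In this sketch, a known banned image is removed automatically, while a sentence such as "Reporters document the massacre." is only flagged for human review, because the rule has no way to weigh its public interest value.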

Both types of systems are extraordinarily opaque, and thus unaccountable. Companies can and do change their algorithms anytime they want, without any legal obligation to notify the public. While content-shaping and ad-targeting algorithms work to show you posts and ads that they think are most relevant to your interests, content moderation processes (including algorithms) work alongside this stream of content, doing their best to identify and remove those posts that might cause harm. Let’s look at some examples of these dynamics in real life.

Citations

1. Harwell, Drew. 2018. “AI Will Solve Facebook’s Most Vexing Problems, Mark Zuckerberg Says. Just Don’t Ask When or How.” Washington Post.

2. It is worth noting that terms like algorithms, machine learning, and artificial intelligence have very specific meanings in computer science, but are used more or less interchangeably in policy discussions to refer to computer systems that use Big Data analytics to perform tasks that humans would otherwise do. Perhaps the most useful non-technical definition of an algorithm is Cathy O’Neil’s: “Algorithms are opinions embedded in code” (see O’Neil, Cathy. 2017. The Era of Blind Faith in Big Data Must End).

3. Singh, Spandana. 2019. Rising Through the Ranks: How Algorithms Rank and Curate Content in Search Results and on News Feeds. Washington D.C.: New America’s Open Technology Institute.

4. Singh, Spandana. 2020. Special Delivery: How Internet Platforms Use Artificial Intelligence to Target and Deliver Ads. Washington D.C.: New America’s Open Technology Institute.

5. Zuboff, Shoshana. 2019. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. First edition. New York: PublicAffairs.

6. Singh, Spandana. 2019. Everything in Moderation: An Analysis of How Internet Platforms Are Using Artificial Intelligence to Moderate User-Generated Content. Washington D.C.: New America’s Open Technology Institute.

7. Gray, Mary L., and Siddharth Suri. 2019. Ghost Work: How to Stop Silicon Valley from Building a New Global Underclass. Boston: Houghton Mifflin Harcourt; Gorwa, Robert, Reuben Binns, and Christian Katzenbach. 2020. “Algorithmic Content Moderation: Technical and Political Challenges in the Automation of Platform Governance.” Big Data & Society 7(1): 205395171989794.

8. Newton, Casey. 2020. “YouTube Moderators Are Being Forced to Sign a Statement Acknowledging the Job Can Give Them PTSD.” The Verge; Roberts, Sarah T. 2019. Behind the Screen: Content Moderation in the Shadows of Social Media. New Haven: Yale University Press.